Apimuk Sornsaeng

SMT-AD: a scalable quantum-inspired anomaly detection approach

Apr 07, 2026

Abstract: Quantum-inspired tensor network algorithms have been shown to be effective and efficient models for machine learning tasks, including anomaly detection. Here, we propose a highly parallelizable quantum-inspired approach, which we call SMT-AD (Superposition of Multiresolution Tensors for Anomaly Detection). It is based on a superposition of bond-dimension-1 matrix product operators that transform the input data with a Fourier-assisted feature embedding, where the number of learnable parameters grows linearly with the feature size, the number of embedding resolutions, and the number of additional components in the matrix product operator structure. We demonstrate successful anomaly detection on standard datasets, including credit card transactions, and find that, even with minimal configurations, it achieves competitive performance against established anomaly detection baselines. Furthermore, it provides a straightforward way to make the model lighter, and even to improve its performance, by highlighting the most relevant input features.
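
The abstract only outlines the architecture, so what follows is a toy sketch under stated assumptions, not the authors' SMT-AD implementation. It illustrates two points: a bond-dimension-1 matrix product operator acting on a feature embedding keeps the parameter count linear in the number of features, embedding resolutions, and components, and a superposition of K such operators can be evaluated without ever building the exponentially large vector. It assumes each feature is embedded independently (a product-form embedding). The embedding `fourier_embed`, the class `ToySuperposedMPO`, the output dimension `d_out`, and the use of the squared norm as the output score are all hypothetical choices; how SMT-AD trains its parameters and converts outputs into anomaly scores is not shown.

```python
import numpy as np


def fourier_embed(x, n_res):
    """Hypothetical Fourier feature map: scalar x -> unit vector of length 1 + 2 * n_res."""
    freqs = np.arange(1, n_res + 1)
    v = np.concatenate(([1.0], np.cos(2 * np.pi * freqs * x), np.sin(2 * np.pi * freqs * x)))
    return v / np.linalg.norm(v)


class ToySuperposedMPO:
    """Hypothetical toy: sum of K bond-dimension-1 MPOs, each a tensor product of local matrices."""

    def __init__(self, n_features, n_res, n_components, d_out=2, seed=0):
        rng = np.random.default_rng(seed)
        self.n_res = n_res
        d_in = 1 + 2 * n_res
        # One local (d_out x d_in) matrix per (component, feature) pair.
        self.A = rng.normal(scale=0.5, size=(n_components, n_features, d_out, d_in))

    def n_params(self):
        # K * N * d_out * d_in: linear in features, resolutions and components.
        return self.A.size

    def score(self, x):
        """Squared norm of sum_k (tensor_i A[k, i] phi(x_i)), never forming the d_out**N vector."""
        phis = np.stack([fourier_embed(xi, self.n_res) for xi in x])  # (N, d_in)
        v = np.einsum("knoi,ni->kno", self.A, phis)  # local outputs, (K, N, d_out)
        G = np.einsum("kno,lno->kln", v, v)  # per-site overlaps between components, (K, K, N)
        # Overlap of two product vectors = product of local overlaps; sum over all (k, l) pairs.
        return float(np.prod(G, axis=2).sum())


model = ToySuperposedMPO(n_features=4, n_res=3, n_components=2)
print(model.n_params())  # 2 * 4 * 2 * 7 = 112
print(model.score(np.array([0.1, 0.5, 0.9, 0.3])))
```

Because the overlap of two product vectors factorizes into local overlaps, one evaluation costs on the order of K·N·d_out·d_in + K²·N·d_out operations, and the per-feature work is independent across features, which is one plausible source of the parallelism the abstract mentions.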

Quantum Next Generation Reservoir Computing: An Efficient Quantum Algorithm for Forecasting Quantum Dynamics

Aug 28, 2023

Abstract: Next Generation Reservoir Computing (NG-RC) is a modern class of model-free machine learning that enables accurate forecasting of time series data generated by dynamical systems. We demonstrate that NG-RC can accurately predict full many-body quantum dynamics, rather than merely the dynamics of observables, which is the conventional application of reservoir computing. In addition, we apply a technique, which we refer to as skipping ahead, to predict far-future states accurately without the need to extract information about the intermediate states. However, adopting a classical NG-RC for many-body quantum dynamics prediction is computationally prohibitive due to the large Hilbert space of the sample input data. In this work, we propose an end-to-end quantum algorithm for forecasting many-body quantum dynamics with a quantum computational speedup via the block-encoding technique. This proposal presents an efficient model-free quantum scheme to forecast quantum dynamics coherently, bypassing the inductive biases incurred in a model-based approach.
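
To make the classical baseline concrete, the sketch below follows the common NG-RC recipe: a feature vector made of a constant, the current and k-1 delayed states, and all unique quadratic monomials of those, followed by a linear readout fitted by ridge regression. Skipping ahead is modelled here, as an assumption, by fitting the readout to map the features at time t directly to the state at t + skip; the paper's exact construction and its block-encoded quantum version are not reproduced. The damped-rotation data and the values of `k`, `skip`, and `alpha` are illustrative choices.

```python
import numpy as np
from itertools import combinations_with_replacement


def ngrc_features(series, k):
    """NG-RC feature vectors: constant, k time-delayed states, and their unique quadratic monomials.

    series has shape (T, D); row j of the result belongs to time t = j + k - 1.
    """
    rows = []
    for t in range(k - 1, len(series)):
        lin = series[t - k + 1 : t + 1][::-1].ravel()  # x_t, x_{t-1}, ..., x_{t-k+1}
        quad = [lin[a] * lin[b] for a, b in combinations_with_replacement(range(lin.size), 2)]
        rows.append(np.concatenate(([1.0], lin, quad)))
    return np.array(rows)


def fit_ngrc(series, k=2, skip=1, alpha=1e-8):
    """Ridge-regression readout mapping the features at time t to the state at t + skip (skip >= 1)."""
    F = ngrc_features(series, k)[:-skip]  # features for t = k-1, ..., T-1-skip
    Y = series[k - 1 + skip :]  # matching targets x_{t+skip}
    return np.linalg.solve(F.T @ F + alpha * np.eye(F.shape[1]), F.T @ Y)


def predict(W, window, k):
    """Predict the state `skip` steps (as used when fitting W) after the last row of `window`."""
    return ngrc_features(window[-k:], k)[0] @ W


# Hypothetical toy system: a slowly damped rotation in 2D.
theta, decay = 0.1, 0.999
M = decay * np.array([[np.cos(theta), -np.sin(theta)], [np.sin(theta), np.cos(theta)]])
states = [np.array([1.0, 0.0])]
for _ in range(400):
    states.append(M @ states[-1])
series = np.array(states)

W = fit_ngrc(series[:300], k=2, skip=10)  # learn a direct 10-step jump
pred = predict(W, series[:351], k=2)  # forecast x_360 from x_349 and x_350
print(np.abs(pred - series[360]).max())
```

With k delays of a D-dimensional state, the feature vector has 1 + kD + kD(kD+1)/2 entries, so when the "state" is a many-body quantum state whose dimension grows exponentially with system size, the classical feature construction and regression quickly become prohibitive, which is the bottleneck the abstract targets.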

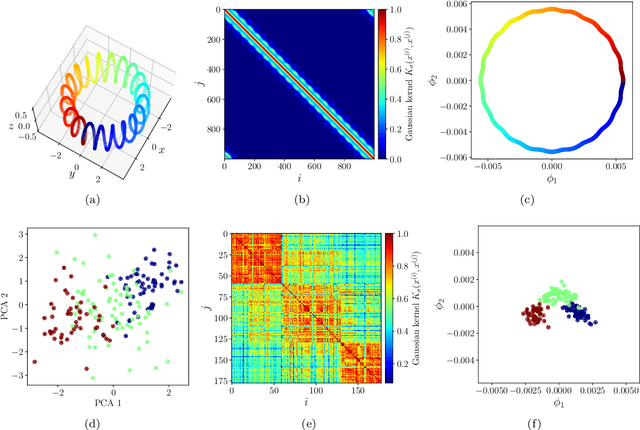

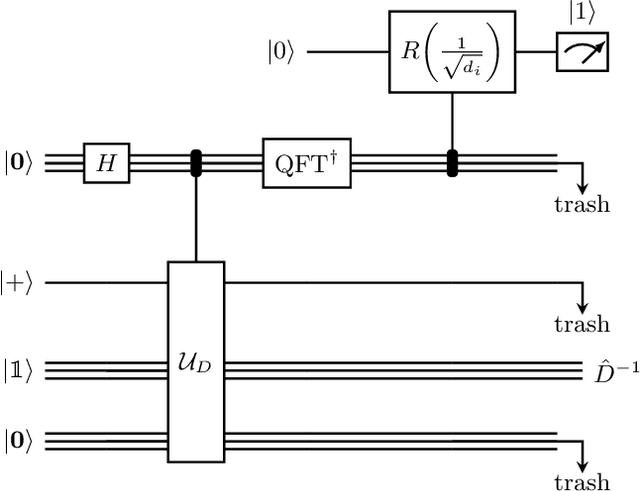

Quantum diffusion map for nonlinear dimensionality reduction

Jun 14, 2021

Abstract: Inspired by random walks on graphs, the diffusion map (DM) is a class of unsupervised machine learning methods that automatically identifies low-dimensional data structure hidden in a high-dimensional dataset. In recent years, among its many applications, DM has been successfully applied to discover relevant order parameters in many-body systems, enabling the automatic classification of quantum phases of matter. However, the classical DM algorithm is computationally prohibitive for large datasets, and any reduction of its time complexity would be desirable. With a quantum computational speedup in mind, we propose a quantum algorithm for DM, termed quantum diffusion map (qDM). Our qDM takes as input N classical data vectors, performs an eigendecomposition of the Markov transition matrix in time $O(\log^3 N)$, and classically constructs the diffusion map via the readout (tomography) of the eigenvectors, giving a total runtime of $O(N^2 \,\text{polylog}\, N)$. Lastly, the quantum subroutines in qDM for constructing a Markov transition operator and for analyzing its spectral properties can also be useful for other random-walk-based algorithms.
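
For reference, the classical algorithm that qDM aims to speed up can be sketched in a few lines: build a Gaussian affinity matrix, normalize it into a Markov transition matrix, and use its leading nontrivial eigenvectors, scaled by powers of their eigenvalues, as low-dimensional coordinates. This is a basic classical DM without the density-normalization variants, not the qDM algorithm; the kernel bandwidth `eps`, the diffusion time `t`, and the two-blob toy data are assumptions for illustration.

```python
import numpy as np


def diffusion_map(X, eps, n_components=2, t=1):
    """Basic classical diffusion map with a Gaussian kernel.

    X has shape (N, d). Returns the (N, n_components) diffusion coordinates
    lambda_j**t * psi_j and the eigenvalues lambda_j, skipping the trivial pair.
    """
    sq_dists = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    K = np.exp(-sq_dists / eps)  # Gaussian affinities, O(N^2) memory
    d_inv_sqrt = 1.0 / np.sqrt(K.sum(axis=1))
    # P = D^{-1} K is the Markov transition matrix. S = D^{-1/2} K D^{-1/2} is symmetric
    # and has the same eigenvalues, so a symmetric eigensolver can be used.
    S = K * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    evals, evecs = np.linalg.eigh(S)  # dense eigendecomposition, O(N^3) time
    order = np.argsort(evals)[::-1]  # largest eigenvalue (= 1) first
    evals, evecs = evals[order], evecs[:, order]
    psi = evecs * d_inv_sqrt[:, None]  # right eigenvectors of P: psi = D^{-1/2} v
    lam = evals[1 : n_components + 1]  # drop lambda_0 = 1 (constant psi_0)
    return psi[:, 1 : n_components + 1] * lam**t, lam


# Hypothetical data: two well-separated Gaussian blobs in 3D.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.3, (20, 3)), rng.normal(0.0, 0.3, (20, 3)) + [3.0, 0.0, 0.0]])
coords, lam = diffusion_map(X, eps=1.0)
print(lam)
print(np.sign(coords[:, 0]))  # sign pattern of the first diffusion coordinate
```

The dense eigendecomposition step costs O(N^3) time and the kernel matrix O(N^2) memory, which is the cost that the quantum eigendecomposition in qDM is meant to reduce.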
