Annabelle Bohrdt

Fluctuation based interpretable analysis scheme for quantum many-body snapshots

Apr 12, 2023

Abstract: Microscopically understanding and classifying phases of matter is at the heart of strongly-correlated quantum physics. With quantum simulations, genuine projective measurements (snapshots) of the many-body state can be taken, which include the full information of correlations in the system. The rise of deep neural networks has made it possible to routinely solve abstract processing and classification tasks of large datasets, which can act as a guiding hand for quantum data analysis. However, though proven to be successful in differentiating between different phases of matter, conventional neural networks mostly lack interpretability on a physical footing. Here, we combine confusion learning with correlation convolutional neural networks, which yields fully interpretable phase detection in terms of correlation functions. In particular, we study thermodynamic properties of the 2D Heisenberg model, whereby the trained network is shown to pick up qualitative changes in the snapshots above and below a characteristic temperature where magnetic correlations become significantly long-range. We identify the full counting statistics of nearest neighbor spin correlations as the most important quantity for the decision process of the neural network, which go beyond averages of local observables. With access to the fluctuations of second-order correlations -- which indirectly include contributions from higher order, long-range correlations -- the network is able to detect changes of the specific heat and spin susceptibility, the latter being in analogy to magnetic properties of the pseudogap phase in high-temperature superconductors. By combining the confusion learning scheme with transformer neural networks, our work opens new directions in interpretable quantum image processing that is sensitive to long-range order.

Correlator Convolutional Neural Networks: An Interpretable Architecture for Image-like Quantum Matter Data

Nov 06, 2020

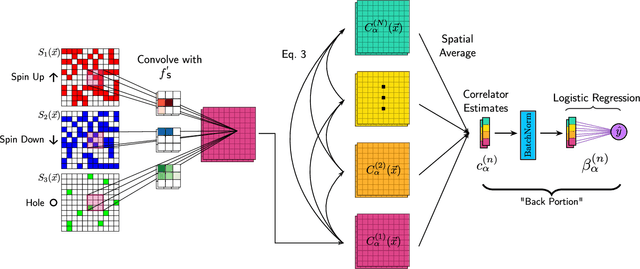

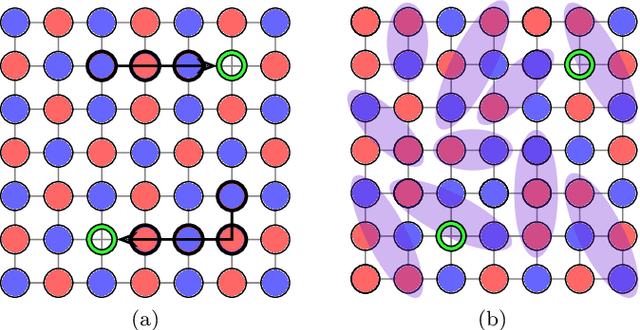

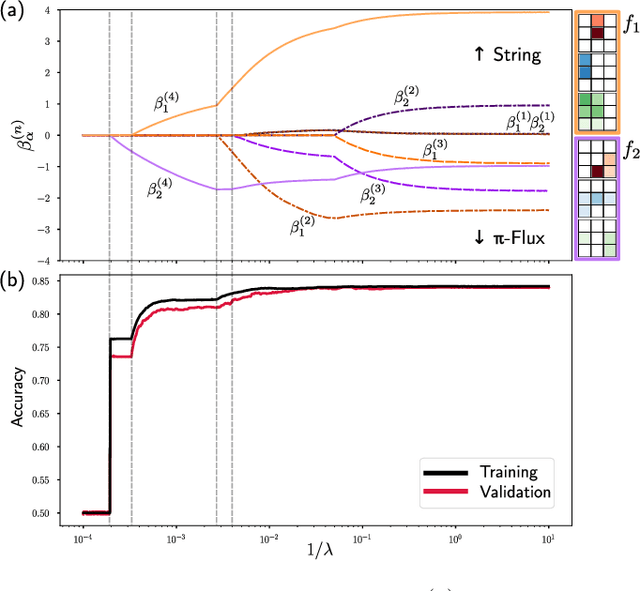

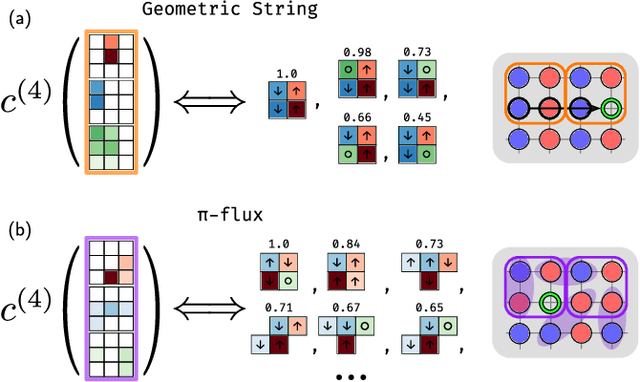

Abstract: Machine learning models are a powerful theoretical tool for analyzing data from quantum simulators, in which results of experiments are sets of snapshots of many-body states. Recently, they have been successfully applied to distinguish between snapshots that cannot be identified using traditional one- and two-point correlation functions. Thus far, the complexity of these models has inhibited new physical insights from this approach. Here, using a novel set of nonlinearities we develop a network architecture that discovers features in the data which are directly interpretable in terms of physical observables. In particular, our network can be understood as uncovering high-order correlators which significantly differ between the data studied. We demonstrate this new architecture on sets of simulated snapshots produced by two candidate theories approximating the doped Fermi-Hubbard model, which is realized in state-of-the-art quantum gas microscopy experiments. From the trained networks, we uncover that the key distinguishing features are fourth-order spin-charge correlators, providing a means to compare experimental data to theoretical predictions. Our approach lends itself well to the construction of simple, end-to-end interpretable architectures and is applicable to arbitrary lattice data, thus paving the way for new physical insights from machine learning studies of experimental as well as numerical data.
