Anna Wienhard

Vector-valued Distance and Gyrocalculus on the Space of Symmetric Positive Definite Matrices

Oct 26, 2021

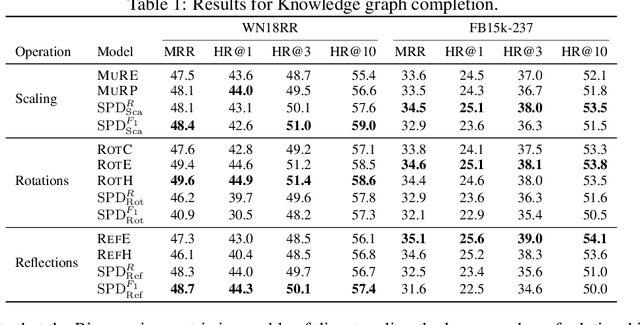

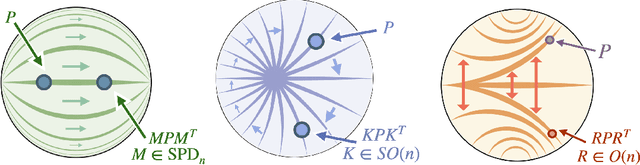

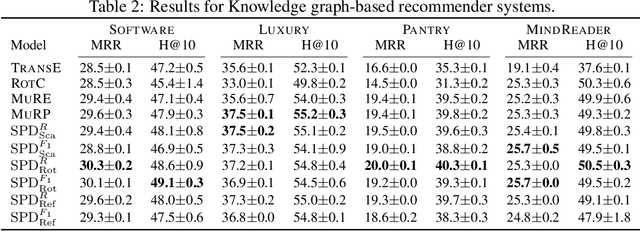

Abstract: We propose the use of the vector-valued distance to compute distances and extract geometric information from the manifold of symmetric positive definite matrices (SPD), and develop gyrovector calculus, constructing analogs of vector space operations in this curved space. We implement these operations and showcase their versatility in the tasks of knowledge graph completion, item recommendation, and question answering. In experiments, the SPD models outperform their equivalents in Euclidean and hyperbolic space. The vector-valued distance allows us to visualize embeddings, showing that the models learn to disentangle representations of positive samples from negative ones.
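As a rough illustration of the idea in this abstract, the sketch below computes a vector-valued distance between two SPD matrices as the sorted logarithms of the eigenvalues of A⁻¹B, using the standard affine-invariant geometry on SPD. This is an assumption about the general construction, not the authors' implementation; the function names `vector_valued_distance` and `riemannian_distance` are hypothetical.

```python
import numpy as np


def vector_valued_distance(a, b):
    """Sorted log-eigenvalues of a^{-1} b for SPD matrices a and b.

    Illustrative sketch only; the function name is hypothetical.
    """
    # With a = L L^T, the matrix L^{-1} b L^{-T} is symmetric and has
    # the same eigenvalues as a^{-1} b.
    l = np.linalg.cholesky(a)
    l_inv = np.linalg.inv(l)
    m = l_inv @ b @ l_inv.T
    m = (m + m.T) / 2.0  # remove round-off asymmetry before eigvalsh
    eigvals = np.linalg.eigvalsh(m)
    # Return the log-eigenvalues in descending order.
    return np.sort(np.log(eigvals))[::-1]


def riemannian_distance(a, b):
    """Affine-invariant Riemannian distance: Euclidean norm of the vector above."""
    return float(np.linalg.norm(vector_valued_distance(a, b)))


if __name__ == "__main__":
    a = np.eye(2)
    b = np.diag([np.e, np.e ** 2])
    print(vector_valued_distance(a, b))
    print(riemannian_distance(a, b))
```

The vector carries more information than a single number: different norms or functions applied to it give different distances, and the Euclidean norm recovers the usual affine-invariant Riemannian distance.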

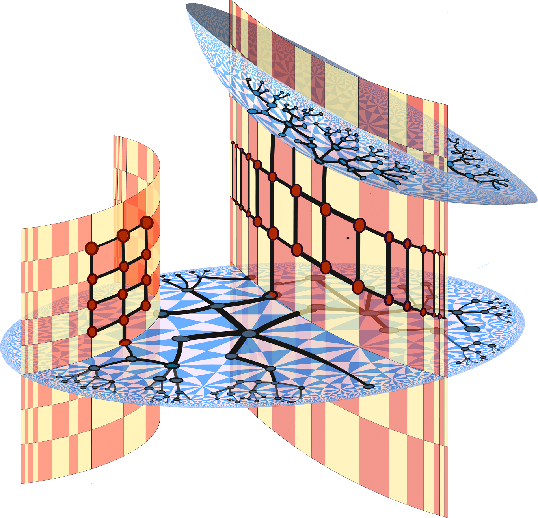

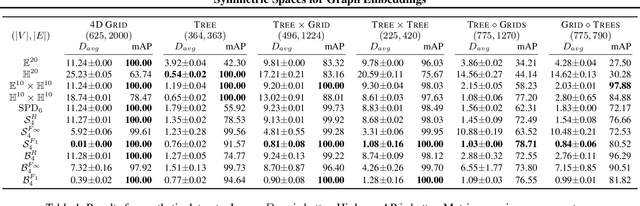

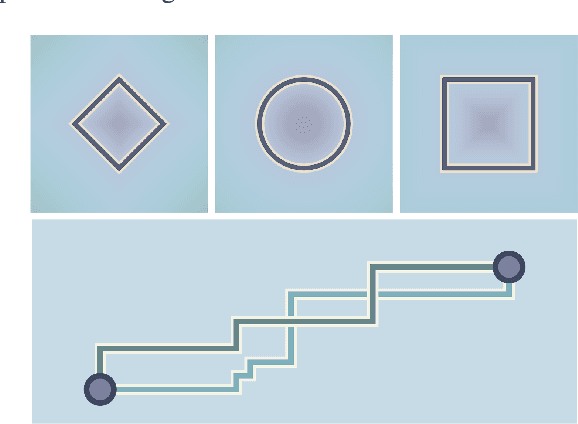

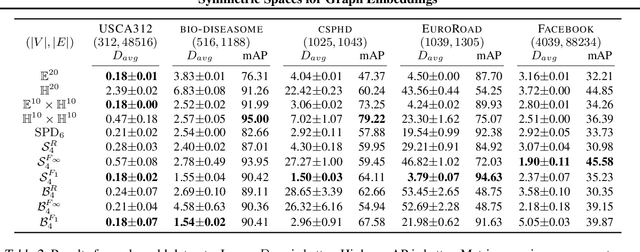

Symmetric Spaces for Graph Embeddings: A Finsler-Riemannian Approach

Jun 09, 2021

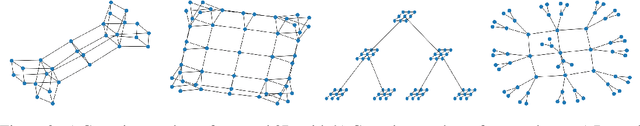

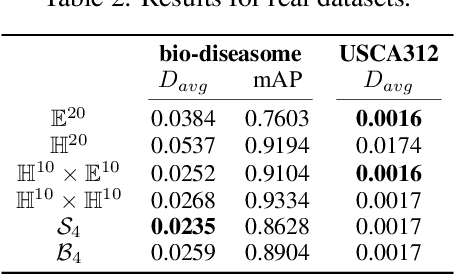

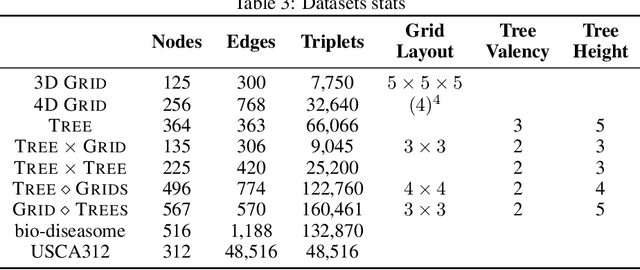

Abstract: Learning faithful graph representations as sets of vertex embeddings has become a fundamental intermediary step in a wide range of machine learning applications. We propose the systematic use of symmetric spaces in representation learning, a class encompassing many of the previously used embedding targets. This enables us to introduce a new method, the use of Finsler metrics integrated in a Riemannian optimization scheme, that better adapts to dissimilar structures in the graph. We develop a tool to analyze the embeddings and infer structural properties of the datasets. For implementation, we choose Siegel spaces, a versatile family of symmetric spaces. Our approach outperforms competitive baselines for graph reconstruction tasks on various synthetic and real-world datasets. We further demonstrate its applicability on two downstream tasks, recommender systems and node classification.

Hermitian Symmetric Spaces for Graph Embeddings

May 11, 2021

Abstract: Learning faithful graph representations as sets of vertex embeddings has become a fundamental intermediary step in a wide range of machine learning applications. The quality of the embeddings is usually determined by how well the geometry of the target space matches the structure of the data. In this work we learn continuous representations of graphs in spaces of symmetric matrices over C. These spaces offer a rich geometry that simultaneously admits hyperbolic and Euclidean subspaces, and are amenable to analysis and explicit computations. We implement an efficient method to learn embeddings and compute distances, and develop the tools to operate with such spaces. The proposed models are able to automatically adapt to very dissimilar arrangements without any a priori estimates of graph features. On various datasets with very diverse structural properties and reconstruction measures, our model ties the results of competitive baselines for geometrically pure graphs and outperforms them for graphs with mixed geometric features, showcasing the versatility of our approach.
