Anna Rodionova

Hybridizing the 1/5-th Success Rule with Q-Learning for Controlling the Mutation Rate of an Evolutionary Algorithm

Jun 19, 2020

Abstract: It is well known that evolutionary algorithms (EAs) achieve peak performance only when their parameters are suitably tuned to the given problem. Moreover, the best parameter values can change during the optimization process. Parameter control mechanisms are techniques developed to identify and track these values. Recently, a series of rigorous theoretical works confirmed the superiority of several parameter control techniques over EAs with the best possible static parameters. Among these results are examples for controlling the mutation rate of the $(1+\lambda)$~EA when optimizing the OneMax problem. However, it was shown in [Rodionova et al., GECCO'19] that the quality of these techniques strongly depends on the offspring population size $\lambda$. In this work we introduce a new hybrid parameter control technique, HQL, which combines the well-known one-fifth success rule with Q-learning. We demonstrate that the HQL mechanism performs at least as well as all techniques tested in [Rodionova et al., GECCO'19] and, in contrast to previous parameter control methods, does so simultaneously for all offspring population sizes $\lambda$. We also show that the promising performance of HQL is not restricted to OneMax, but extends to several other benchmark problems.
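To make the one-fifth success rule mentioned in the abstract concrete, here is a minimal Python sketch of a $(1+\lambda)$ EA on OneMax whose mutation rate is adapted multiplicatively. It is not the HQL mechanism from the paper: the Q-learning component is omitted entirely, and the update factor `F`, the bounds `p_min` and `p_max`, the initial rate `1/n`, and all function names are assumptions chosen for illustration.

```python
import random


def onemax(x):
    """OneMax fitness: the number of one-bits in the bit string."""
    return sum(x)


def mutate(x, p, rng):
    """Standard bit mutation: flip each bit independently with probability p."""
    return [1 - b if rng.random() < p else b for b in x]


def one_plus_lambda_ea_fifth_rule(n, lam, seed=0, F=1.5, max_gens=100_000):
    """(1+lambda) EA on OneMax with a one-fifth-style mutation rate update.

    Assumed (not taken from the paper): F, the bounds below, the start rate.
    """
    rng = random.Random(seed)
    p_min, p_max = 1.0 / n**2, 0.5   # assumed bounds on the mutation rate
    p = 1.0 / n                      # assumed initial mutation rate
    parent = [rng.randint(0, 1) for _ in range(n)]
    f_parent = onemax(parent)
    gens = 0
    while f_parent < n and gens < max_gens:
        offspring = [mutate(parent, p, rng) for _ in range(lam)]
        best = max(offspring, key=onemax)
        f_best = onemax(best)
        if f_best > f_parent:
            # Success: the best offspring is strictly better, so raise the rate.
            p = min(p_max, p * F)
        else:
            # Failure: lower the rate by F^(1/4). One success cancels four
            # failures, so the rate is stable at a success ratio of 1/5.
            p = max(p_min, p * F ** (-0.25))
        if f_best >= f_parent:
            # Elitist selection: accept offspring that are at least as good.
            parent, f_parent = best, f_best
        gens += 1
    return f_parent, gens, p


if __name__ == "__main__":
    fitness, generations, rate = one_plus_lambda_ea_fifth_rule(n=100, lam=10)
    print(fitness, generations, rate)
```

The pairing of factors `F` and `F ** (-0.25)` is what encodes the "one-fifth" target. How the paper couples this rule with Q-learning is not specified in the abstract and is not modeled here.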

Offspring Population Size Matters when Comparing Evolutionary Algorithms with Self-Adjusting Mutation Rates

Apr 18, 2019

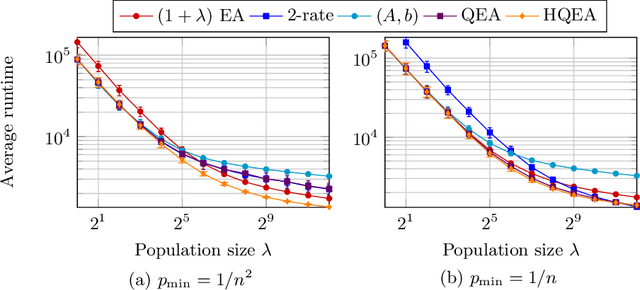

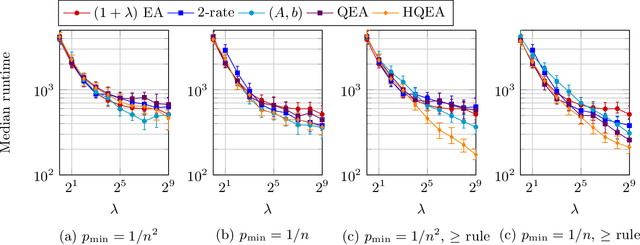

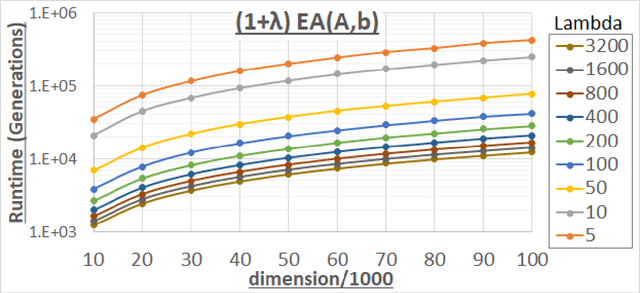

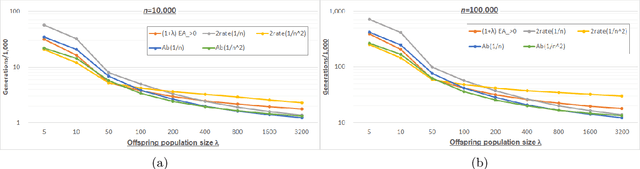

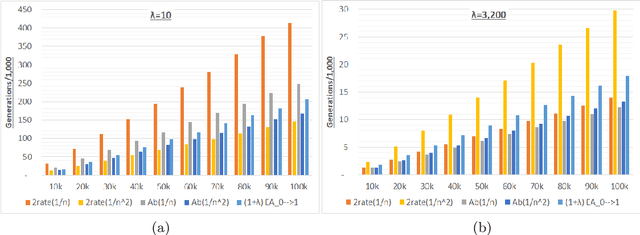

Abstract: We analyze the performance of the 2-rate $(1+\lambda)$ Evolutionary Algorithm (EA) with self-adjusting mutation rate control, its 3-rate counterpart, and a $(1+\lambda)$~EA variant using multiplicative update rules on the OneMax problem. We compare their efficiency for offspring population sizes ranging up to $\lambda=3,200$ and problem sizes up to $n=100,000$. Our empirical results show that the ranking of the algorithms is very consistent across all tested dimensions, but strongly depends on the population size. While for small values of $\lambda$ the 2-rate EA performs best, the multiplicative updates become superior starting from some threshold value of $\lambda$ between 50 and 100. Interestingly, for population sizes around 50, the $(1+\lambda)$~EA with static mutation rates performs on par with the best of the self-adjusting algorithms. We also consider how the lower bound $p_{\min}$ for the mutation rate influences the efficiency of the algorithms. We observe that for the 2-rate EA and the EA with multiplicative update rules the more generous bound $p_{\min}=1/n^2$ gives better results than $p_{\min}=1/n$ when $\lambda$ is small. For both algorithms the situation reverses for large~$\lambda$.
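As a rough illustration of the 2-rate idea named in the abstract, the following Python sketch creates half of the offspring with half the current mutation rate and the other half with twice the current rate, then moves the rate toward whichever produced the best offspring. The abstract does not give the update details, so everything specific here is an assumption: the 50/50 choice between following the winning rate and a random halving or doubling, the clamping interval derived from `p_min`, the initial rate, and the function name `two_rate_ea`.

```python
import random


def two_rate_ea(n, lam, p_min=None, seed=0, max_gens=100_000):
    """Sketch of a 2-rate (1+lambda) EA on OneMax (details are assumptions)."""
    assert lam >= 2 and lam % 2 == 0, "lam must be even so both halves are equal"
    rng = random.Random(seed)
    p_min = 1.0 / n**2 if p_min is None else p_min
    lo, hi = 2 * p_min, 0.25          # keeps both p/2 >= p_min and 2p <= 1/2
    p = min(hi, max(lo, 2.0 / n))     # assumed initial rate
    parent = [rng.randint(0, 1) for _ in range(n)]
    f_parent = sum(parent)
    gens = 0
    while f_parent < n and gens < max_gens:
        candidates = []
        for i in range(lam):
            # First half mutates with p/2, second half with 2p.
            rate = p / 2 if i < lam // 2 else 2 * p
            child = [1 - b if rng.random() < rate else b for b in parent]
            # The random second key breaks fitness ties uniformly at random.
            candidates.append((sum(child), rng.random(), rate, child))
        f_best, _, best_rate, best = max(candidates, key=lambda c: (c[0], c[1]))
        if f_best >= f_parent:
            parent, f_parent = best, f_best
        if rng.random() < 0.5:
            new_p = best_rate                       # follow the winning rate
        else:
            new_p = rng.choice([p / 2, 2 * p])      # random exploration step
        p = min(hi, max(lo, new_p))
        gens += 1
    return f_parent, gens, p


if __name__ == "__main__":
    print(two_rate_ea(n=100, lam=10))
```

Passing `p_min=1.0 / n` instead of the default `1/n^2` gives a way to experiment with the two lower bounds compared in the abstract, though this sketch makes no claim to reproduce the reported results.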
