Aniketh Manjunath

Boosting Segmentation Performance across datasets using histogram specification with application to pelvic bone segmentation

Jan 26, 2021

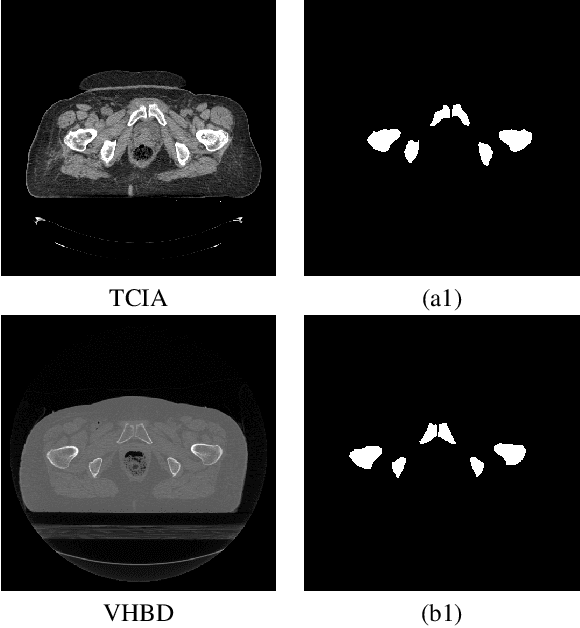

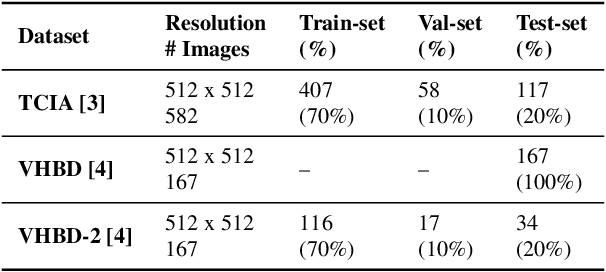

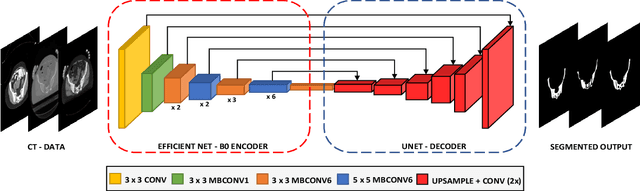

Abstract: Accurate segmentation of pelvic CTs is crucial for the clinical diagnosis of pelvic bone diseases and for planning patient-specific hip surgeries. With the emergence and advancement of deep learning for digital healthcare, several methodologies have been proposed for such segmentation tasks. In a low-data scenario, however, the lack of the abundant data needed to train a deep neural network is a significant bottleneck. In this work, we propose a methodology based on modulation of image tonal distributions and deep learning to boost the performance of networks trained on limited data. The strategy involves pre-processing the test data through histogram specification. This simple yet effective approach can be viewed as a style transfer methodology. The segmentation task uses a U-Net configuration with an EfficientNet-B0 backbone, optimized using an augmented BCE-IoU loss function. This configuration is validated on a total of 284 images taken from two publicly available CT datasets, TCIA (a cancer imaging archive) and the Visible Human Project. The average Dice coefficient and Intersection over Union of 95.7% and 91.9%, respectively, give strong evidence for the effectiveness of the approach, which is highly competitive with state-of-the-art methodologies.
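The histogram specification step mentioned in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' code: the function name `match_histogram` and the choice of a single reference image (for example, one drawn from the training dataset) are assumptions made for illustration. The idea is to remap the intensities of a test image so that its cumulative distribution follows that of the reference.

```python
import numpy as np

def match_histogram(source, reference):
    """Remap `source` intensities so their CDF follows the CDF of `reference`.

    Hypothetical helper for illustration; both inputs are arrays of intensities.
    """
    # Unique intensities, the index of each pixel into them, and their counts.
    src_values, src_idx, src_counts = np.unique(
        source.ravel(), return_inverse=True, return_counts=True
    )
    ref_values, ref_counts = np.unique(reference.ravel(), return_counts=True)

    # Empirical cumulative distribution functions, each ending at 1.0.
    src_cdf = np.cumsum(src_counts) / source.size
    ref_cdf = np.cumsum(ref_counts) / reference.size

    # For each source quantile, look up the reference intensity at that quantile.
    mapped_values = np.interp(src_cdf, ref_cdf, ref_values)

    # Put the remapped intensities back in the original pixel layout.
    return mapped_values[src_idx.reshape(-1)].reshape(source.shape)


# Usage: a darker synthetic "test" image is mapped toward a brighter "reference".
rng = np.random.default_rng(0)
test_image = rng.integers(0, 100, size=(64, 64))
reference_image = rng.integers(100, 256, size=(64, 64))
matched = match_histogram(test_image, reference_image)
```

Because the mapping is monotonic, the relative ordering of pixel intensities is preserved; only the tonal distribution changes, which is why the abstract can describe the step as a form of style transfer.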

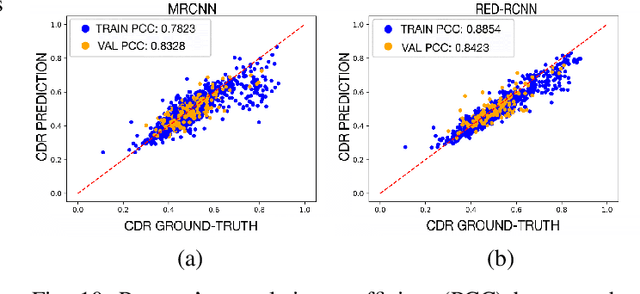

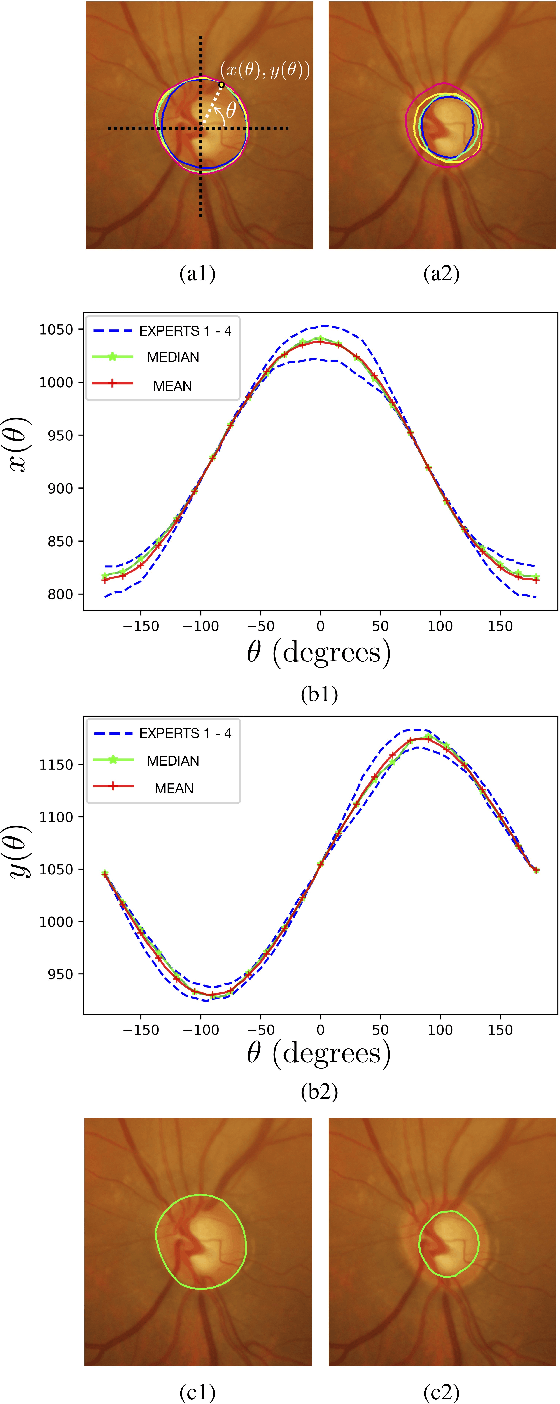

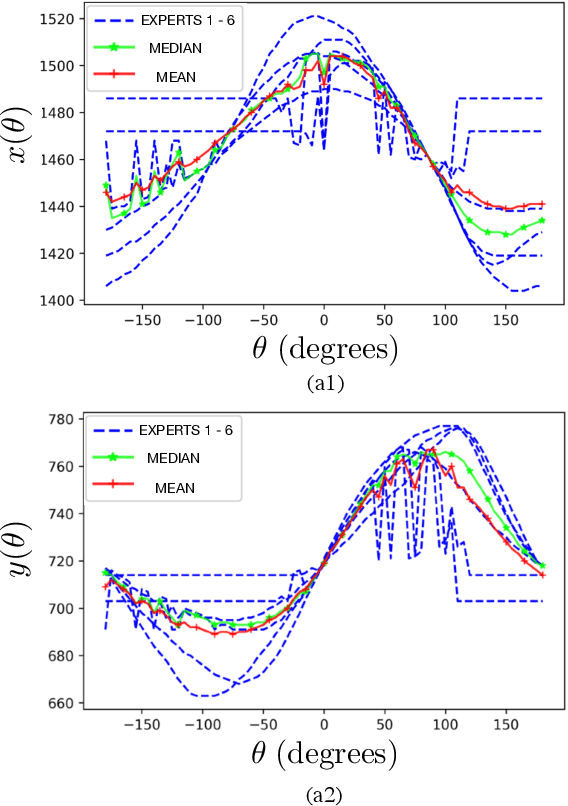

Robust Segmentation of Optic Disc and Cup from Fundus Images Using Deep Neural Networks

Dec 13, 2020

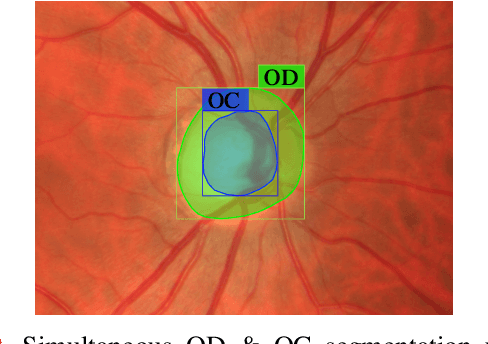

Abstract: The optic disc (OD) and optic cup (OC) are regions of prominent clinical interest in a retinal fundus image. They are the primary indicators of a glaucomatous condition. With the advent and success of deep learning for healthcare research, several approaches have been proposed for the segmentation of important features in retinal fundus images. We propose a novel approach for the simultaneous segmentation of the OD and OC using a residual encoder-decoder network (REDNet) based regional convolutional neural network (RCNN). The RED-RCNN is motivated by the Mask RCNN (MRCNN). Performance comparisons with state-of-the-art techniques and extensive validations on standard publicly available fundus image datasets show that RED-RCNN has superior performance compared with MRCNN. RED-RCNN achieves Sensitivity, Specificity, Accuracy, Precision, Dice, and Jaccard indices of 95.64%, 99.9%, 99.82%, 95.68%, 95.64%, and 91.65%, respectively, for OD segmentation, and 91.44%, 99.87%, 99.83%, 85.67%, 87.48%, and 78.09%, respectively, for OC segmentation. Further, we perform two-stage glaucoma severity grading using the cup-to-disc ratio (CDR) computed from the obtained OD/OC segmentation. The superior segmentation performance of RED-RCNN over MRCNN translates to higher accuracy in glaucoma severity grading.
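As a rough illustration of how a CDR can be derived from OD/OC segmentation masks, the sketch below computes a vertical cup-to-disc ratio from two binary masks. The abstract does not say which CDR variant (vertical, horizontal, or area-based) the authors use, nor the grading thresholds, so the vertical definition and the helper names `vertical_extent` and `cup_to_disc_ratio` are assumptions.

```python
import numpy as np

def vertical_extent(mask):
    """Height in pixels of the region marked True in a 2-D binary mask."""
    rows = np.flatnonzero(mask.any(axis=1))  # indices of rows containing the region
    return 0 if rows.size == 0 else int(rows[-1] - rows[0] + 1)

def cup_to_disc_ratio(disc_mask, cup_mask):
    """Vertical CDR: cup height divided by disc height (assumed definition)."""
    disc_height = vertical_extent(disc_mask)
    if disc_height == 0:
        raise ValueError("disc mask is empty; CDR is undefined")
    return vertical_extent(cup_mask) / disc_height


# Usage with synthetic masks: the disc spans 8 rows and the cup spans 4 rows.
disc = np.zeros((12, 12), dtype=bool)
cup = np.zeros((12, 12), dtype=bool)
disc[2:10, 2:10] = True
cup[4:8, 4:8] = True
cdr = cup_to_disc_ratio(disc, cup)
```

A severity grade would then be assigned by comparing `cdr` against clinically chosen thresholds, which are not given in the abstract and are therefore left out here.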

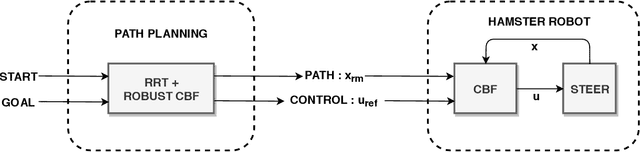

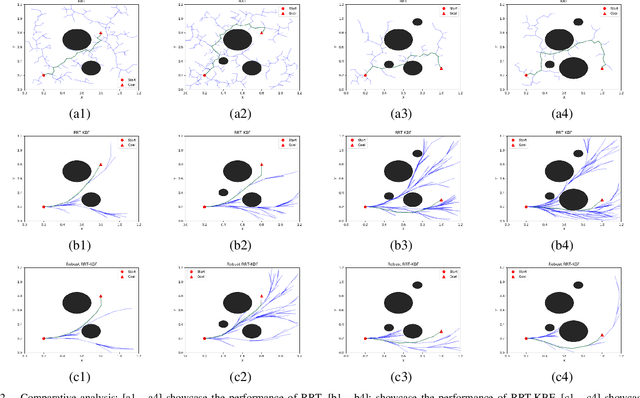

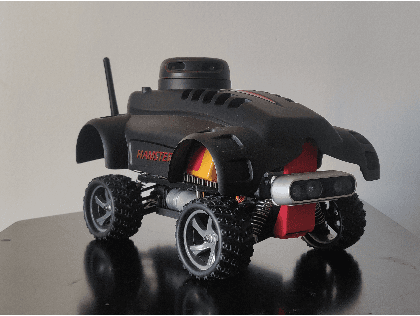

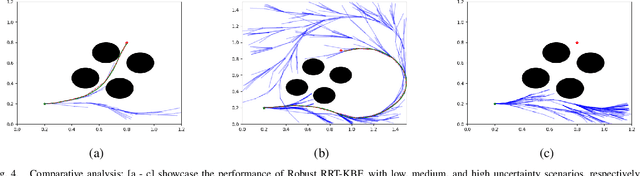

Safe and Robust Motion Planning for Dynamic Robotics via Control Barrier Functions

Nov 13, 2020

Abstract: Control Barrier Functions (CBFs) are widely used to enforce safety-critical constraints on nonlinear systems. Recently, these functions have been incorporated into path planning frameworks to design safety-critical path planners. However, these methods fall short of providing a realistic path when both run-time complexity and safety-critical constraints are considered. This paper proposes a novel motion planning approach that uses the Rapidly-exploring Random Trees (RRT) algorithm to enforce robust CBF and kinodynamic constraints, generating a safety-critical, obstacle-free path while accounting for model uncertainty in both robot dynamics and perception. Result analysis indicates that the proposed method outperforms various conventional RRT-based path planners, guaranteeing a safety-critical path with reduced computational overhead. We present numerical validation of the algorithm on the Hamster V7 robot car, a micro autonomous Unmanned Ground Vehicle, where it performs dynamic navigation on an obstacle-ridden path with various uncertainties in perception noise and robot dynamics.
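To make the CBF constraint concrete, here is a deliberately simplified sketch, not the planner described in the abstract. It assumes a single-integrator point robot (velocity is the control input), one circular obstacle, and a barrier function h(x) = ||x - c||^2 - r^2. It ignores the robust and kinodynamic terms the paper adds. The safety condition dh/dt + alpha * h(x) >= 0 is linear in the control, so the minimally modified safe control has a closed form. The name `cbf_safe_control` and all parameter values are hypothetical.

```python
import numpy as np

def cbf_safe_control(x, u_nominal, center, radius, alpha=1.0):
    """Return the control closest to `u_nominal` that satisfies the CBF condition.

    Assumes dynamics x_dot = u and barrier h(x) = ||x - center||^2 - radius^2.
    The condition dh/dt + alpha * h >= 0 becomes a @ u >= b with:
        a = 2 * (x - center),  b = -alpha * h(x)
    """
    x = np.asarray(x, dtype=float)
    u_nominal = np.asarray(u_nominal, dtype=float)
    offset = x - np.asarray(center, dtype=float)

    h = offset @ offset - radius ** 2  # h > 0 outside the obstacle
    a = 2.0 * offset                   # gradient of h with respect to x
    b = -alpha * h

    slack = a @ u_nominal - b
    if slack >= 0.0:
        return u_nominal               # nominal control is already safe

    # Project u_nominal onto the half-space {u : a @ u >= b}.
    return u_nominal - (slack / (a @ a)) * a


# Usage: the robot at (2, 0) is commanded straight toward a unit-radius obstacle.
u_safe = cbf_safe_control(x=[2.0, 0.0], u_nominal=[-2.0, 0.0],
                          center=[0.0, 0.0], radius=1.0)
u_free = cbf_safe_control(x=[2.0, 0.0], u_nominal=[1.0, 0.0],
                          center=[0.0, 0.0], radius=1.0)
```

In a sampling-based planner, a check of this kind could be applied when extending the tree toward a sampled state, so that only control inputs satisfying the barrier condition are used; how the paper integrates the robust variant into RRT is not detailed in the abstract.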
