Andrea Pagnani

Expectation propagation for the diluted Bayesian classifier

Sep 20, 2020

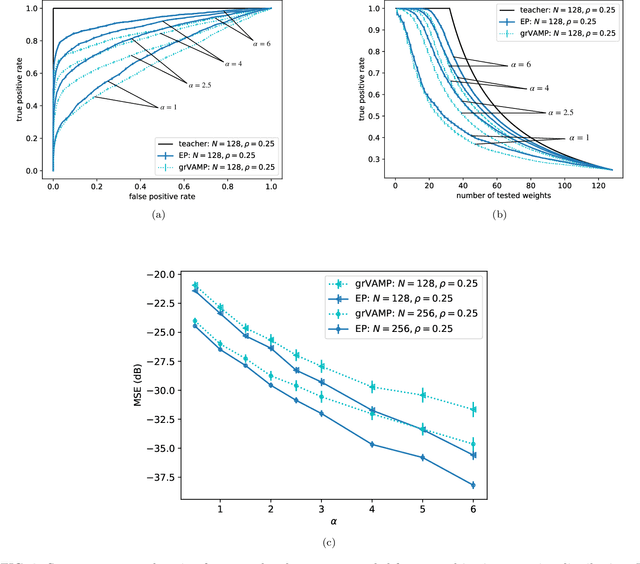

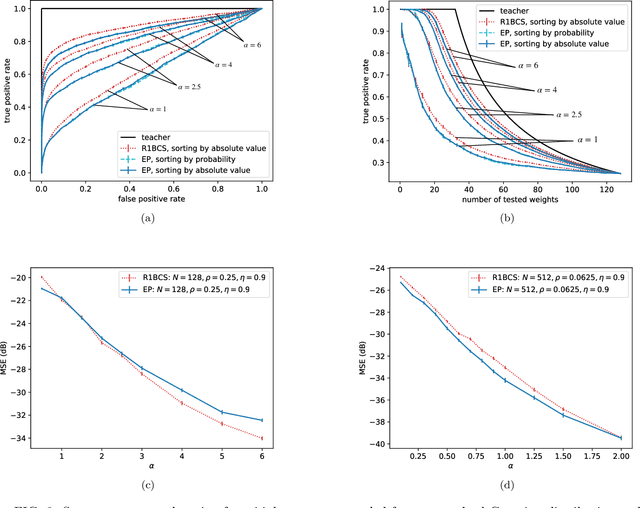

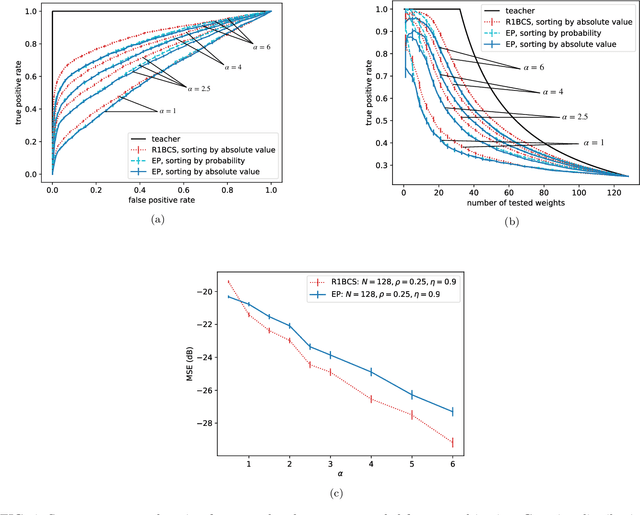

Abstract: Efficient feature selection from high-dimensional datasets is an important challenge in many data-driven fields of science and engineering. We introduce a statistical-mechanics-inspired strategy that addresses the problem of sparse feature selection in the context of binary classification by leveraging a computational scheme known as expectation propagation (EP). The algorithm is used to train a continuous-weights perceptron that learns a classification rule from a set of (possibly partly mislabeled) examples provided by a teacher perceptron with diluted continuous weights. We test the method in the Bayes optimal setting under a variety of conditions and compare it to other state-of-the-art algorithms based on message passing and on expectation maximization approximate inference schemes. Overall, our simulations show that EP is a robust and competitive algorithm in terms of variable selection properties, estimation accuracy and computational complexity, especially when the student perceptron is trained from correlated patterns that prevent other iterative methods from converging. Furthermore, our numerical tests demonstrate that the algorithm is capable of learning online, and quite accurately, the unknown values of prior parameters such as the dilution level of the weights of the teacher perceptron and the fraction of mislabeled examples. This is achieved by means of a simple maximum likelihood strategy that consists in minimizing the free energy associated with the EP algorithm.
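The teacher-student setup described in this abstract can be illustrated with a minimal data-generation sketch. It is not the authors' code and does not implement EP; it only builds a diluted teacher perceptron and a set of partly mislabeled examples. The function name `make_teacher_dataset` and the parameters `density` and `flip_prob` are hypothetical, and Gaussian patterns and weights are an assumption.

```python
import numpy as np

def make_teacher_dataset(n_features=50, n_examples=200,
                         density=0.25, flip_prob=0.05, seed=0):
    """Sample a diluted teacher perceptron and noisy binary labels.

    All names and default values here are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    # Diluted weights: each entry is nonzero with probability `density`.
    mask = rng.random(n_features) < density
    teacher = np.where(mask, rng.standard_normal(n_features), 0.0)
    # Uncorrelated Gaussian patterns (assumed; the paper also studies correlated ones).
    X = rng.standard_normal((n_examples, n_features))
    # Noise-free labels given by the sign of the teacher's output.
    clean = np.sign(X @ teacher)
    clean[clean == 0] = 1.0  # break ties so that labels stay in {-1, +1}
    # Mislabel each example independently with probability `flip_prob`.
    flips = rng.random(n_examples) < flip_prob
    labels = np.where(flips, -clean, clean)
    return X, labels, teacher, flips

X, labels, teacher, flips = make_teacher_dataset()
```

A student algorithm would receive only `X` and `labels`; `teacher` and `flips` are kept to measure how well the nonzero weights and the mislabeling fraction are recovered.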

Compressed sensing reconstruction using Expectation Propagation

Apr 10, 2019

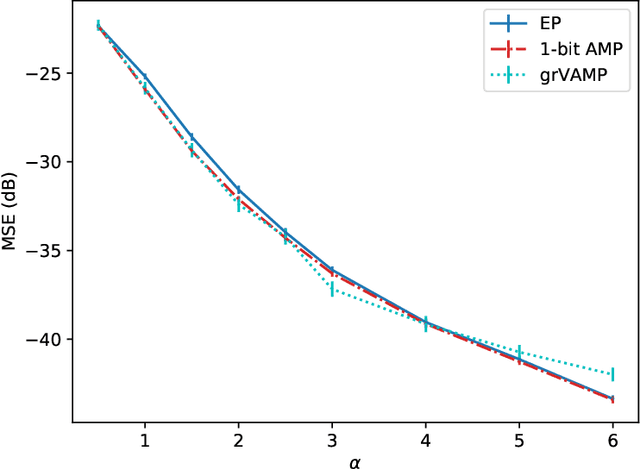

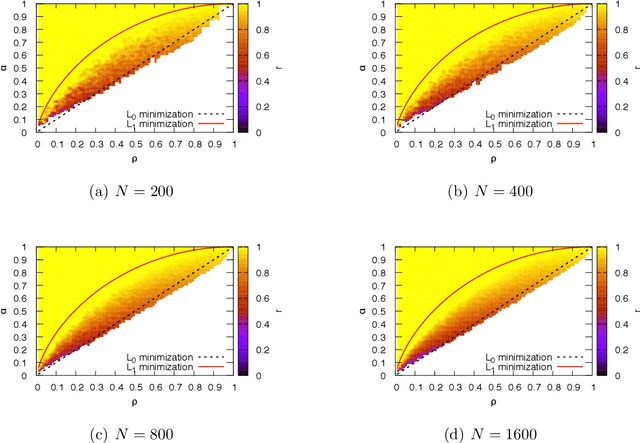

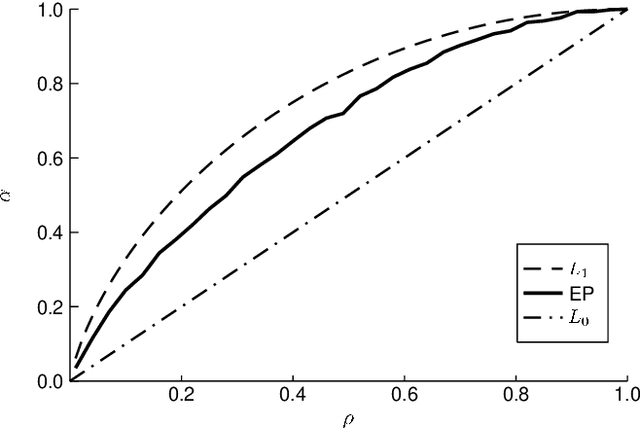

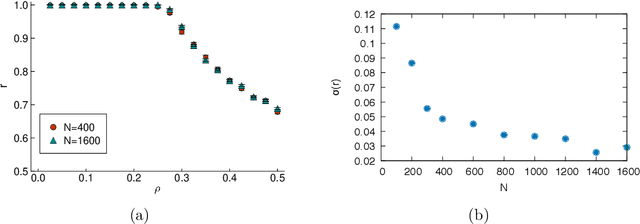

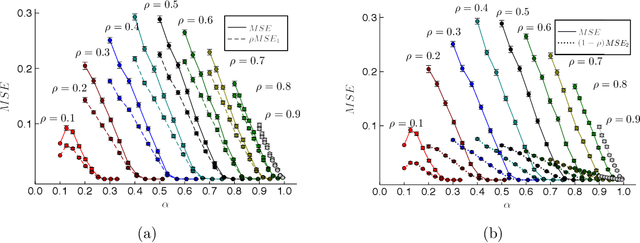

Abstract: Many interesting problems in fields ranging from telecommunications to computational biology can be formalized in terms of large underdetermined systems of linear equations with additional constraints or regularizers. One of the most studied, the Compressed Sensing (CS) problem, consists in finding the solution with the smallest number of non-zero components of a given system of linear equations $\boldsymbol y = \mathbf{F} \boldsymbol w$ for known measurement vector $\boldsymbol y$ and sensing matrix $\mathbf{F}$. Here, we address the compressed sensing problem within a Bayesian inference framework where the sparsity constraint is remapped into a singular prior distribution (called Spike-and-Slab or Bernoulli-Gauss). We attempt to solve the problem by computing marginal distributions via Expectation Propagation (EP), an iterative computational scheme originally developed in Statistical Physics. We show that this strategy is more accurate than the alternatives in solving instances of CS generated from statistically correlated measurement matrices. For computational strategies based on the Bayesian framework, such as variants of Belief Propagation, this is to be expected, as they implicitly rely on the hypothesis of statistical independence among the entries of the sensing matrix. Perhaps surprisingly, the method also uniformly outperforms all the other state-of-the-art methods in our tests.
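As a rough illustration of the problem statement above, the sketch below builds a small CS instance $\boldsymbol y = \mathbf{F} \boldsymbol w$ with a Bernoulli-Gauss signal and shows why the system is underdetermined: the minimum-norm solution reproduces $\boldsymbol y$ exactly but is generally not sparse. This is not the paper's EP method; the function name `make_cs_instance`, the parameter `rho`, and the i.i.d. Gaussian sensing matrix are assumptions made for the example.

```python
import numpy as np

def make_cs_instance(n=100, m=40, rho=0.1, seed=0):
    """Sample a sparse signal w and measurements y = F w with m < n.

    Names and defaults are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    # Bernoulli-Gauss (spike-and-slab) signal: nonzero with probability rho.
    support = rng.random(n) < rho
    w = np.where(support, rng.standard_normal(n), 0.0)
    # i.i.d. Gaussian sensing matrix (assumed; the paper focuses on correlated ones).
    F = rng.standard_normal((m, n)) / np.sqrt(n)
    y = F @ w
    return F, y, w

F, y, w = make_cs_instance()

# Minimum-norm solution of the underdetermined system via the pseudoinverse.
w_min_norm = np.linalg.pinv(F) @ y

print("measurements reproduced:", np.allclose(F @ w_min_norm, y))
print("nonzeros in true signal:", np.count_nonzero(w))
print("nonzeros in min-norm solution:", np.count_nonzero(w_min_norm))
```

Both `w` and `w_min_norm` satisfy the linear system, so the measurements alone cannot select the sparse one; that is the role the sparsity-promoting prior plays in the Bayesian formulation.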