Andre Ivan

Inha University

5D Light Field Synthesis from a Monocular Video

Dec 23, 2019

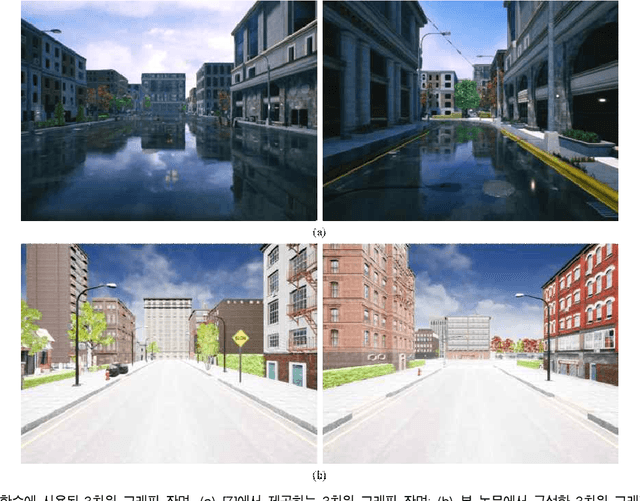

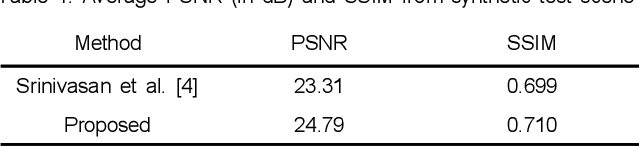

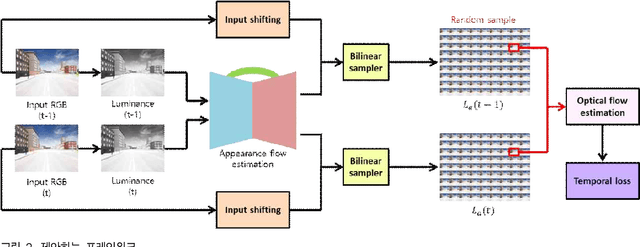

Abstract: Commercially available light field cameras have difficulty capturing 5D (4D + time) light field videos. They either capture only still light field images or are too expensive for ordinary users who want to capture light field video. To tackle this problem, we propose a deep learning-based method for synthesizing a light field video from a monocular video. Because no such light field dataset is available, we propose a new synthetic light field video dataset of photorealistic scenes rendered with the UnrealCV rendering engine. The proposed deep learning framework synthesizes a light field video with a full set (9$\times$9) of sub-aperture images from a normal monocular video. The proposed network consists of three sub-networks: feature extraction, 5D light field video synthesis, and temporal consistency refinement. Experimental results show that our model successfully synthesizes light field video for both synthetic and real scenes and outperforms previous frame-by-frame methods quantitatively and qualitatively. The synthesized light field can be used for conventional light field applications, namely depth estimation, viewpoint change, and refocusing.
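To make the refocusing application concrete, the following is a minimal sketch of classic shift-and-sum refocusing over a grid of sub-aperture images. It is not taken from the paper: the function name `refocus`, the `(U, V, H, W)` array layout, the `slope` parameter, and the use of integer shifts with wrap-around borders are all assumptions made for illustration.

```python
import numpy as np

def refocus(light_field, slope):
    """Shift-and-sum refocusing (illustrative sketch, not the authors' code).

    light_field: array of shape (U, V, H, W) holding grayscale
                 sub-aperture images on a U x V angular grid.
    slope:       shift in pixels per unit of angular offset from the
                 central view; it selects which depth plane is in focus.
    """
    U, V, H, W = light_field.shape
    cu, cv = (U - 1) / 2.0, (V - 1) / 2.0  # angular coordinates of the center view
    out = np.zeros((H, W), dtype=np.float64)
    for u in range(U):
        for v in range(V):
            # Integer shift proportional to the view's angular offset.
            dy = int(round(slope * (u - cu)))
            dx = int(round(slope * (v - cv)))
            # np.roll wraps around at the borders; a real pipeline would
            # more likely pad or crop instead (assumption for brevity).
            out += np.roll(light_field[u, v], shift=(dy, dx), axis=(0, 1))
    return out / (U * V)
```

With `slope = 0` the views are simply averaged; choosing a slope that cancels a scene point's disparity aligns that point across all views, so it appears sharp while points at other depths are blurred.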

Joint Spatial and Angular Super-Resolution from a Single Image

Nov 29, 2019

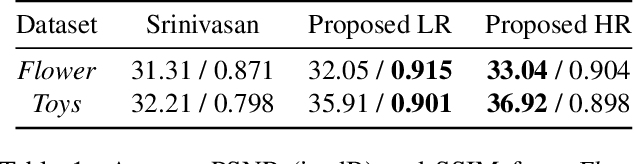

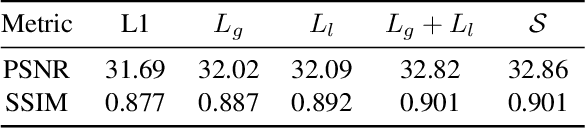

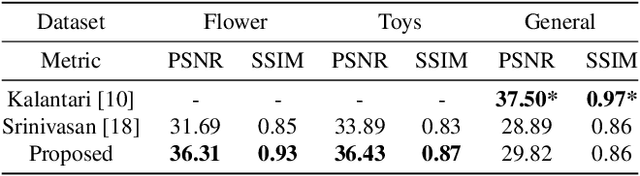

Abstract: Synthesizing a densely sampled light field from a single image is highly beneficial for many applications. Moreover, jointly solving the angular and spatial super-resolution problems introduces new possibilities in light field imaging. The conventional method relies on physical-based rendering and a secondary network to solve the angular super-resolution problem. In addition, a pixel-based loss limits the network's capability to infer scene geometry globally. In this paper, we show that both super-resolution problems can be solved jointly from a single image by proposing a single end-to-end deep neural network that does not require a physical-based approach. Two novel loss functions based on known light field domain knowledge are proposed to enable the network to preserve the spatio-angular consistency between sub-aperture images. Experimental results show that the proposed model successfully synthesizes a dense, high-resolution light field and outperforms the state-of-the-art method both quantitatively and qualitatively. The method can be generalized to arbitrary scenes rather than focusing on a particular subject. The synthesized light field can be used for various applications, such as depth estimation and refocusing.

Synthesizing a 4D Spatio-Angular Consistent Light Field from a Single Image

Mar 29, 2019

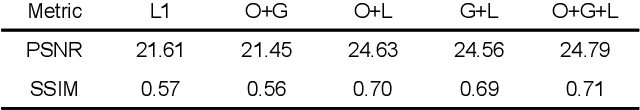

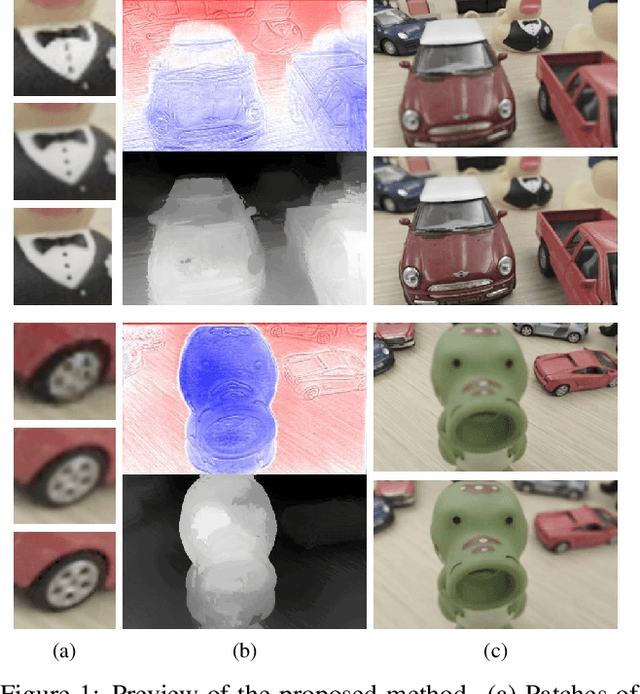

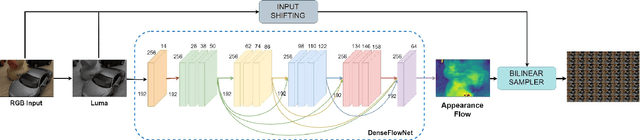

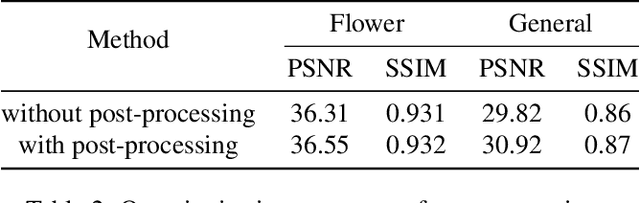

Abstract: Synthesizing a densely sampled light field from a single image is highly beneficial for many applications. The conventional method reconstructs a depth map and relies on physical-based rendering and a secondary network to improve the synthesized novel views. A simple pixel-based loss also limits the network by making it rely on pixel intensity cues rather than geometric reasoning. In this study, we show that a different geometric representation, namely appearance flow, can be used to synthesize a light field from a single image robustly and directly. We propose a single end-to-end deep neural network that requires neither a physical-based approach nor a post-processing subnetwork. Two novel loss functions based on known light field domain knowledge are presented to enable the network to preserve the spatio-angular consistency between sub-aperture images effectively. Experimental results show that the proposed model successfully synthesizes dense light fields and outperforms the previous model both qualitatively and quantitatively. The method can be generalized to arbitrary scenes rather than focusing on a particular class of object. The synthesized light field can be used for various applications, such as depth estimation and refocusing.
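As a rough illustration of what an appearance-flow representation implies, the sketch below warps a source image into a novel view by sampling it at per-pixel flow offsets with bilinear interpolation. In the paper the flow would be predicted by the network; here it is simply passed in as an array. The function name `warp_with_flow`, the `(dy, dx)` channel order, and the border clamping are assumptions for illustration, not the authors' implementation.

```python
import numpy as np

def warp_with_flow(image, flow):
    """Backward-warp a grayscale image with a per-pixel flow field.

    image: (H, W) source view.
    flow:  (H, W, 2) offsets (dy, dx); the output pixel at (y, x) is
           sampled from the source at (y + dy, x + dx).
    """
    H, W = image.shape
    ys, xs = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    # Sampling positions, clamped to the image borders (assumption).
    sy = np.clip(ys + flow[..., 0], 0, H - 1)
    sx = np.clip(xs + flow[..., 1], 0, W - 1)
    # Integer corners surrounding each sampling position.
    y0 = np.floor(sy).astype(int)
    x0 = np.floor(sx).astype(int)
    y1 = np.minimum(y0 + 1, H - 1)
    x1 = np.minimum(x0 + 1, W - 1)
    # Bilinear weights.
    wy = sy - y0
    wx = sx - x0
    top = (1 - wx) * image[y0, x0] + wx * image[y0, x1]
    bottom = (1 - wx) * image[y1, x0] + wx * image[y1, x1]
    return (1 - wy) * top + wy * bottom
```

Applying one such flow field per target view to the single input image would yield the full grid of sub-aperture images; because the bilinear sampling is differentiable, the same operation can sit inside an end-to-end trained network.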
