Ana Valdivia

Gender Trouble in Language Models: An Empirical Audit Guided by Gender Performativity Theory

May 20, 2025

Abstract: Language models encode and subsequently perpetuate harmful gendered stereotypes. Research has succeeded in mitigating some of these harms, e.g. by dissociating non-gendered terms such as occupations from gendered terms such as 'woman' and 'man'. This approach, however, remains superficial given that associations are only one form of prejudice through which gendered harms arise. Critical scholarship on gender, such as gender performativity theory, emphasizes how harms often arise from the construction of gender itself, such as conflating gender with biological sex. In language models, these issues could lead to the erasure of transgender and gender diverse identities and cause harms in downstream applications, from misgendering users to misdiagnosing patients based on wrong assumptions about their anatomy. For FAccT research on gendered harms to go beyond superficial linguistic associations, we advocate for a broader definition of 'gender bias' in language models. We operationalize insights on the construction of gender through language from gender studies literature and then empirically test how 16 language models of different architectures, training datasets, and model sizes encode gender. We find that language models tend to encode gender as a binary category tied to biological sex, and that gendered terms that do not neatly fall into one of these binary categories are erased and pathologized. Finally, we show that larger models, which achieve better results on performance benchmarks, learn stronger associations between gender and sex, further reinforcing a narrow understanding of gender. Our findings lead us to call for a re-evaluation of how gendered harms in language models are defined and addressed.

Characterizing and modeling harms from interactions with design patterns in AI interfaces

Apr 17, 2024

Abstract: The proliferation of applications using artificial intelligence (AI) systems has led to a growing number of users interacting with these systems through sophisticated interfaces. Human-computer interaction research has long shown that interfaces shape both user behavior and user perception of technical capabilities and risks. Yet, practitioners and researchers evaluating the social and ethical risks of AI systems tend to overlook the impact of anthropomorphic, deceptive, and immersive interfaces on human-AI interactions. Here, we argue that design features of interfaces with adaptive AI systems can have cascading impacts, driven by feedback loops, which extend beyond those previously considered. We first conduct a scoping review of AI interface designs and their negative impact to extract salient themes of potentially harmful design patterns in AI interfaces. Then, we propose Design-Enhanced Control of AI systems (DECAI), a conceptual model to structure and facilitate impact assessments of AI interface designs. DECAI draws on principles from control systems theory -- a theory for the analysis and design of dynamic physical systems -- to dissect the role of the interface in human-AI systems. Through two case studies on recommendation systems and conversational language model systems, we show how DECAI can be used to evaluate AI interface designs.

There is an elephant in the room: Towards a critique on the use of fairness in biometrics

Dec 24, 2021

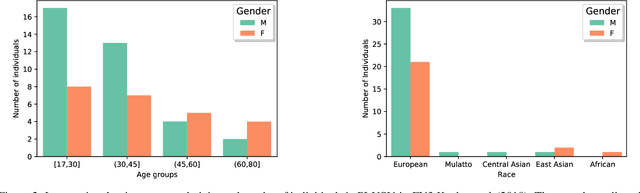

Abstract: In 2019, the UK's Immigration and Asylum Chamber of the Upper Tribunal dismissed an asylum appeal, basing the decision on the output of a biometric system, alongside other discrepancies. The fingerprints of the asylum seeker were found in a biometric database, which contradicted the appellant's account. The Tribunal found this evidence unequivocal and denied the asylum claim. Nowadays, the proliferation of biometric systems is shaping public debates around their political, social and ethical implications. Yet whilst concerns about the racialised use of this technology for migration control have been on the rise, investment in the biometrics industry and innovation is increasing considerably. Moreover, fairness has also recently been adopted in biometrics to mitigate bias and discrimination. However, algorithmic fairness cannot distribute justice in scenarios which are broken or whose intended purpose is to discriminate, such as biometrics deployed at the border. In this paper, we offer a critical reading of recent debates about biometric fairness and show its limitations, drawing on research on fairness in machine learning and critical border studies. Building on previous fairness demonstrations, we prove that biometric fairness criteria are mathematically mutually exclusive. Then, the paper moves on to illustrate empirically that a fair biometric system is not possible by reproducing experiments from previous works. Finally, we discuss the politics of fairness in biometrics by situating the debate at the border. We claim that bias and error rates have different impacts on citizens and asylum seekers. Fairness has overshadowed the elephant in the room of biometrics, focusing on the demographic biases and ethical discourses of algorithms rather than examining how these systems reproduce historical and political injustices.

How fair can we go in machine learning? Assessing the boundaries of fairness in decision trees

Jun 22, 2020

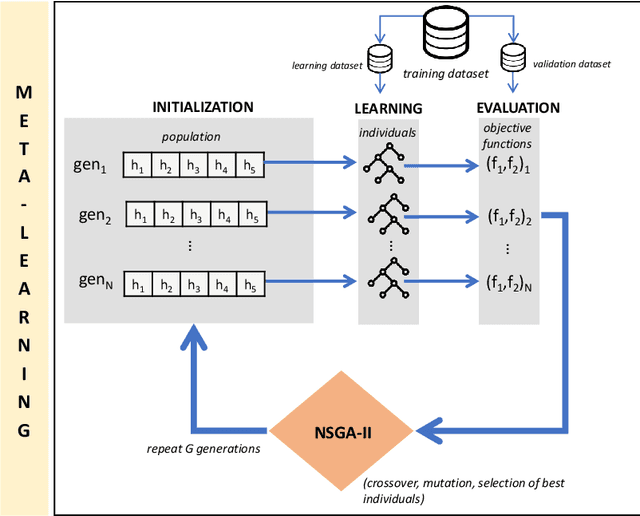

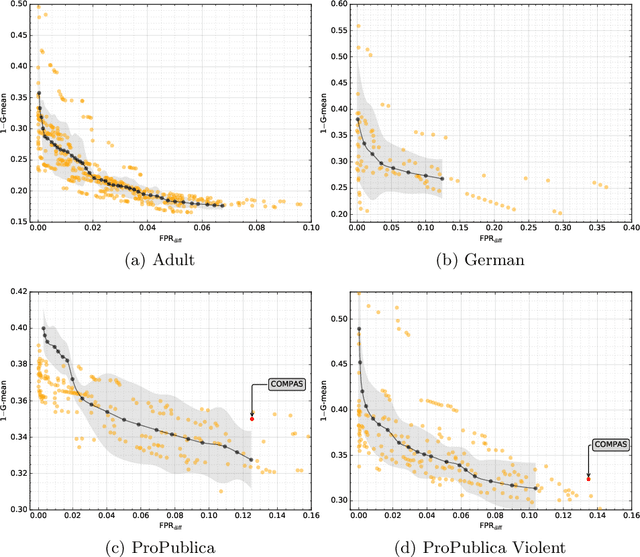

Abstract: Work on fair machine learning has focused on developing equitable algorithms that address discrimination against certain groups. Yet, many of these fairness-aware approaches aim to obtain a unique solution to the problem, which leads to a poor understanding of the statistical limits of bias mitigation interventions. We present the first methodology that allows exploring those limits within a multi-objective framework that seeks to optimize any measure of accuracy and fairness and provides a Pareto front with the best feasible solutions. In this work, we focus our study on decision tree classifiers since they are widely accepted in machine learning, are easy to interpret and can deal with non-numerical information naturally. We conclude experimentally that our method can optimize decision tree models, making them fairer at a small cost in classification error. We believe that our contribution will help stakeholders of sociotechnical systems to assess how far they can go in being fair and accurate, thus supporting enhanced decision making where machine learning is used.
