Amit Sangroya

DifCluE: Generating Counterfactual Explanations with Diffusion Autoencoders and modal clustering

Feb 17, 2025

Abstract: Generating multiple counterfactual explanations for different modes within a class presents a significant challenge, as these modes are distinct yet converge under the same classification. Diffusion probabilistic models (DPMs) have demonstrated a strong ability to capture the underlying modes of data distributions. In this paper, we harness the power of a Diffusion Autoencoder to generate multiple distinct counterfactual explanations. By clustering in the latent space, we uncover the directions corresponding to the different modes within a class, enabling the generation of diverse and meaningful counterfactuals. We introduce a novel methodology, DifCluE, which consistently identifies these modes and produces more reliable counterfactual explanations. Our experimental results demonstrate that DifCluE outperforms the current state-of-the-art in generating multiple counterfactual explanations, offering a significant advancement in model interpretability.
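The idea of clustering in a latent space to recover one direction per mode can be illustrated with a small sketch. This is not the DifCluE implementation: the latent vectors are synthetic stand-ins for Diffusion Autoencoder codes, and the helper names (`kmeans`, `mode_directions`), the use of plain k-means, and the choice of "cluster centroid minus source-class mean" as the direction are all assumptions made for illustration.

```python
import numpy as np

def kmeans(points, k, iters=50):
    """Plain Lloyd's k-means with farthest-point initialisation."""
    # Deterministic init: start from the first point, then repeatedly
    # add the point farthest from all centroids chosen so far.
    centroids = [points[0]]
    for _ in range(k - 1):
        d = np.min([np.linalg.norm(points - c, axis=1) for c in centroids], axis=0)
        centroids.append(points[np.argmax(d)])
    centroids = np.array(centroids, dtype=float)

    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = points[labels == j]
            if len(members):
                centroids[j] = members.mean(axis=0)

    # Final assignment against the converged centroids.
    dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
    return centroids, dists.argmin(axis=1)

def mode_directions(source_latents, target_latents, k):
    """One unit direction per target-class mode (hypothetical helper)."""
    centroids, labels = kmeans(target_latents, k)
    origin = source_latents.mean(axis=0)
    directions = centroids - origin
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)
    return directions, labels

# Synthetic 8-dimensional "latents": one source class near the origin and
# a target class with two modes, displaced along axis 0 and axis 1.
rng = np.random.default_rng(0)
dim = 8
source = rng.normal(0.0, 0.3, size=(100, dim))
mode_a = rng.normal(0.0, 0.3, size=(100, dim)) + 4.0 * np.eye(dim)[0]
mode_b = rng.normal(0.0, 0.3, size=(100, dim)) + 4.0 * np.eye(dim)[1]
target = np.vstack([mode_a, mode_b])

directions, labels = mode_directions(source, target, k=2)

# Moving a source latent along each direction yields one candidate
# counterfactual latent per mode; decoding is out of scope here.
candidates = source[0] + 4.0 * directions
```

In a real pipeline the latents would come from the trained encoder and each candidate would be decoded and checked against the classifier; the step size of 4.0 is arbitrary and chosen only to match the synthetic data.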

Learning Latent Beliefs and Performing Epistemic Reasoning for Efficient and Meaningful Dialog Management

Nov 26, 2018

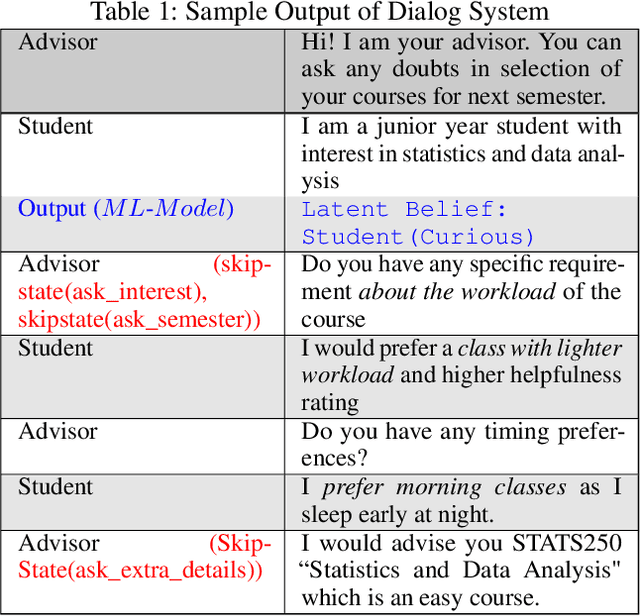

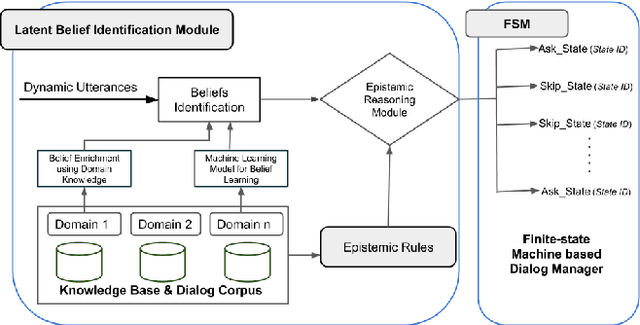

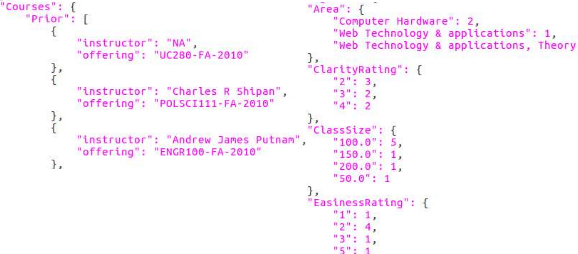

Abstract: Many dialogue management frameworks allow the system designer to directly define belief rules to implement an efficient dialog policy. Because these rules are directly defined, the components are said to be hand-crafted. As dialogues become more complex, the number of states, transitions, and policy decisions becomes very large. To facilitate the dialog policy design process, we propose an approach that automatically learns belief rules using supervised machine learning. We validate our ideas in the Student-Advisor conversation domain, where we extract latent beliefs such as whether the student is curious, confused, or neutral. Further, we also perform epistemic reasoning that helps to tailor the dialog to the student's emotional state and hence improves the overall effectiveness of the dialog system. Our latent belief identification approach achieves an accuracy of 87%, which results in efficient and meaningful dialog management.
