Amir Hesam Salavati

Convolutional Neural Associative Memories: Massive Capacity with Noise Tolerance

Jul 24, 2014

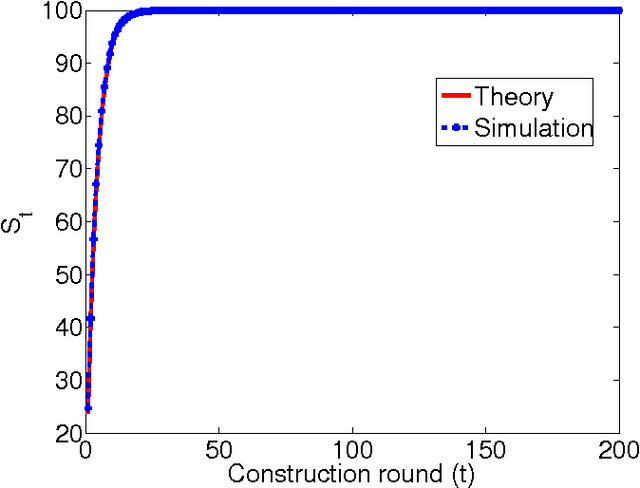

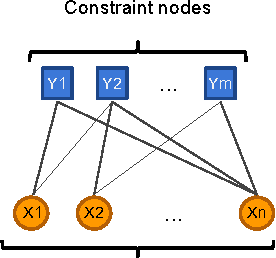

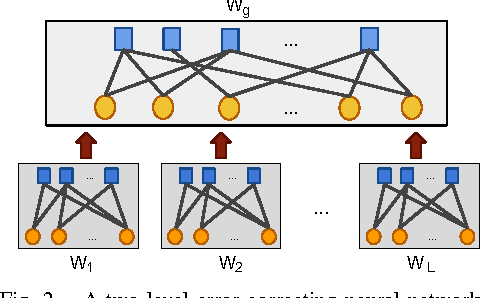

Abstract: The task of a neural associative memory is to retrieve a set of previously memorized patterns from their noisy versions using a network of neurons. An ideal network should be able to 1) learn a set of patterns as they arrive, 2) retrieve the correct patterns from noisy queries, and 3) maximize the pattern retrieval capacity while remaining reliable in responding to queries. The majority of work on neural associative memories has focused on designing networks capable of memorizing any set of randomly chosen patterns, at the expense of limiting the retrieval capacity. In this paper, we show that if we target memorizing only those patterns that have inherent redundancy (i.e., belong to a subspace), we can obtain all the aforementioned properties. This is in sharp contrast with previous work, which could only improve one or two aspects at the expense of the third. More specifically, we propose a framework based on a convolutional neural network along with an iterative algorithm that learns the redundancy among the patterns. The resulting network has a retrieval capacity that is exponential in the size of the network. Moreover, the asymptotic error correction performance of our network is linear in the size of the patterns. We then extend our approach to deal with patterns that lie approximately in a subspace. This extension allows us to memorize datasets containing natural patterns (e.g., images). Finally, we report experimental results on both synthetic and real datasets to support our claims.
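A rough counting argument, which is not the paper's own derivation, gives some intuition for why a subspace constraint is compatible with exponential capacity. Assume, purely for illustration, that every pattern has the form $x = Gu$, where $G \in \mathbb{Z}^{n \times k}$ has full column rank and $u \in \{0, \dots, Q-1\}^k$. The symbols $G$, $u$, $Q$, $k$, and $r$ are introduced here and do not come from the abstract.

$$
\bigl|\{\, Gu : u \in \{0,\dots,Q-1\}^k \,\}\bigr| = Q^{k},
\qquad
k = rn,\ 0 < r < 1
\;\Longrightarrow\;
Q^{k} = 2^{(r \log_2 Q)\, n}.
$$

Because $G$ has full column rank, distinct $u$ give distinct patterns, so the count is exactly $Q^k$. Each of these patterns satisfies the same $n - k$ linear constraints $w^\top x = 0$ for $w$ orthogonal to the columns of $G$, so the number of patterns grows exponentially in $n$ while the number of constraints to learn stays linear in $n$.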

Noise Facilitation in Associative Memories of Exponential Capacity

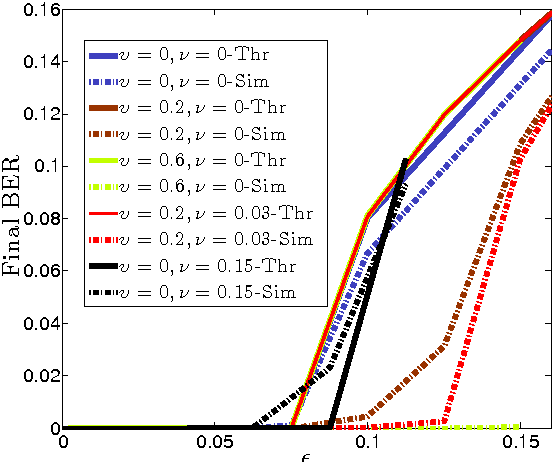

Mar 13, 2014

Abstract: Recent advances in associative memory design through structured pattern sets and graph-based inference algorithms have allowed reliable learning and recall of an exponential number of patterns. Although these designs correct external errors in recall, they assume neurons that compute noiselessly, in contrast to the highly variable neurons in brain regions thought to operate associatively, such as the hippocampus and olfactory cortex. Here we consider associative memories with noisy internal computations and analytically characterize performance. As long as the internal noise level is below a specified threshold, the error probability in the recall phase can be made exceedingly small. More surprisingly, we show that internal noise actually improves the performance of the recall phase while the pattern retrieval capacity remains intact, i.e., the number of stored patterns does not decrease with noise (up to a threshold). Computational experiments lend additional support to our theoretical analysis. This work suggests a functional benefit to noisy neurons in biological neuronal networks.

Coupled Neural Associative Memories

Aug 23, 2013

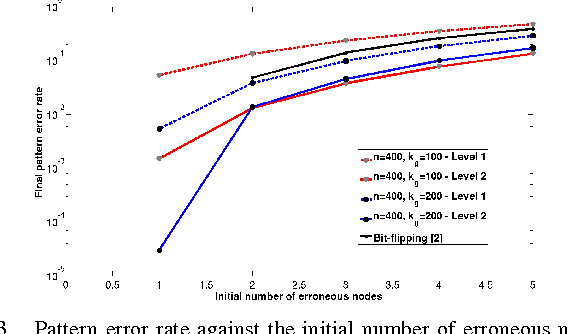

Abstract: We propose a novel architecture to design a neural associative memory that is capable of learning a large number of patterns and recalling them later in the presence of noise. It is based on dividing the neurons into local clusters and parallel planes, very similar to the architecture of the visual cortex of the macaque brain. The features that our proposed architecture shares with spatially-coupled codes enable us to show that the performance of such networks in eliminating noise is drastically better than that of previous approaches, while maintaining the ability to learn an exponentially large number of patterns. Previous work failed either to provide good performance during the recall phase or to offer large pattern retrieval (storage) capacities. We also present computational experiments that lend additional support to the theoretical analysis.

Neural Networks Built from Unreliable Components

May 31, 2013

Abstract: Recent advances in associative memory design through structured pattern sets and graph-based inference algorithms have allowed the reliable learning and retrieval of an exponential number of patterns. Both these and classical associative memories, however, have assumed internally noiseless computational nodes. This paper considers the setting in which internal computations are also noisy. Even if all components are noisy, the final error probability in recall can often be made exceedingly small, as we characterize. There is a threshold phenomenon. We also show how to optimize inference algorithm parameters given the statistical properties of the internal noise.

A Non-Binary Associative Memory with Exponential Pattern Retrieval Capacity and Iterative Learning: Extended Results

Feb 15, 2013

Abstract: We consider the problem of neural association for a network of non-binary neurons. Here, the task is to first memorize a set of patterns using a network of neurons whose states assume values from a finite number of integer levels. Later, the same network should be able to recall previously memorized patterns from their noisy versions. Prior work in this area considers storing a finite number of purely random patterns, and has shown that the pattern retrieval capacities (maximum number of patterns that can be memorized) scale only linearly with the number of neurons in the network. In our formulation of the problem, we concentrate on exploiting redundancy and internal structure of the patterns in order to improve the pattern retrieval capacity. Our first result shows that if the given patterns have a suitable linear-algebraic structure, i.e., comprise a subspace of the set of all possible patterns, then the pattern retrieval capacity is in fact exponential in terms of the number of neurons. The second result extends the previous finding to cases where the patterns have weak minor components, i.e., the smallest eigenvalues of the correlation matrix tend toward zero. We use these minor components (or the basis vectors of the pattern null space) to increase both the pattern retrieval capacity and the error correction capabilities. An iterative algorithm is proposed for the learning phase, and two simple neural update algorithms are presented for the recall phase. Using analytical results and simulations, we show that the proposed methods can tolerate a fair amount of errors in the input while being able to memorize an exponentially large number of patterns.
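The role of the pattern null space can be illustrated with a small sketch. It is not the iterative learning rule or the neural update algorithms from the paper: it swaps in a plain SVD to find the null-space basis, and it only detects an error rather than correcting it. The function names, the generator matrix `G = [I; A]`, and all sizes are hypothetical choices made for illustration.

```python
import numpy as np

def make_subspace_patterns(n, k, num_patterns, levels, rng):
    """Draw integer patterns x = G @ u that lie in a k-dimensional subspace of R^n."""
    # Hypothetical generator matrix G = [I; A]; the identity block guarantees
    # full column rank, and A has entries in {1, 2}.
    A = rng.integers(1, 3, size=(n - k, k))
    G = np.vstack([np.eye(k, dtype=int), A])
    U = rng.integers(0, levels, size=(k, num_patterns))  # integer "messages"
    return (G @ U).T                                     # shape (num_patterns, n)

def null_space_basis(patterns, tol=1e-8):
    """Return orthonormal rows w with w @ x ~ 0 for every stored pattern x."""
    # SVD stands in for the paper's iterative learning algorithm (assumption).
    _, s, vt = np.linalg.svd(patterns.astype(float), full_matrices=True)
    rank = int(np.sum(s > tol * s.max()))
    return vt[rank:]                                     # shape (n - rank, n)

def syndrome_norm(W, x):
    """Size of the constraint violation W @ x; near zero for stored patterns."""
    return float(np.linalg.norm(W @ x))

rng = np.random.default_rng(0)
X = make_subspace_patterns(n=20, k=5, num_patterns=200, levels=4, rng=rng)
W = null_space_basis(X)

clean = X[0]
noisy = clean.copy()
noisy[7] += 1  # a single +1 error on one neuron

print("constraints learned:", W.shape[0])
print("syndrome of stored pattern:", syndrome_norm(W, clean))
print("syndrome of noisy pattern: ", syndrome_norm(W, noisy))
```

A stored pattern satisfies every learned constraint, so its syndrome is zero up to floating-point error, while the corrupted pattern violates at least one constraint. A recall algorithm can use that violation as the signal that tells it which neurons to update.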

Multi-Level Error-Resilient Neural Networks with Learning

Jun 21, 2012

Abstract: The problem of neural network association is to retrieve a previously memorized pattern from its noisy version using a network of neurons. An ideal neural network should combine three properties simultaneously: a learning algorithm, a large pattern retrieval capacity, and resilience against noise. Prior work in this area usually improves one or two aspects at the cost of the third. Our work takes a step forward in closing this gap. More specifically, we show that by imposing natural constraints on the set of patterns to be learned, we can drastically improve the retrieval capacity of our neural network. Moreover, we devise a learning algorithm whose role is to learn those patterns satisfying the above-mentioned constraints. Finally, we show that our neural network can cope with a fair amount of noise.
