Amar Halilovic

Proceedings of 1st Workshop on Advancing Artificial Intelligence through Theory of Mind

Apr 28, 2025

Abstract:This volume includes a selection of papers presented at the Workshop on Advancing Artificial Intelligence through Theory of Mind held at AAAI 2025 in Philadelphia US on 3rd March 2025. The purpose of this volume is to provide an open access and curated anthology for the ToM and AI research community.

Towards Probabilistic Planning of Explanations for Robot Navigation

Oct 26, 2024

Abstract:In robotics, ensuring that autonomous systems are comprehensible and accountable to users is essential for effective human-robot interaction. This paper introduces a novel approach that integrates user-centered design principles directly into the core of robot path planning processes. We propose a probabilistic framework for automated planning of explanations for robot navigation, where the preferences of different users regarding explanations are probabilistically modeled to tailor the stochasticity of the real-world human-robot interaction and the communication of decisions of the robot and its actions towards humans. This approach aims to enhance the transparency of robot path planning and adapt to diverse user explanation needs by anticipating the types of explanations that will satisfy individual users.

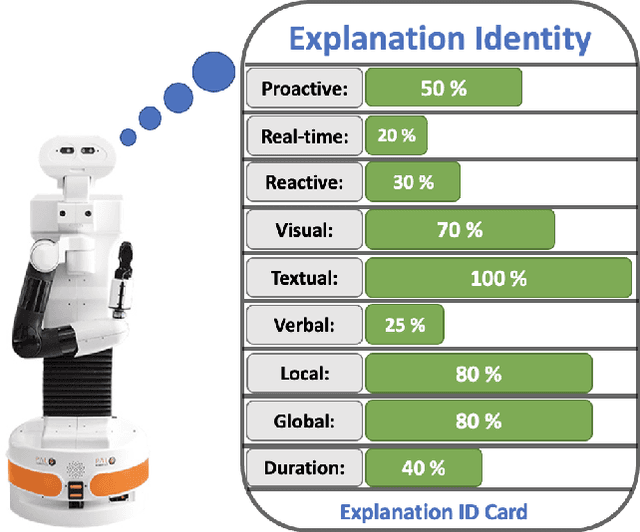

Robot Explanation Identity

May 22, 2024

Abstract:To bring robots into human everyday life, their capacity for social interaction must increase. One way for robots to acquire social skills is by assigning them the concept of identity. This research focuses on the concept of \textit{Explanation Identity} within the broader context of robots' roles in society, particularly their ability to interact socially and explain decisions. Explanation Identity refers to the combination of characteristics and approaches robots use to justify their actions to humans. Drawing from different technical and social disciplines, we introduce Explanation Identity as a multidisciplinary concept and discuss its importance in Human-Robot Interaction. Our theoretical framework highlights the necessity for robots to adapt their explanations to the user's context, demonstrating empathy and ethical integrity. This research emphasizes the dynamic nature of robot identity and guides the integration of explanation capabilities in social robots, aiming to improve user engagement and acceptance.

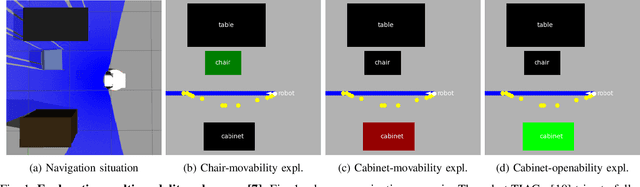

Understanding Path Planning Explanations

Nov 13, 2023

Abstract:Navigation is a must-have skill for any mobile robot. A core challenge in navigation is the need to account for an ample number of possible configurations of environment and navigation contexts. We claim that a mobile robot should be able to explain its navigational choices making its decisions understandable to humans. In this paper, we briefly present our approach to explaining navigational decisions of a robot through visual and textual explanations. We propose a user study to test the understandability and simplicity of the robot explanations and outline our further research agenda.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge