Alexandru G. Bardas

Distributed Energy Management and Demand Response in Smart Grids: A Multi-Agent Deep Reinforcement Learning Framework

Nov 29, 2022

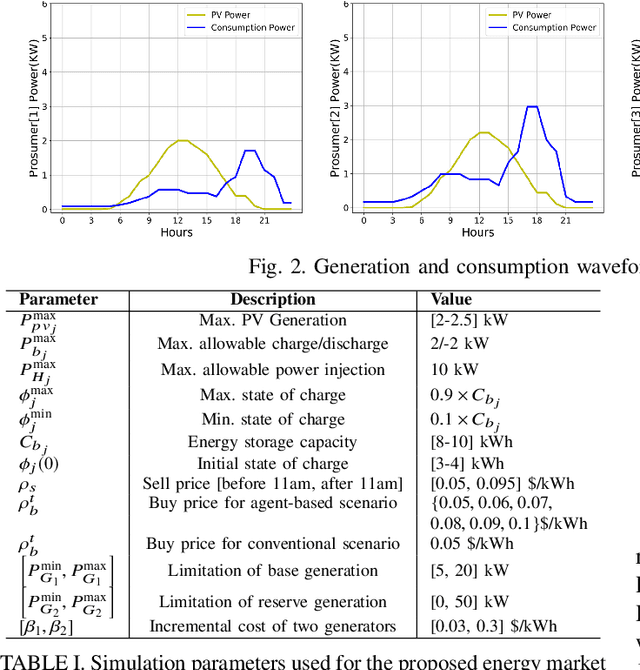

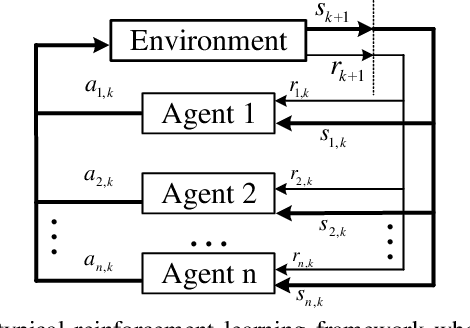

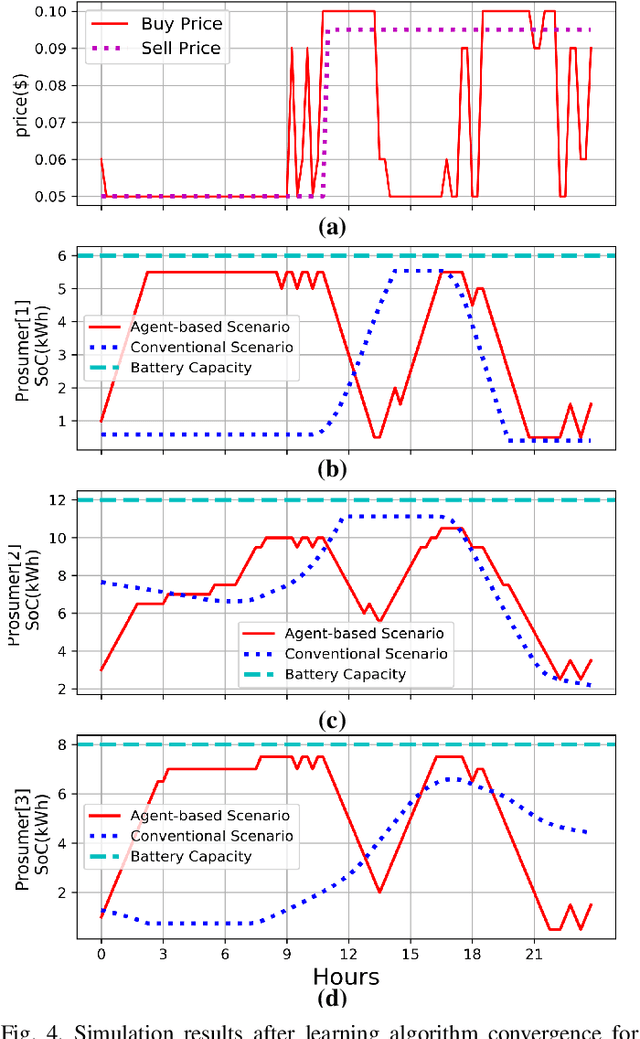

Abstract:This paper presents a multi-agent Deep Reinforcement Learning (DRL) framework for autonomous control and integration of renewable energy resources into smart power grid systems. In particular, the proposed framework jointly considers demand response (DR) and distributed energy management (DEM) for residential end-users. DR has a widely recognized potential for improving power grid stability and reliability, while at the same time reducing end-users energy bills. However, the conventional DR techniques come with several shortcomings, such as the inability to handle operational uncertainties while incurring end-user disutility, which prevents widespread adoption in real-world applications. The proposed framework addresses these shortcomings by implementing DR and DEM based on real-time pricing strategy that is achieved using deep reinforcement learning. Furthermore, this framework enables the power grid service provider to leverage distributed energy resources (i.e., PV rooftop panels and battery storage) as dispatchable assets to support the smart grid during peak hours, thus achieving management of distributed energy resources. Simulation results based on the Deep Q-Network (DQN) demonstrate significant improvements of the 24-hour accumulative profit for both prosumers and the power grid service provider, as well as major reductions in the utilization of the power grid reserve generators.

A Multi-Agent Deep Reinforcement Learning Approach for a Distributed Energy Marketplace in Smart Grids

Sep 23, 2020

Abstract:This paper presents a Reinforcement Learning (RL) based energy market for a prosumer dominated microgrid. The proposed market model facilitates a real-time and demanddependent dynamic pricing environment, which reduces grid costs and improves the economic benefits for prosumers. Furthermore, this market model enables the grid operator to leverage prosumers storage capacity as a dispatchable asset for grid support applications. Simulation results based on the Deep QNetwork (DQN) framework demonstrate significant improvements of the 24-hour accumulative profit for both prosumers and the grid operator, as well as major reductions in grid reserve power utilization.

Demand Responsive Dynamic Pricing Framework for Prosumer Dominated Microgrids using Multiagent Reinforcement Learning

Sep 23, 2020

Abstract:Demand Response (DR) has a widely recognized potential for improving grid stability and reliability while reducing customers energy bills. However, the conventional DR techniques come with several shortcomings, such as inability to handle operational uncertainties and incurring customer disutility, impeding their wide spread adoption in real-world applications. This paper proposes a new multiagent Reinforcement Learning (RL) based decision-making environment for implementing a Real-Time Pricing (RTP) DR technique in a prosumer dominated microgrid. The proposed technique addresses several shortcomings common to traditional DR methods and provides significant economic benefits to the grid operator and prosumers. To show its better efficacy, the proposed DR method is compared to a baseline traditional operation scenario in a small-scale microgrid system. Finally, investigations on the use of prosumers energy storage capacity in this microgrid highlight the advantages of the proposed method in establishing a balanced market setup.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge