Alexander Rakowski

DCID: Deep Canonical Information Decomposition

Jun 27, 2023

Abstract: We consider the problem of identifying the signal shared between two one-dimensional target variables, in the presence of additional multivariate observations. Canonical Correlation Analysis (CCA)-based methods have traditionally been used to identify shared variables; however, they were designed for multivariate targets and offer only trivial solutions in the univariate case. In the context of Multi-Task Learning (MTL), various models were postulated to learn features that are sparse and shared across multiple tasks. However, these methods were typically evaluated by their predictive performance. To the best of our knowledge, no prior studies systematically evaluated models in terms of correctly recovering the shared signal. Here, we formalize the setting of univariate shared information retrieval, and propose ICM, an evaluation metric which can be used in the presence of ground-truth labels, quantifying three aspects of the learned shared features. We further propose Deep Canonical Information Decomposition (DCID), a simple yet effective approach for learning the shared variables. We benchmark the models on a range of scenarios on synthetic data with known ground truths and observe DCID outperforming the baselines in a wide range of settings. Finally, we demonstrate a real-life application of DCID on brain Magnetic Resonance Imaging (MRI) data, where we are able to extract more accurate predictors of changes in brain regions and obesity. The code for our experiments and the supplementary materials are available at https://github.com/alexrakowski/dcid
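
The remark that CCA offers only trivial solutions for univariate targets can be illustrated with a minimal sketch. It is not taken from the paper's code: the data-generating process and all names (`shared`, `y1`, `y2`, `pearson`) are assumptions made for illustration. With one-dimensional targets, the only freedom CCA has is a scalar weight per target, and Pearson correlation is invariant to nonzero rescaling up to sign, so every choice of weights yields the same canonical correlation and the "canonical variables" are just the targets themselves.

```python
import random

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5

# Hypothetical toy data: two univariate targets driven by one shared signal
# plus independent noise (an assumption, not the paper's benchmark).
rng = random.Random(0)
shared = [rng.gauss(0, 1) for _ in range(1000)]
y1 = [s + rng.gauss(0, 1) for s in shared]
y2 = [s + rng.gauss(0, 1) for s in shared]

base = pearson(y1, y2)

# In the univariate case, CCA can only choose scalar weights (a, b).
# Whatever the weights, |corr(a * y1, b * y2)| equals |corr(y1, y2)|.
weights = [(1.0, 1.0), (2.5, 0.3), (-4.0, 7.0), (-1.0, -1.0)]
scaled = {
    (a, b): pearson([a * v for v in y1], [b * v for v in y2])
    for a, b in weights
}

for (a, b), r in scaled.items():
    print(f"a={a:+.1f}, b={b:+.1f}: |corr| = {abs(r):.6f} (baseline {abs(base):.6f})")
```

Because the objective is flat over all nonzero weights, CCA on the targets alone cannot separate the shared signal from the target-specific noise, which is why the additional multivariate observations mentioned in the abstract are needed.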

Disentangling multiple scattering with deep learning: application to strain mapping from electron diffraction patterns

Feb 01, 2022

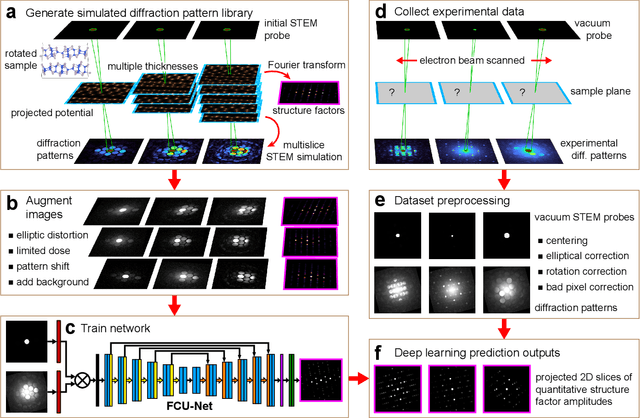

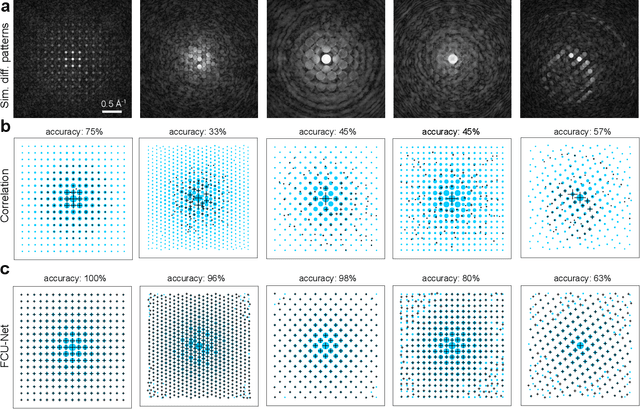

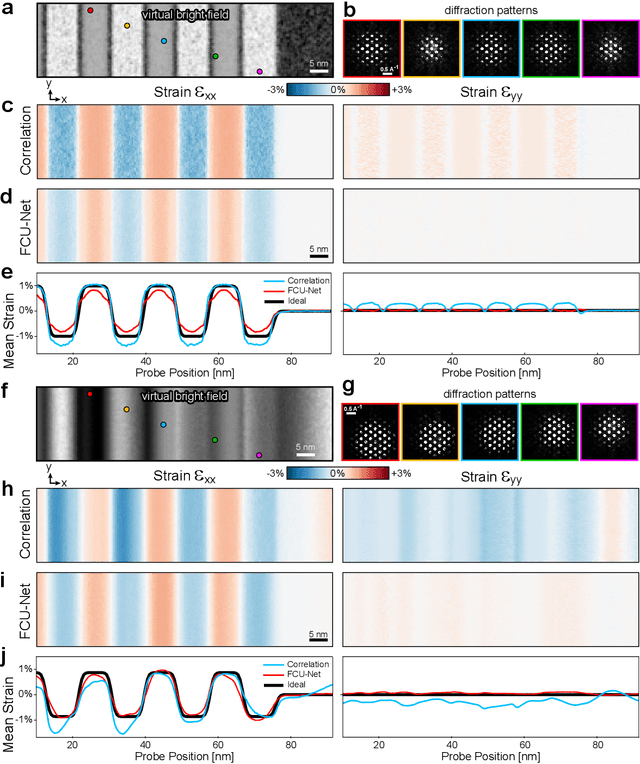

Abstract: Implementation of a fast, robust, and fully automated pipeline for crystal structure determination and underlying strain mapping for crystalline materials is important for many technological applications. Scanning electron nanodiffraction offers a procedure for identifying and collecting strain maps with good accuracy and high spatial resolution. However, the application of this technique is limited, particularly in thick samples where the electron beam can undergo multiple scattering, which introduces signal nonlinearities. Deep learning methods have the potential to invert these complex signals, but previous implementations are often trained only on specific crystal systems or a small subset of the crystal structure and microscope parameter phase space. In this study, we implement a Fourier-space, complex-valued deep neural network called FCU-Net to invert highly nonlinear electron diffraction patterns into the corresponding quantitative structure factor images. We trained the FCU-Net using over 200,000 unique simulated dynamical diffraction patterns, which include many different combinations of crystal structures, orientations, thicknesses, microscope parameters, and common experimental artifacts. We evaluated the trained FCU-Net model against simulated and experimental 4D-STEM diffraction datasets, where it substantially outperforms conventional analysis methods. Our simulated diffraction pattern library, implementation of FCU-Net, and trained model weights are freely available in open-source repositories and can be adapted to many different diffraction measurement problems.
