Alexander Koebler

LVLM-Aided Alignment of Task-Specific Vision Models

Dec 26, 2025

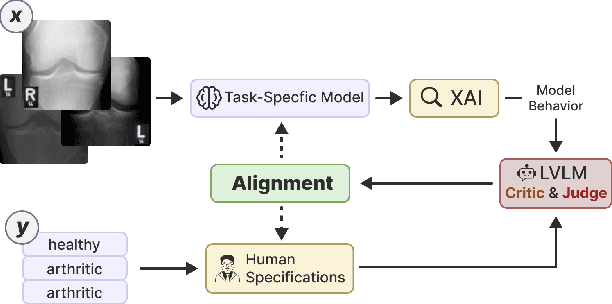

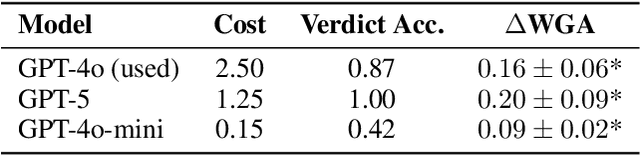

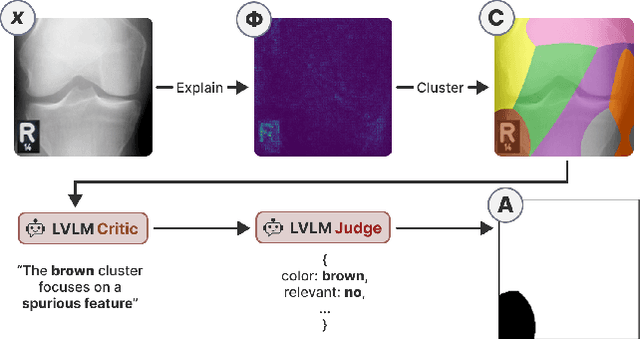

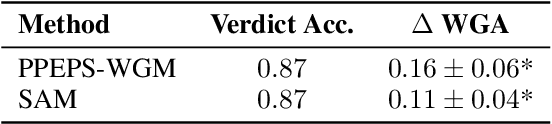

Abstract: In high-stakes domains, small task-specific vision models are crucial due to their low computational requirements and the availability of numerous methods to explain their results. However, these explanations often reveal that the models do not align well with human domain knowledge, relying instead on spurious correlations. This might result in brittle behavior once deployed in the real world. To address this issue, we introduce a novel and efficient method for aligning small task-specific vision models with human domain knowledge by leveraging the generalization capabilities of a Large Vision Language Model (LVLM). Our LVLM-Aided Visual Alignment (LVLM-VA) method provides a bidirectional interface that translates model behavior into natural language and maps human class-level specifications to image-level critiques, enabling effective interaction between domain experts and the model. Our method demonstrates substantial improvement in aligning model behavior with human specifications, as validated on both synthetic and real-world datasets. We show that it effectively reduces the model's dependence on spurious features and on group-specific biases, without requiring fine-grained feedback.

Incremental Uncertainty-aware Performance Monitoring with Active Labeling Intervention

May 11, 2025

Abstract: We study the problem of monitoring machine learning models under gradual distribution shifts, where circumstances change slowly over time, often leading to unnoticed yet significant declines in accuracy. To address this, we propose Incremental Uncertainty-aware Performance Monitoring (IUPM), a novel label-free method that estimates performance changes by modeling gradual shifts using optimal transport. In addition, IUPM quantifies the uncertainty in the performance prediction and introduces an active labeling procedure to restore a reliable estimate under a limited labeling budget. Our experiments show that IUPM outperforms existing performance estimation baselines in various gradual shift scenarios and that its uncertainty awareness guides label acquisition more effectively than other strategies.

Grasping Partially Occluded Objects Using Autoencoder-Based Point Cloud Inpainting

Mar 16, 2025

Abstract: Flexible industrial production systems will play a central role in the future of manufacturing due to higher product individualization and customization. A key component in such systems is the robotic grasping of known or unknown objects in random positions. Real-world applications often come with challenges that might not be considered in grasping solutions tested in simulation or lab settings. Partial occlusion of the target object is the most prominent. Examples of occlusion can be supporting structures in the camera's field of view, sensor imprecision, or parts occluding each other due to the production process. In all these cases, the resulting lack of information leads to shortcomings in calculating grasping points. In this paper, we present an algorithm to reconstruct the missing information. Our inpainting solution facilitates the real-world utilization of robust object matching approaches for grasping point calculation. We demonstrate the benefit of our solution by enabling an existing grasping system embedded in a real-world industrial application to handle occlusions in the input. With our solution, we drastically decrease the number of objects discarded by the process.

Explanatory Model Monitoring to Understand the Effects of Feature Shifts on Performance

Aug 24, 2024

Abstract: Monitoring and maintaining machine learning models are among the most critical challenges in translating recent advances in the field into real-world applications. However, current monitoring methods lack the capability to provide actionable insights that answer the question of why the performance of a particular model actually degraded. In this work, we propose a novel approach to explain the behavior of a black-box model under feature shifts by attributing an estimated performance change to interpretable input characteristics. We refer to our method, which combines concepts from Optimal Transport and Shapley Values, as Explanatory Performance Estimation (XPE). We analyze the underlying assumptions and demonstrate the superiority of our approach over several baselines on different data sets across various data modalities such as images, audio, and tabular data. We also indicate how the generated results can lead to valuable insights, enabling explanatory model monitoring by revealing potential root causes for model deterioration and guiding toward actionable countermeasures.

The Thousand Faces of Explainable AI Along the Machine Learning Life Cycle: Industrial Reality and Current State of Research

Oct 11, 2023

Abstract: In this paper, we investigate the practical relevance of explainable artificial intelligence (XAI) with a special focus on the producing industries and relate it to the current state of academic XAI research. Our findings are based on an extensive series of interviews regarding the role and applicability of XAI along the Machine Learning (ML) lifecycle in current industrial practice and its expected relevance in the future. The interviews were conducted among a great variety of roles and key stakeholders from different industry sectors. On top of that, we outline the state of XAI research by providing a concise review of the relevant literature. This enables us to provide an encompassing overview covering the opinions of the surveyed persons as well as the current state of academic research. By comparing our interview results with the current research approaches, we reveal several discrepancies. While a multitude of different XAI approaches exists, most of them are centered around the model evaluation phase and data scientists. Their versatile capabilities for other stages are currently either not sufficiently explored or not popular among practitioners. In line with existing work, our findings also confirm that more effort is needed to enable non-expert users to interpret and understand opaque AI models with existing methods and frameworks.
