Alexander Farhang

Investigating Generalization by Controlling Normalized Margin

May 08, 2022

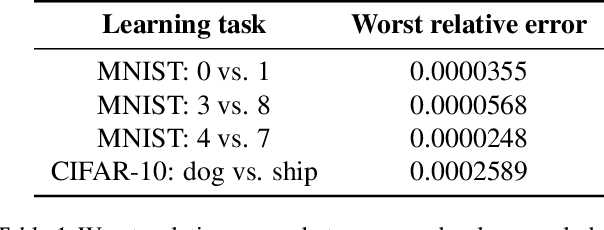

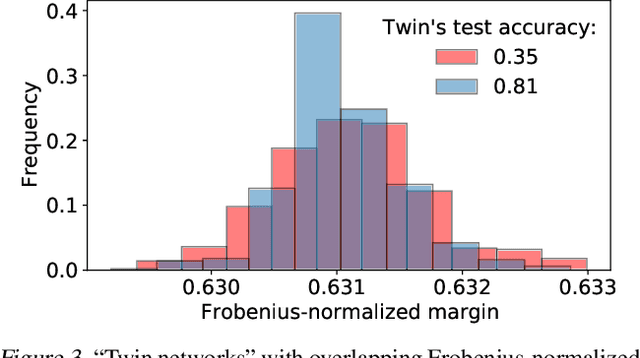

Abstract:Weight norm $\|w\|$ and margin $\gamma$ participate in learning theory via the normalized margin $\gamma/\|w\|$. Since standard neural net optimizers do not control normalized margin, it is hard to test whether this quantity causally relates to generalization. This paper designs a series of experimental studies that explicitly control normalized margin and thereby tackle two central questions. First: does normalized margin always have a causal effect on generalization? The paper finds that no -- networks can be produced where normalized margin has seemingly no relationship with generalization, counter to the theory of Bartlett et al. (2017). Second: does normalized margin ever have a causal effect on generalization? The paper finds that yes -- in a standard training setup, test performance closely tracks normalized margin. The paper suggests a Gaussian process model as a promising explanation for this behavior.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge