Alberto Castagna

A Conceptual Framework for AI-based Decision Systems in Critical Infrastructures

Apr 21, 2025

Abstract:The interaction between humans and AI in safety-critical systems presents a unique set of challenges that remain partially addressed by existing frameworks. These challenges stem from the complex interplay of requirements for transparency, trust, and explainability, coupled with the necessity for robust and safe decision-making. A framework that holistically integrates human and AI capabilities while addressing these concerns is notably required, bridging the critical gaps in designing, deploying, and maintaining safe and effective systems. This paper proposes a holistic conceptual framework for critical infrastructures by adopting an interdisciplinary approach. It integrates traditionally distinct fields such as mathematics, decision theory, computer science, philosophy, psychology, and cognitive engineering and draws on specialized engineering domains, particularly energy, mobility, and aeronautics. The flexibility in its adoption is also demonstrated through its instantiation on an already existing framework.

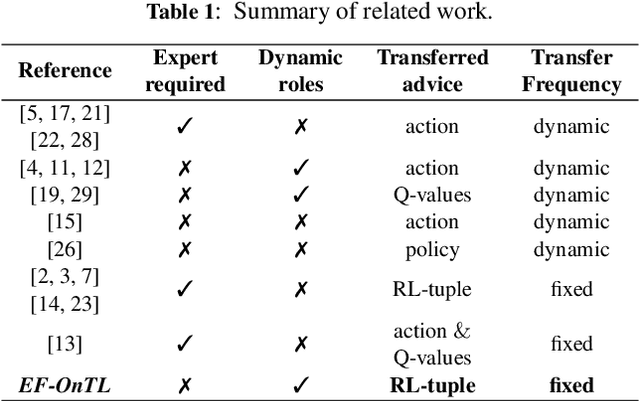

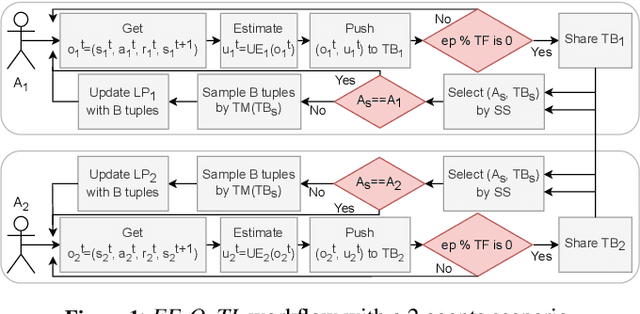

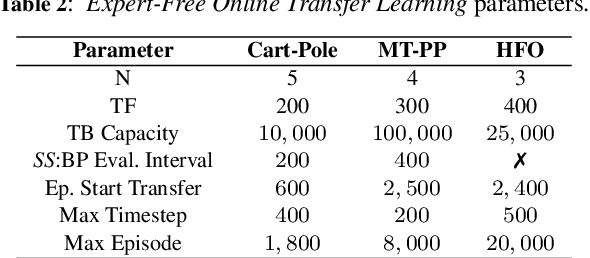

Expert-Free Online Transfer Learning in Multi-Agent Reinforcement Learning

Mar 02, 2023

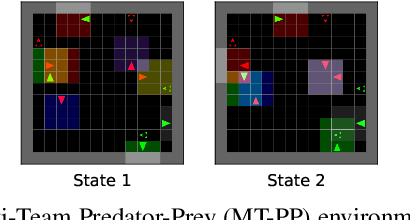

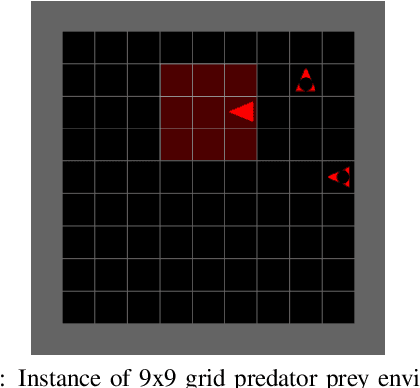

Abstract:Transfer learning in Reinforcement Learning (RL) has been widely studied to overcome training issues of Deep-RL, i.e., exploration cost, data availability and convergence time, by introducing a way to enhance training phase with external knowledge. Generally, knowledge is transferred from expert-agents to novices. While this fixes the issue for a novice agent, a good understanding of the task on expert agent is required for such transfer to be effective. As an alternative, in this paper we propose Expert-Free Online Transfer Learning (EF-OnTL), an algorithm that enables expert-free real-time dynamic transfer learning in multi-agent system. No dedicated expert exists, and transfer source agent and knowledge to be transferred are dynamically selected at each transfer step based on agents' performance and uncertainty. To improve uncertainty estimation, we also propose State Action Reward Next-State Random Network Distillation (sars-RND), an extension of RND that estimates uncertainty from RL agent-environment interaction. We demonstrate EF-OnTL effectiveness against a no-transfer scenario and advice-based baselines, with and without expert agents, in three benchmark tasks: Cart-Pole, a grid-based Multi-Team Predator-Prey (mt-pp) and Half Field Offense (HFO). Our results show that EF-OnTL achieve overall comparable performance when compared against advice-based baselines while not requiring any external input nor threshold tuning. EF-OnTL outperforms no-transfer with an improvement related to the complexity of the task addressed.

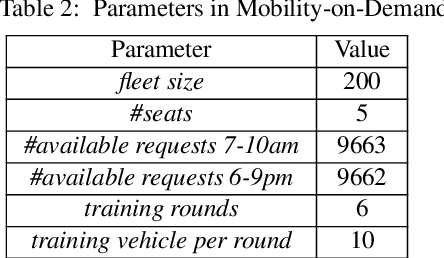

Multi-Agent Transfer Learning in Reinforcement Learning-Based Ride-Sharing Systems

Dec 01, 2021

Abstract:Reinforcement learning (RL) has been used in a range of simulated real-world tasks, e.g., sensor coordination, traffic light control, and on-demand mobility services. However, real world deployments are rare, as RL struggles with dynamic nature of real world environments, requiring time for learning a task and adapting to changes in the environment. Transfer Learning (TL) can help lower these adaptation times. In particular, there is a significant potential of applying TL in multi-agent RL systems, where multiple agents can share knowledge with each other, as well as with new agents that join the system. To obtain the most from inter-agent transfer, transfer roles (i.e., determining which agents act as sources and which as targets), as well as relevant transfer content parameters (e.g., transfer size) should be selected dynamically in each particular situation. As a first step towards fully dynamic transfers, in this paper we investigate the impact of TL transfer parameters with fixed source and target roles. Specifically, we label every agent-environment interaction with agent's epistemic confidence, and we filter the shared examples using varying threshold levels and sample sizes. We investigate impact of these parameters in two scenarios, a standard predator-prey RL benchmark and a simulation of a ride-sharing system with 200 vehicle agents and 10,000 ride-requests.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge