Akshaya Mishra

Deep Learning with Darwin: Evolutionary Synthesis of Deep Neural Networks

Feb 06, 2017

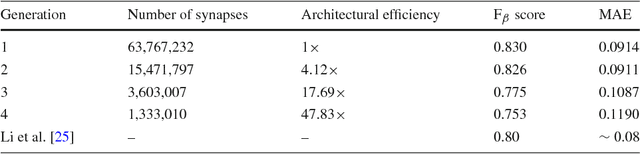

Abstract: Taking inspiration from biological evolution, we explore the idea of "Can deep neural networks evolve naturally over successive generations into highly efficient deep neural networks?" by introducing the notion of synthesizing new highly efficient, yet powerful deep neural networks over successive generations via an evolutionary process from ancestor deep neural networks. The architectural traits of ancestor deep neural networks are encoded using synaptic probability models, which can be viewed as the 'DNA' of these networks. New descendant networks with differing network architectures are synthesized based on these synaptic probability models from the ancestor networks and computational environmental factor models, in a random manner to mimic heredity, natural selection, and random mutation. These offspring networks are then trained into fully functional networks, like one would train a newborn, and have more efficient, more diverse network architectures than their ancestor networks, while achieving powerful modeling capabilities. Experimental results for the task of visual saliency demonstrated that the synthesized 'evolved' offspring networks can achieve state-of-the-art performance while having network architectures that are significantly more efficient (with a staggering $\sim$48-fold decrease in synapses by the fourth generation) compared to the original ancestor network.
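The synthesis step described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `synthesize_offspring`, the choice of normalized weight magnitude as the synaptic probability, and the scalar `env_factor` standing in for the environmental factor model are all assumptions made for illustration.

```python
import random


def synthesize_offspring(ancestor_weights, env_factor, rng):
    """Return a 0/1 mask choosing which ancestor synapses the offspring inherits.

    Assumption: a synapse's probability of being inherited is its weight
    magnitude normalized by the largest magnitude, scaled by env_factor.
    """
    if not ancestor_weights:
        return []
    max_mag = max(abs(w) for w in ancestor_weights) or 1.0
    mask = []
    for w in ancestor_weights:
        p_synapse = abs(w) / max_mag        # hypothetical synaptic probability ("DNA")
        keep_prob = env_factor * p_synapse  # environment restricts available resources
        mask.append(1 if rng.random() < keep_prob else 0)  # random, heredity-like draw
    return mask


rng = random.Random(0)
# Stand-in for a trained ancestor network: a flat list of synaptic weights.
weights = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

for generation in range(1, 5):
    mask = synthesize_offspring(weights, env_factor=0.5, rng=rng)
    # Keep only inherited synapses; a real pipeline would retrain the offspring here.
    weights = [w for w, m in zip(weights, mask) if m]
    print(f"generation {generation}: {len(weights)} synapses")
```

Each generation samples a sparser architecture from its ancestor, so the synapse count can only shrink. The retraining step that the abstract describes is omitted, so the reduction printed here says nothing about the reported 48-fold figure.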

Deep Quality: A Deep No-reference Quality Assessment System

Sep 22, 2016

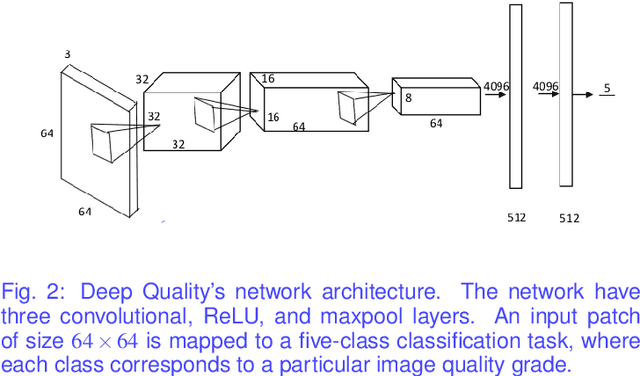

Abstract: Image quality assessment (IQA) continues to garner great interest in the research community, particularly given the tremendous rise in consumer video capture and streaming. Despite significant research effort in IQA in the past few decades, the area of no-reference image quality assessment remains a great challenge and is largely unsolved. In this paper, we propose a novel no-reference image quality assessment system called Deep Quality, which leverages the power of deep learning to model the complex relationship between visual content and the perceived quality. Deep Quality consists of a novel multi-scale deep convolutional neural network, trained to assess image quality based on training samples consisting of different distortions and degradations such as blur, Gaussian noise, and compression artifacts. Preliminary results using the CSIQ benchmark image quality dataset showed that Deep Quality was able to achieve strong quality prediction performance (89% patch-level and 98% image-level prediction accuracy), comparable to that of full-reference IQA methods.

Diverse Large-Scale ITS Dataset Created from Continuous Learning for Real-Time Vehicle Detection

Oct 07, 2015

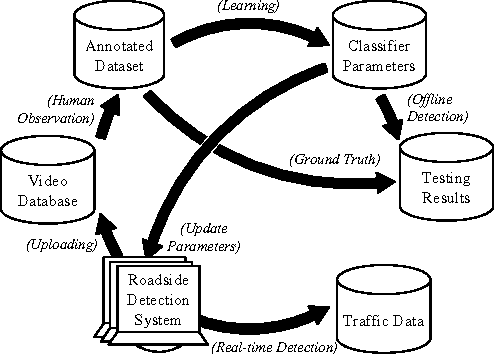

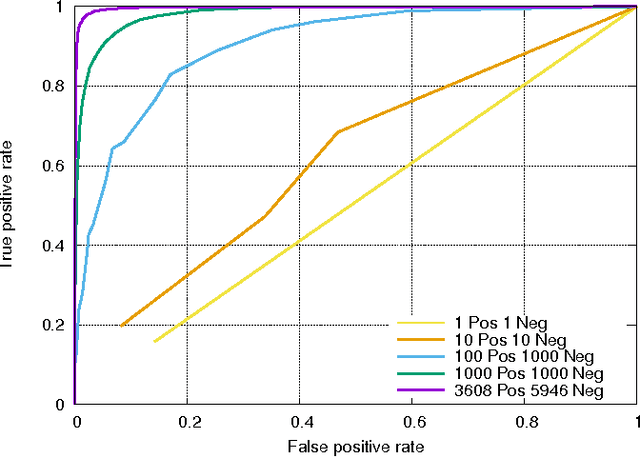

Abstract: In traffic engineering, vehicle detectors are trained on limited datasets, resulting in poor accuracy when deployed in real-world applications. Annotating large-scale, high-quality datasets is challenging. Typically, these datasets have limited diversity; they do not reflect the real-world operating environment. There is a need for a large-scale, cloud-based positive and negative mining (PNM) process and a large-scale learning and evaluation system for the application of traffic event detection. The proposed positive and negative mining process addresses the quality of crowd-sourced ground truth data through machine learning review and human feedback mechanisms. The proposed learning and evaluation system uses a distributed cloud computing framework to handle data-scaling issues associated with large numbers of samples and a high-dimensional feature space. The system is trained using AdaBoost on $1,000,000$ Haar-like features extracted from $70,000$ annotated video frames. The trained real-time vehicle detector achieves an accuracy of at least $95\%$ for $1/2$ and about $78\%$ for $19/20$ of the time when tested on approximately $7,500,000$ video frames. At the end of 2015, the dataset is expected to have over one billion annotated video frames.
