Ahmad El Ferdaoussi

Maximizing Information in Neuron Populations for Neuromorphic Spike Encoding

Dec 11, 2024

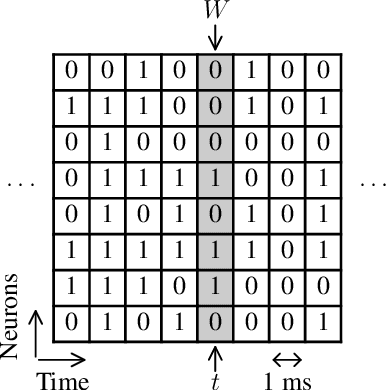

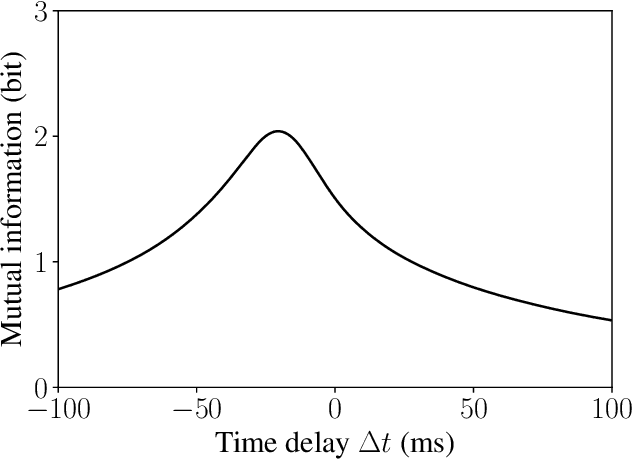

Abstract: Neuromorphic applications emulate the processing performed by the brain by using spikes as inputs instead of time-varying analog stimuli. These time-varying stimuli must therefore be encoded into spikes, which can cause substantial information loss. To alleviate this loss, some studies use population coding strategies, which encode more information by using a population of neurons rather than a single neuron. However, how to configure the encoding parameters of such a population remains an open research question. This work proposes an approach based on maximizing the mutual information between the signal and the spikes in the population of neurons. The proposed algorithm is inspired by the information-theoretic framework of Partial Information Decomposition. Two applications are presented: blood pressure pulse wave classification and neural action potential waveform classification. In both tasks, the data are encoded into spikes, and the encoding parameters of the neuron populations are tuned with the proposed algorithm to maximize the encoded information. The spikes are then classified, and performance is measured by classification accuracy. Two key results are reported. First, adding neurons to the population increases both mutual information and classification accuracy beyond what could be accounted for by each neuron separately, showing the usefulness of population coding strategies. Second, the classification accuracy obtained with the tuned parameters is near-optimal and closely follows the mutual information as more neurons are added to the population. Furthermore, the proposed approach significantly outperforms random parameter selection. These results are reproduced in both applications.
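The claim that a population can carry information beyond what each neuron carries separately can be illustrated with a toy calculation. The sketch below is not the paper's algorithm, which the abstract does not detail; it assumes a simple plug-in (histogram) estimator of mutual information on discrete data, and the names `mutual_information`, `labels`, `neuron_a`, and `neuron_b` as well as the data are hypothetical.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Plug-in estimate of I(X;Y) in bits from paired discrete samples.

    Assumption: a plain histogram estimator, chosen for illustration only.
    """
    if len(xs) != len(ys) or not xs:
        raise ValueError("xs and ys must be non-empty and of equal length")
    n = len(xs)
    joint = Counter(zip(xs, ys))  # counts of each (x, y) pair
    px = Counter(xs)              # marginal counts of x
    py = Counter(ys)              # marginal counts of y
    mi = 0.0
    for (x, y), c in joint.items():
        # p(x,y) * log2( p(x,y) / (p(x) * p(y)) ), written with raw counts
        mi += (c / n) * math.log2(c * n / (px[x] * py[y]))
    return mi


# Hypothetical toy data: the class label is the XOR of two binary "spike" responses.
neuron_a = [0, 0, 1, 1]
neuron_b = [0, 1, 0, 1]
labels = [0, 1, 1, 0]

# Each neuron alone tells us nothing about the label...
mi_a = mutual_information(labels, neuron_a)
mi_b = mutual_information(labels, neuron_b)
# ...but the joint response of the two-neuron population determines it fully.
mi_pop = mutual_information(labels, list(zip(neuron_a, neuron_b)))

print(mi_a, mi_b, mi_pop)
```

In this constructed case each neuron carries 0 bits on its own while the pair carries 1 bit, an extreme example of the kind of joint contribution that Partial Information Decomposition is designed to separate out. Real spike data would need a bias-aware estimator and far more samples.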

Evaluation of Neuromorphic Spike Encoding of Sound Using Information Theory

Feb 19, 2022

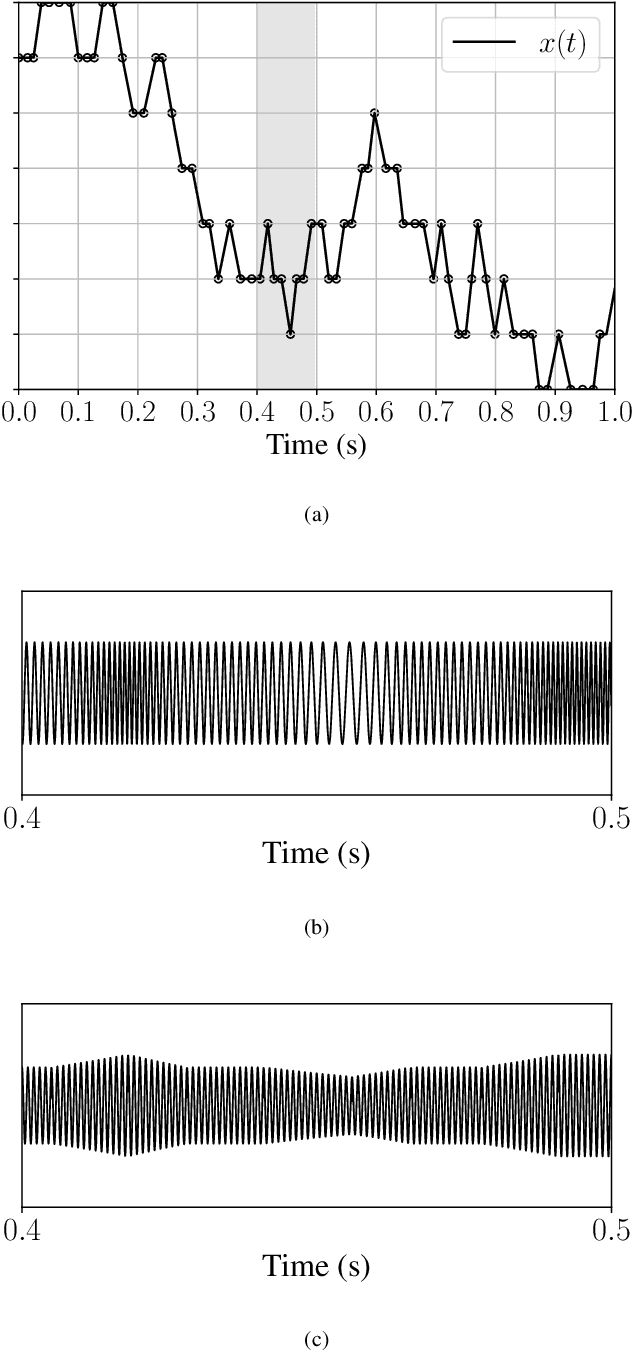

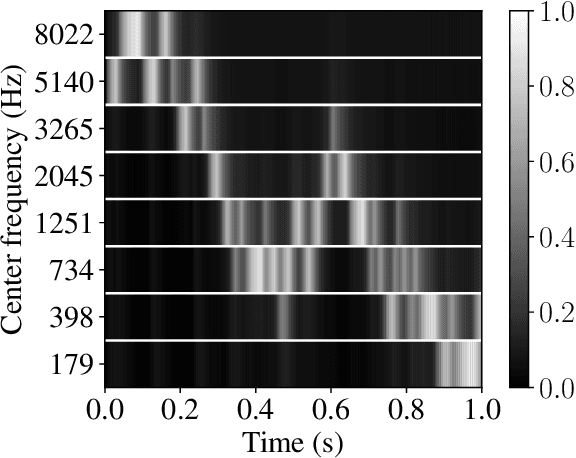

Abstract: The problem of spike encoding of sound consists of transforming a sound waveform into spikes. It is of interest in many domains, including the development of audio-based spiking neural networks, where it is the first and most crucial stage of processing. Many algorithms have been proposed to perform spike encoding of sound. However, a systematic approach to quantitatively evaluating their performance is currently lacking. We propose the use of an information-theoretic framework to solve this problem. Specifically, we evaluate the coding efficiency of four spike encoding algorithms on two tasks that consist of coding the fundamental characteristics of sound: frequency and amplitude. The algorithms investigated are Independent Spike Coding, Send-on-Delta coding, Ben's Spiker Algorithm, and Leaky Integrate-and-Fire coding. Using the tools of information theory, we estimate the information that the spikes carry about relevant aspects of an input stimulus. We find disparities in the coding efficiencies of the algorithms, with Leaky Integrate-and-Fire coding performing best. The information-theoretic analysis of their performance on these coding tasks provides insight into the encoding of richer and more complex sound stimuli.
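For readers unfamiliar with the encoders named above, here is a minimal sketch of one common variant of Send-on-Delta coding. It assumes that a signed event is emitted whenever the signal moves at least `delta` away from the last transmitted value, and that the reference is then reset to the current sample. The function name `send_on_delta`, the event format, and the sample data are hypothetical, and the paper's exact formulation may differ.

```python
def send_on_delta(signal, delta):
    """Encode a sampled signal as (sample_index, polarity) events.

    Assumption: an event fires when the signal differs from the last
    transmitted value by at least `delta`; polarity is +1 for an upward
    change and -1 for a downward change.
    """
    if delta <= 0:
        raise ValueError("delta must be positive")
    if not signal:
        return []
    events = []
    reference = signal[0]  # last transmitted value
    for i, value in enumerate(signal[1:], start=1):
        if value - reference >= delta:
            events.append((i, +1))
            reference = value
        elif reference - value >= delta:
            events.append((i, -1))
            reference = value
    return events


# Hypothetical sampled waveform with integer amplitudes.
samples = [0, 1, 3, 4, 1, 0]
print(send_on_delta(samples, delta=2))  # [(2, 1), (4, -1)]
```

A smaller `delta` yields more events and a finer representation of the waveform, while a larger one yields sparser spikes. This is the kind of trade-off an information-theoretic evaluation can quantify.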
