Adam Wolisz

Scheduling Out-of-Coverage Vehicular Communications Using Reinforcement Learning

Jul 13, 2022

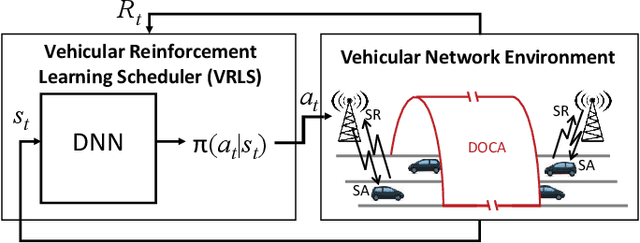

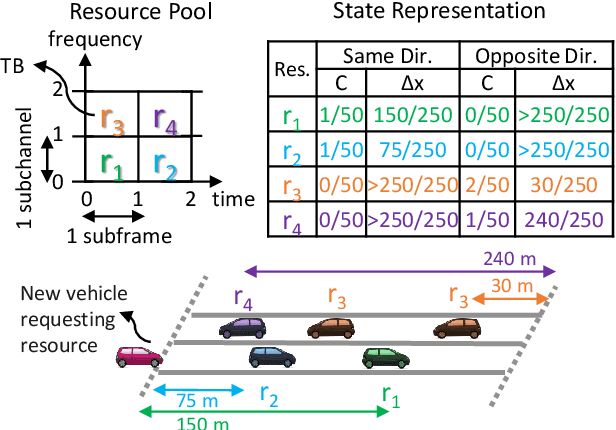

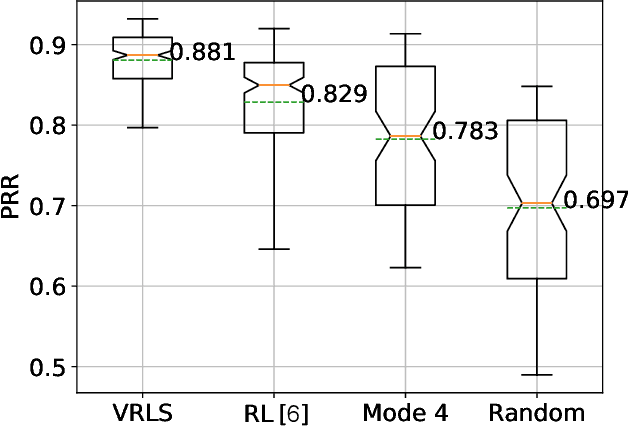

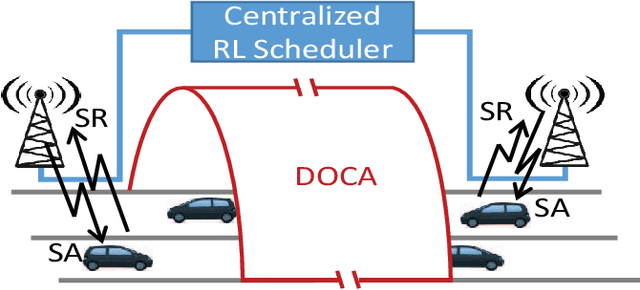

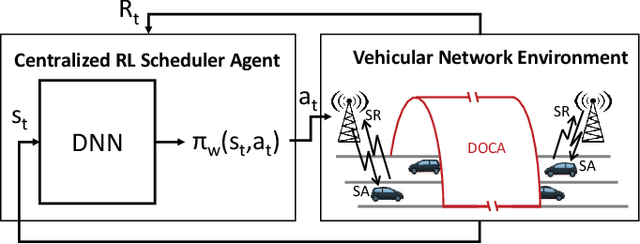

Abstract: The performance of vehicle-to-vehicle (V2V) communications depends strongly on the employed scheduling approach. While centralized network schedulers offer high V2V communication reliability, their operation is conventionally restricted to areas with full cellular network coverage. In contrast, out-of-cellular-coverage areas rely on comparatively inefficient distributed radio resource management. To exploit the benefits of the centralized approach for enhancing the reliability of V2V communications on roads lacking cellular coverage, we propose VRLS (Vehicular Reinforcement Learning Scheduler), a centralized scheduler that proactively assigns resources for out-of-coverage V2V communications *before* vehicles leave the cellular network coverage. By training in simulated vehicular environments, VRLS can learn a scheduling policy that is robust and adaptable to environmental changes, thus eliminating the need for targeted (re-)training in complex real-life environments. We evaluate the performance of VRLS under varying mobility, network load, wireless channel, and resource configurations. VRLS outperforms the state-of-the-art distributed scheduling algorithm in zones without cellular network coverage, halving the packet error rate in highly loaded conditions and achieving near-maximum reliability in low-load scenarios.

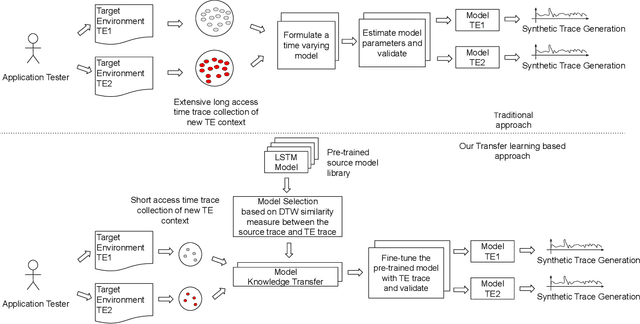

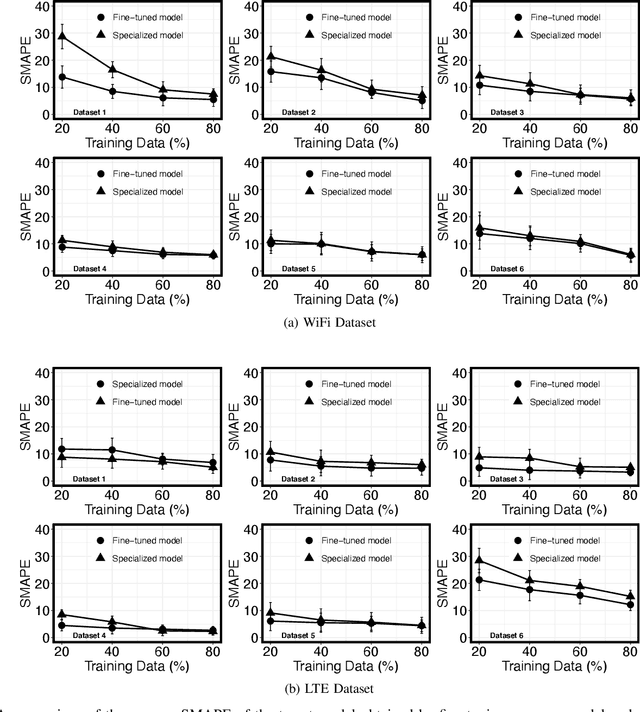

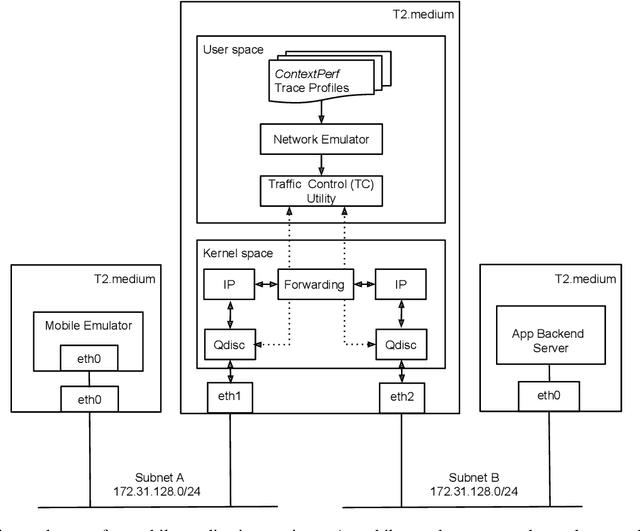

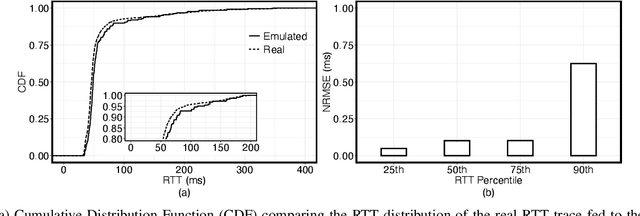

Generation of Realistic Cloud Access Times for Mobile Application Testing using Transfer Learning

Mar 16, 2021

Abstract: Network Quality of Service (QoS) metrics such as access time, bandwidth, and packet loss play an important role in determining the Quality of Experience (QoE) of mobile applications. Various factors, such as the Radio Resource Control (RRC) states, Mobile Network Operator (MNO) specific retransmission configurations, handovers triggered by user mobility, and the network load, can cause high variability in these QoS metrics on 4G/LTE and WiFi networks, which can be detrimental to the application QoE. Therefore, exposing a mobile application to realistic network QoS metrics is critical for testers attempting to predict its QoE. A viable approach is testing with synthetic traces. The main challenge in generating realistic synthetic traces is the diversity of environments and the lack of a wide range of real traces to calibrate the generators. In this paper, we describe a measurement-driven methodology based on transfer learning with Long Short-Term Memory (LSTM) neural networks to solve this problem. The methodology requires only a relatively short sample from the targeted environment to adapt the presented base model to new environments, thus simplifying synthetic trace generation. We demonstrate this for realistic WiFi and LTE cloud access time models adapted to diverse target environments with a trace size of just 6000 samples measured over a few tens of minutes. We show that synthetic traces generated from these models accurately reproduce application QoE metric distributions, including their outlier values.

VRLS: A Unified Reinforcement Learning Scheduler for Vehicle-to-Vehicle Communications

Jul 22, 2019

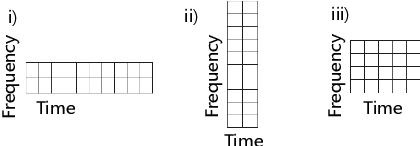

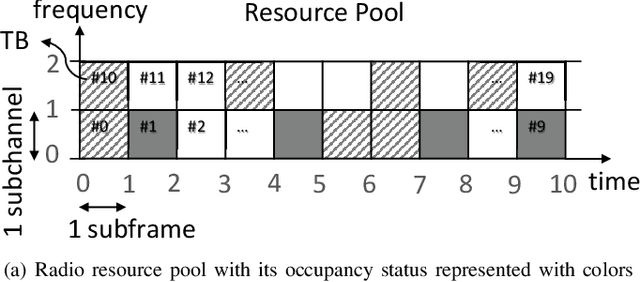

Abstract: Vehicle-to-vehicle (V2V) communications pose distinct challenges that need to be taken into account when scheduling radio resources. Although centralized schedulers (e.g., located on base stations) could deliver high scheduling performance, they cannot be employed in coverage gaps. To address the issue of reliable scheduling of V2V transmissions out of coverage, we propose the Vehicular Reinforcement Learning Scheduler (VRLS), a centralized scheduler that predictively assigns the resources for V2V communication while the vehicle is still in cellular network coverage. VRLS is a unified reinforcement learning (RL) solution, wherein the learning agent, the state representation, and the reward provided to the agent are applicable to different vehicular environments of interest (in terms of vehicular density, resource configuration, and wireless channel conditions). Such a unified solution eliminates the need to redesign the RL components for a different environment and facilitates transfer learning from one environment to another similar one. We evaluate the performance of VRLS and show its ability to avoid collisions and half-duplex errors, and to reuse resources better than state-of-the-art scheduling algorithms. We also show that a pre-trained VRLS agent can adapt to different V2V environments with limited retraining, thus enabling real-world deployment in different scenarios.
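The two error types named in this abstract can be illustrated with a small sketch. It is not taken from the paper: the function name `count_scheduling_errors`, the `(subframe, subchannel)` resource representation, and the explicit set of in-range vehicle pairs are all assumptions made for illustration. A collision is counted when two vehicles in range of each other use the same subframe and subchannel, and a half-duplex error when they transmit in the same subframe on different subchannels and therefore cannot hear each other.

```python
from itertools import combinations


def count_scheduling_errors(assignments, neighbours):
    """Count collisions and half-duplex errors in a resource assignment.

    assignments: dict mapping vehicle id -> (subframe, subchannel)  (assumed format)
    neighbours:  set of frozenset({u, v}) pairs that are within radio range
    """
    collisions = 0
    half_duplex = 0
    for u, v in combinations(sorted(assignments), 2):
        if frozenset((u, v)) not in neighbours:
            continue  # vehicles far apart may reuse the same resource
        (t_u, f_u), (t_v, f_v) = assignments[u], assignments[v]
        if t_u == t_v and f_u == f_v:
            collisions += 1   # same subframe and same subchannel
        elif t_u == t_v:
            half_duplex += 1  # same subframe, different subchannel


    return collisions, half_duplex


# Hypothetical example: E is out of range of everyone and reuses A's resource.
assignments = {"A": (0, 0), "B": (0, 0), "C": (0, 1), "D": (1, 0), "E": (0, 0)}
neighbours = {frozenset(p) for p in [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D")]}
print(count_scheduling_errors(assignments, neighbours))  # prints (1, 2)
```

In this toy view, resource reuse by distant vehicles (E above) costs nothing, which is why a scheduler that knows vehicle positions can pack resources more tightly than one that avoids all reuse.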

Reinforcement Learning Scheduler for Vehicle-to-Vehicle Communications Outside Coverage

Apr 29, 2019

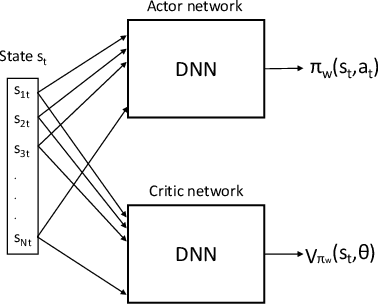

Abstract: Radio resources in vehicle-to-vehicle (V2V) communication can be scheduled either by a centralized scheduler residing in the network (e.g., a base station in the case of cellular systems) or by a distributed scheduler, where the resources are autonomously selected by the vehicles. The former approach yields considerably higher resource utilization when network coverage is uninterrupted. However, under intermittent coverage or out of coverage, vehicles lack input from the centralized scheduler and need to revert to distributed scheduling. Motivated by recent advances in reinforcement learning (RL), we investigate whether a centralized learning scheduler can be taught to efficiently pre-assign resources to vehicles for out-of-coverage V2V communication. Specifically, we use the actor-critic RL algorithm to train the centralized scheduler to provide non-interfering resources to vehicles before they enter the out-of-coverage area. Our initial results show that an RL-based scheduler can perform as well as the state-of-the-art distributed scheduler and often outperforms it. Furthermore, the learning process completes within a reasonable time (ranging from a few hundred to a few thousand epochs), thus making the RL-based scheduler a promising solution for V2V communications with intermittent network coverage.
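As a rough illustration of the actor-critic idea mentioned in this abstract, the sketch below trains a tabular one-step actor-critic agent on a deliberately tiny toy problem. Everything about the setup is an assumption for illustration and not the paper's design: the state is the resource already used by a single neighbouring vehicle, the action is the resource assigned to the new vehicle, and the reward is 1 when the two differ and 0 otherwise. Each episode is a single decision, so the TD error reduces to the reward minus the critic's value estimate.

```python
import math
import random


def softmax(prefs):
    """Numerically stable softmax over a list of action preferences."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_actor_critic(num_resources=3, episodes=2000,
                       lr_actor=0.5, lr_critic=0.1, seed=0):
    """Tabular one-step actor-critic on a toy resource-assignment task."""
    rng = random.Random(seed)
    # Actor: one preference per (state, action); critic: one value per state.
    theta = [[0.0] * num_resources for _ in range(num_resources)]
    value = [0.0] * num_resources
    for _ in range(episodes):
        s = rng.randrange(num_resources)        # resource used by the neighbour
        pi = softmax(theta[s])
        a = rng.choices(range(num_resources), weights=pi)[0]
        reward = 1.0 if a != s else 0.0         # 1 = non-interfering assignment
        td_error = reward - value[s]            # episode ends after one step
        value[s] += lr_critic * td_error        # critic update
        for b in range(num_resources):          # actor update (policy gradient)
            grad_log_pi = (1.0 if b == a else 0.0) - pi[b]
            theta[s][b] += lr_actor * td_error * grad_log_pi
    return theta, value


def greedy_policy(theta):
    """Pick the highest-preference resource for every state."""
    return [max(range(len(row)), key=row.__getitem__) for row in theta]


theta, value = train_actor_critic()
print(greedy_policy(theta))  # each entry should differ from its own index
```

A realistic scheduler would need a far richer state (positions, load, channel conditions) and function approximation instead of tables; the sketch only shows how the critic's value estimate turns raw rewards into the signed learning signal that shapes the actor's policy.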
