Abdelaziz Bensrhair

Mamba base PKD for efficient knowledge compression

Mar 03, 2025

Abstract: Deep neural networks (DNNs) have achieved remarkable success in various image processing tasks. However, their large size and computational complexity present significant challenges for deploying them in resource-constrained environments. This paper presents an approach that integrates the Mamba architecture into a Progressive Knowledge Distillation (PKD) process to reduce model complexity while maintaining accuracy in image classification tasks. The proposed framework distills a large teacher model into progressively smaller student models built from Mamba blocks. Each student model is trained using Selective-State-Space Models (S-SSM) within the Mamba blocks, which focus on the important aspects of the input while reducing computational complexity. The preliminary experiments use MNIST and CIFAR-10 to demonstrate the effectiveness of this approach. For MNIST, the teacher model achieves 98% accuracy. A set of seven student models, taken as a group, uses 63% of the teacher's FLOPs and approximates the teacher's performance with 98% accuracy. The weak student uses only 1% of the teacher's FLOPs and maintains 72% accuracy. Similarly, for CIFAR-10, the students achieve 1% less accuracy than the teacher, with the small student using 5% of the teacher's FLOPs to achieve 50% accuracy. These results confirm the flexibility and scalability of the Mamba architecture, which can be integrated into PKD and succeeds in finding students that act as weak learners. The framework provides a solution for deploying complex neural networks in real-time applications at reduced computational cost.
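The abstract does not spell out the loss used to train each student, so the following is only a minimal sketch of a common knowledge-distillation objective (temperature-softened KL divergence between teacher and student outputs, blended with cross-entropy on the true label). The function names `softmax` and `distillation_loss`, and the `temperature` and `alpha` defaults, are illustrative assumptions and are not taken from the paper.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits, softened by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=4.0, alpha=0.5):
    """Hypothetical distillation objective (an assumption, not the paper's loss).

    alpha weights the soft-target term (KL between softened teacher and
    student distributions); (1 - alpha) weights the hard-label cross-entropy.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # KL(teacher || student) on the temperature-softened distributions.
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    # Cross-entropy of the student's unsoftened prediction against the true class.
    ce = -math.log(softmax(student_logits)[label])
    # The T^2 factor keeps the soft-term gradient scale comparable across temperatures.
    return alpha * (temperature ** 2) * kl + (1 - alpha) * ce
```

In a progressive setting, one plausible use is to apply such a loss once per student, from the largest to the smallest, but how the paper chains its seven students is not stated in the abstract.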

TransRx-6G-V2X : Transformer Encoder-Based Deep Neural Receiver For Next Generation of Cellular Vehicular Communications

Aug 02, 2024

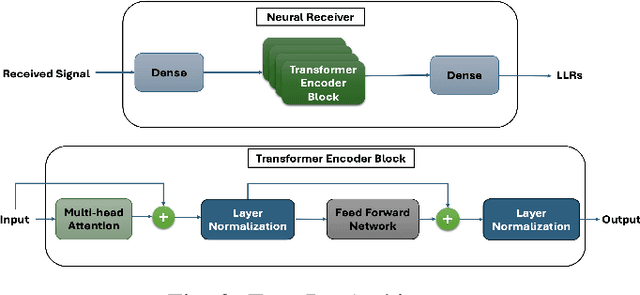

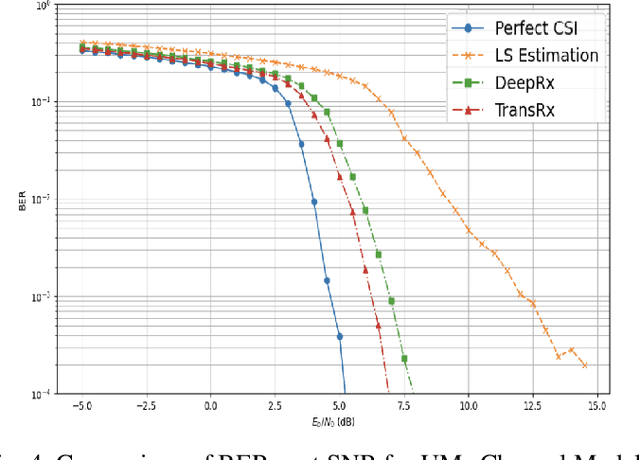

Abstract: End-to-end wireless communication is a new concept expected to be widely used in the physical layer of future wireless communication systems (6G). It involves replacing the transmitter and receiver block components with a deep neural network (DNN), with the aim of improving the efficiency of data transmission. This will support the transition of autonomous vehicles (AVs) from self-autonomy to full collaborative autonomy, which requires vehicular connectivity with high data throughput and minimal latency. In this article, we propose a novel neural network receiver based on the transformer architecture, named TransRx, designed for vehicle-to-network (V2N) communications. The TransRx system replaces the conventional receiver block components of traditional communication setups. We evaluated our proposed system across various scenarios using different parameter sets and velocities ranging from 0 to 120 km/h over Urban Macro-cell (UMa) channels as defined by 3GPP. The results demonstrate that TransRx outperforms state-of-the-art systems, achieving a 3.5 dB improvement in convergence to low Bit Error Rate (BER) compared to convolutional neural network (CNN)-based neural receivers, and an 8 dB improvement compared to traditional baseline receiver configurations. Furthermore, our proposed system exhibits robust generalization capabilities, making it suitable for deployment in large-scale environments.
