Where Does Trust Break Down? A Quantitative Trust Analysis of Deep Neural Networks via Trust Matrix and Conditional Trust Densities


Sep 30, 2020

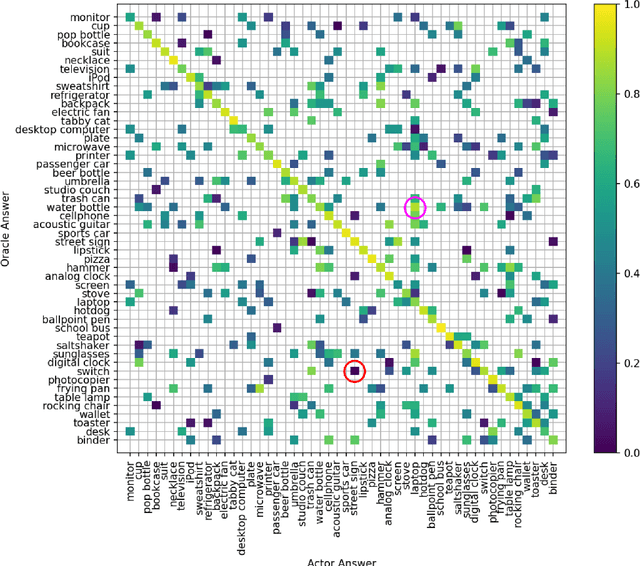

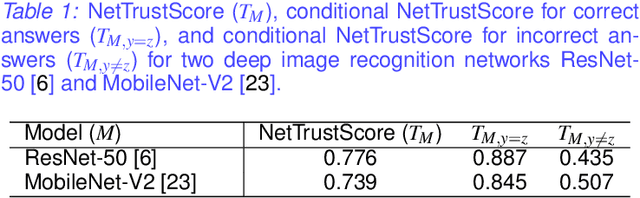

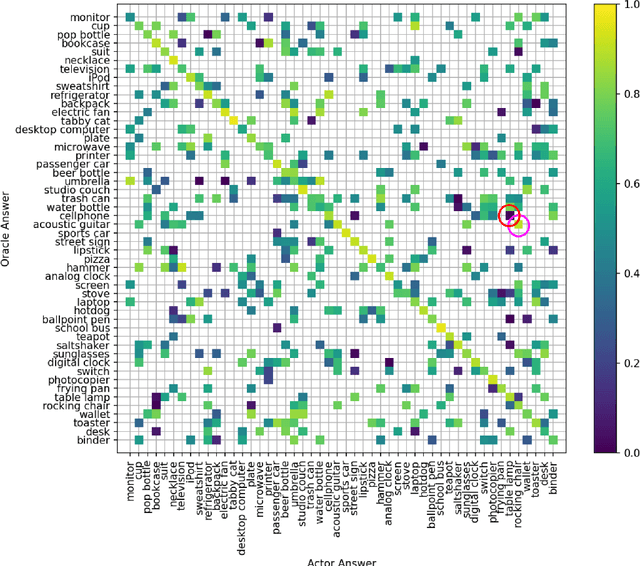

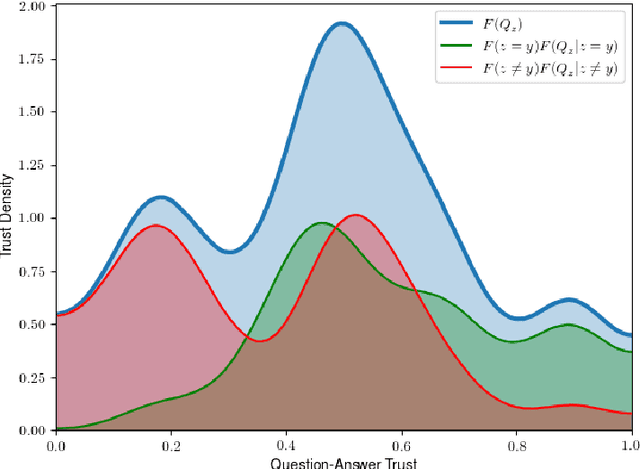

The advances and successes in deep learning in recent years have led to considerable efforts and investments into its widespread adoption for a wide variety of applications, ranging from personal assistants and intelligent navigation to search and product recommendation in e-commerce. With this tremendous rise in deep learning adoption come questions about the trustworthiness of the deep neural networks that power these applications. Motivated to answer such questions, there has been recent interest in trust quantification. In this work, we introduce the concept of the trust matrix, a novel trust quantification strategy that leverages the recently introduced question-answer trust metric by Wong et al. to provide deeper, more detailed insights into where trust breaks down for a given deep neural network given a set of questions. More specifically, a trust matrix defines the expected question-answer trust for each actor-oracle answer scenario, allowing one to quickly spot areas of low trust that need to be addressed to improve the trustworthiness of a deep neural network. The proposed trust matrix is simple to calculate, humanly interpretable, and, to the best of the authors' knowledge, the first to study trust at the actor-oracle answer level. We further extend the concept of trust densities with the notion of conditional trust densities. We experimentally leverage trust matrices to study several well-known deep neural network architectures for image recognition, and further study the trust density and conditional trust densities for an interesting actor-oracle answer scenario. The results illustrate that trust matrices, along with conditional trust densities, can be useful additions to the existing suite of trust quantification metrics for guiding practitioners and regulators in creating and certifying deep learning solutions for trusted operation.
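To make the idea of "expected question-answer trust for each actor-oracle answer scenario" concrete, the following is a minimal sketch rather than the authors' implementation. It assumes the question-answer trust of Wong et al. takes the form C^α when the network's (actor's) answer matches the oracle and (1 − C)^β when it does not, where C is the softmax confidence in the predicted answer. The function names, the `alpha` and `beta` defaults, and the choice of rows for oracle answers and columns for actor answers are all illustrative assumptions, not details stated in the abstract.

```python
import numpy as np

def question_answer_trust(confidence, predicted, oracle, alpha=1.0, beta=1.0):
    """Per-sample trust (assumed form): reward confidence when the actor is
    right, penalize confidence when the actor is wrong."""
    confidence = np.asarray(confidence, dtype=float)
    correct = np.asarray(predicted) == np.asarray(oracle)
    return np.where(correct, confidence ** alpha, (1.0 - confidence) ** beta)

def trust_matrix(confidence, predicted, oracle, num_classes, alpha=1.0, beta=1.0):
    """Mean question-answer trust for each (oracle answer, actor answer) pair.

    Rows index the oracle answer and columns the actor answer (an assumed
    convention). Cells with no samples are set to NaN.
    """
    predicted = np.asarray(predicted)
    oracle = np.asarray(oracle)
    trust = question_answer_trust(confidence, predicted, oracle, alpha, beta)

    sums = np.zeros((num_classes, num_classes))
    counts = np.zeros((num_classes, num_classes))
    # Accumulate trust values and sample counts per (oracle, actor) cell.
    np.add.at(sums, (oracle, predicted), trust)
    np.add.at(counts, (oracle, predicted), 1)

    with np.errstate(invalid="ignore", divide="ignore"):
        return np.where(counts > 0, sums / counts, np.nan)

# Hypothetical toy data: four questions, two possible answers.
toy_matrix = trust_matrix(
    confidence=[0.9, 0.8, 0.6, 0.7],
    predicted=[0, 1, 1, 0],
    oracle=[0, 1, 0, 0],
    num_classes=2,
)
```

Under these assumptions, low values on the diagonal flag answers the network gets right but without confidence, while low off-diagonal values flag confidently wrong answers. The per-sample trust values that fall into a single cell could likewise be collected and plotted as a histogram to approximate a conditional trust density for that scenario.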
