What Makes Good Few-shot Examples for Vision-Language Models?

Paper and Code

May 22, 2024

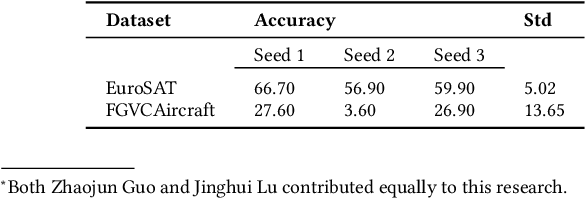

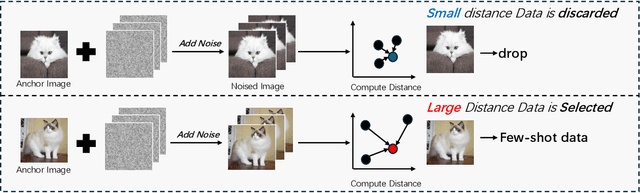

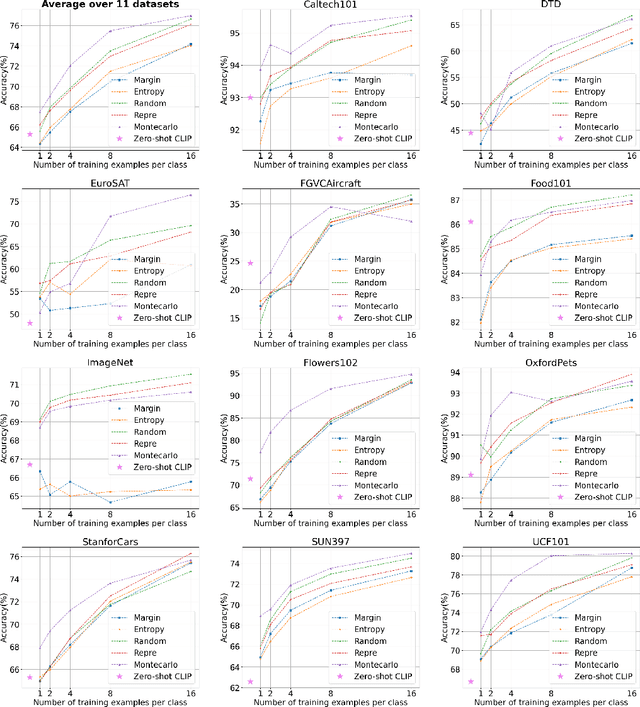

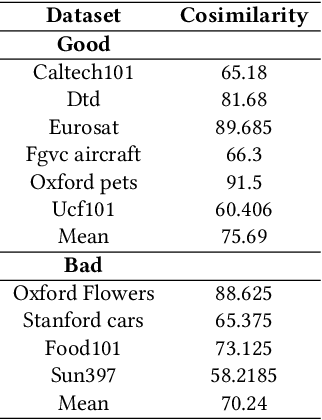

Although few-shot tuning of pre-trained vision-language (VL) models has advanced performance on downstream tasks, our empirical study shows that few-shot learning outcomes depend heavily on which training examples are selected, a factor that prior research has overlooked. In this study, we investigate strategies for selecting few-shot training examples that are more effective than random sampling, with the aim of improving existing few-shot prompt learning methods. To this end, we evaluate several Active Learning (AL) techniques for instance selection, such as Entropy and Margin of Confidence, in the few-shot training setting. We also introduce two selection methods, Representativeness (REPRE) and Gaussian Monte Carlo (Montecarlo), designed to identify informative examples for labeling with respect to pre-trained VL models. Our findings show that both REPRE and Montecarlo significantly outperform random selection and AL-based strategies in few-shot training scenarios. These instance selection methods are also model-agnostic, so they can be combined with a wide range of few-shot training methods.
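To make the two AL baselines named in the abstract concrete, the following is a minimal sketch of Entropy and Margin of Confidence scoring over an unlabeled pool. It is not the authors' implementation: it assumes class probabilities are already available (for example, from a VL model's zero-shot predictions), the function names and toy probabilities are hypothetical, and REPRE and Montecarlo are not shown because the abstract does not specify them.

```python
import numpy as np

def entropy_scores(probs):
    """Shannon entropy of each row; higher means more uncertain."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -(p * np.log(p)).sum(axis=1)

def margin_scores(probs):
    """1 - (top-1 prob - top-2 prob); higher means more uncertain."""
    ordered = np.sort(probs, axis=1)
    return 1.0 - (ordered[:, -1] - ordered[:, -2])

def select_top_k(scores, k):
    """Indices of the k highest-scoring pool examples."""
    return np.argsort(-scores, kind="stable")[:k]

# Hypothetical class probabilities for a pool of four unlabeled images
# (assumed to come from the model's zero-shot predictions).
probs = np.array([
    [0.98, 0.01, 0.01],
    [0.40, 0.35, 0.25],
    [0.50, 0.49, 0.01],
    [0.70, 0.20, 0.10],
])

entropy_pick = select_top_k(entropy_scores(probs), k=2)
margin_pick = select_top_k(margin_scores(probs), k=2)
print("entropy picks:", entropy_pick.tolist())
print("margin picks:", margin_pick.tolist())
```

On this toy pool the two criteria choose different examples: entropy favors predictions spread across all classes, while margin favors a near tie between the top two classes. This illustrates why the choice of selection rule can change the few-shot training set.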
