Weakly Supervised Concept Map Generation through Task-Guided Graph Translation

Nov 01, 2021

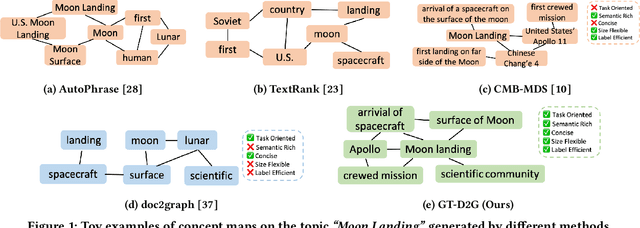

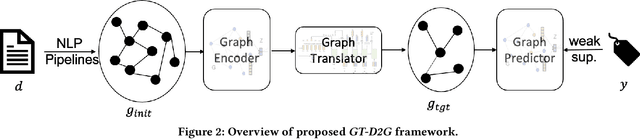

Concept map generation techniques have developed rapidly in recent years because they provide well-structured summaries of knowledge from free text. Traditional unsupervised methods do not generate task-oriented concept maps, whereas deep generative models require large amounts of training data. In this work, we present GT-D2G (Graph Translation based Document-To-Graph), an automatic concept map generation framework that leverages generalized NLP pipelines to derive semantically rich initial graphs and translates them into more concise structures under the weak supervision of document labels. The quality and interpretability of the resulting concept maps are validated through human evaluation on three real-world corpora, and their downstream utility is further demonstrated in controlled experiments with scarce document labels.
