Visually plausible human-object interaction capture from wearable sensors

May 05, 2022

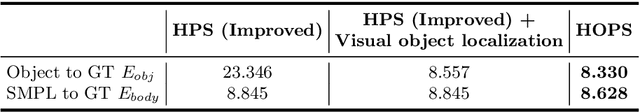

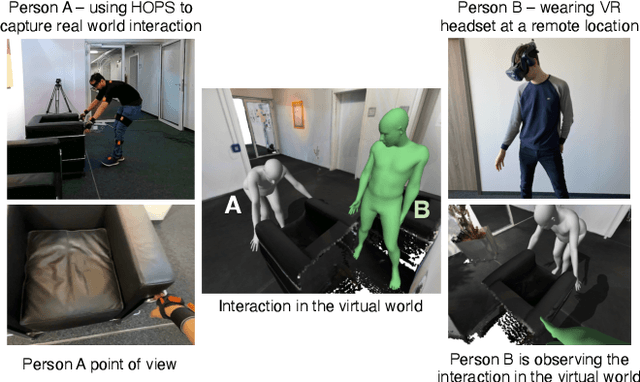

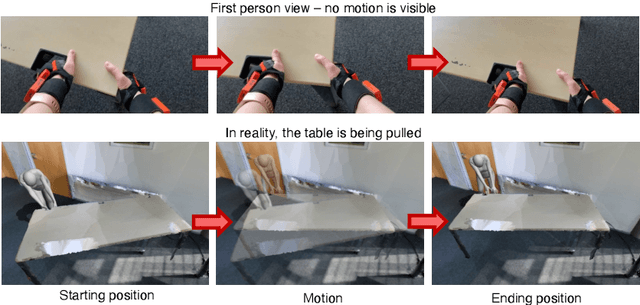

In everyday life, humans naturally modify their surrounding environment through interactions, e.g., moving a chair to sit on it. To reproduce such interactions in virtual spaces (e.g., the metaverse), we need to capture and model them, including changes in the scene geometry, ideally from ego-centric input alone (a head camera and body-worn inertial sensors). This is an extremely hard problem, especially since the object or scene might not be visible from the head camera (e.g., a human not looking at a chair while sitting down, or not looking at the door handle while opening a door). In this paper, we present HOPS, the first method to capture interactions such as dragging objects and opening doors from ego-centric data alone. Central to our method is reasoning about human-object interactions, which allows us to track objects even when they are not visible from the head camera. HOPS localizes and registers both the human and the dynamic object in a pre-scanned static scene. HOPS is an important first step towards advanced AR/VR applications based on immersive virtual universes, and can provide human-centric training data to teach machines to interact with their surroundings. The supplementary video, data, and code will be available on our project page at http://virtualhumans.mpi-inf.mpg.de/hops/
