Visualizing and Understanding Patch Interactions in Vision Transformer


Mar 11, 2022

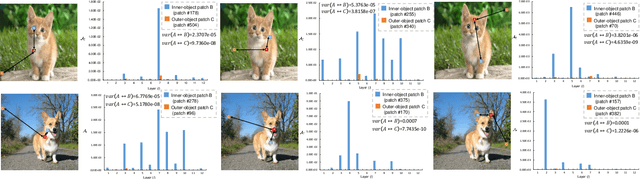

Vision Transformer (ViT) has become a leading tool in various computer vision tasks, owing to its self-attention mechanism, which learns visual representations explicitly through cross-patch information interactions. Despite this success, the literature seldom explores the explainability of vision transformers, and there is no clear picture of how the attention mechanism's patch-to-patch correlations affect performance or what further potential they hold. In this work, we propose a novel explainable visualization approach to analyze and interpret the crucial attention interactions among patches in vision transformers. Specifically, we first introduce a quantification indicator to measure the impact of patch interaction and verify this quantification on attention window design and the removal of indiscriminative patches. Then, we exploit the effective responsive field of each patch in ViT and devise a window-free transformer architecture accordingly. Extensive experiments on ImageNet demonstrate that the proposed quantitative method facilitates ViT model learning, improving top-1 accuracy by up to 4.28%. Moreover, results on downstream fine-grained recognition tasks further validate the generalization of our proposal.
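For readers unfamiliar with the cross-patch interactions the abstract refers to, the following minimal sketch computes a patch-to-patch attention map for a single head using standard scaled dot-product attention. It is not the paper's quantification indicator or its window-free architecture; the function name `patch_attention`, the random projection matrices, and the sizes (196 patches, 64-dimensional embeddings, 32-dimensional head) are assumptions chosen only for illustration.

```python
import numpy as np


def patch_attention(x, w_q, w_k):
    """Return a (num_patches, num_patches) attention map for one head.

    x   : (num_patches, dim) patch embeddings
    w_q : (dim, head_dim) query projection (hypothetical weights)
    w_k : (dim, head_dim) key projection (hypothetical weights)
    """
    q = x @ w_q
    k = x @ w_k
    # Scaled dot-product scores between every pair of patches.
    scores = q @ k.T / np.sqrt(q.shape[-1])
    # Subtract the row maximum for a numerically stable softmax.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    return weights / weights.sum(axis=-1, keepdims=True)


# Illustrative sizes: a 14 x 14 grid of patches with random embeddings.
rng = np.random.default_rng(0)
num_patches, dim, head_dim = 196, 64, 32
x = rng.standard_normal((num_patches, dim))
w_q = rng.standard_normal((dim, head_dim)) / np.sqrt(dim)
w_k = rng.standard_normal((dim, head_dim)) / np.sqrt(dim)

attn = patch_attention(x, w_q, w_k)
```

Row `i` of `attn` is a probability distribution over all patches, so `attn[i, j]` can be read as how strongly patch `i` attends to patch `j`. Maps of this kind are the raw material that attention-visualization methods typically start from.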
