Visual Relationship Detection with Relative Location Mining

Paper and Code

Nov 02, 2019

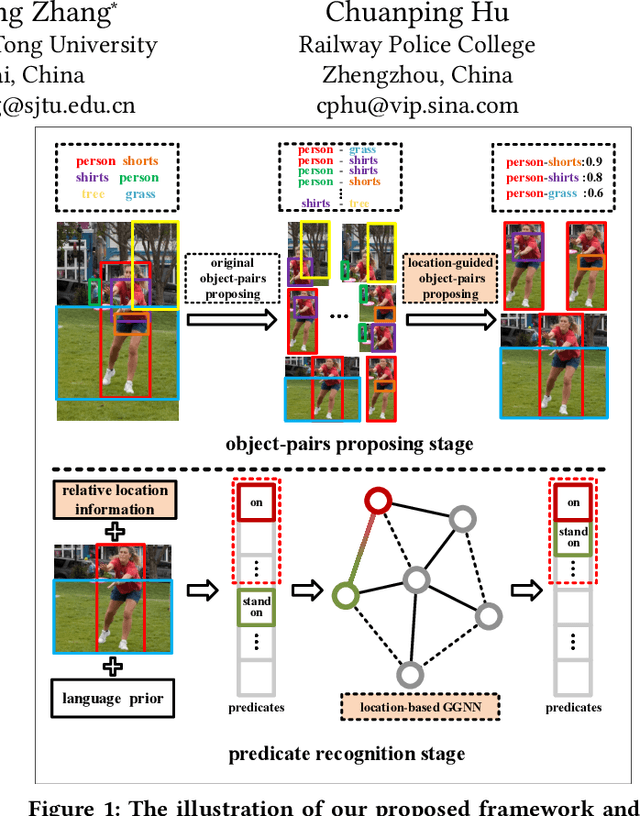

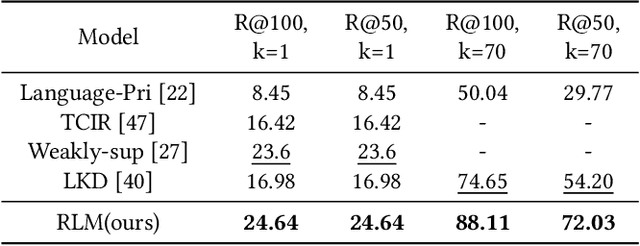

Visual relationship detection, the challenging task of finding and distinguishing the interactions between object pairs in an image, has received much attention recently. In this work, we propose a novel visual relationship detection framework that mines and exploits the relative location of each object pair at every stage of the procedure. In both stages, object-pair proposal and predicate recognition, the relative location information of each object pair is abstracted and encoded as an auxiliary feature to improve the discriminative capability of that stage. Moreover, a Gated Graph Neural Network (GGNN) is introduced to mine and measure the relevance of predicates using relative location. With the location-based GGNN, non-exclusive predicates with similar spatial positions are first clustered and then smoothed toward close classification scores, which further increases top-$n$ recall. Experiments on two widely used datasets, VRD and VG, show that by deeply mining and exploiting relative location information, our proposed model significantly outperforms the current state of the art.
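To make the idea of a relative location feature concrete, here is a minimal sketch of one common way to encode the spatial relation between a subject box and an object box. The abstract does not specify the encoding used in the paper, so everything here is an assumption for illustration: the `Box` type, the `relative_location` function, and the choice of features (normalized center offsets, log size ratios, and IoU) are hypothetical and may differ from the authors' method.

```python
import math
from typing import List, NamedTuple


class Box(NamedTuple):
    """Axis-aligned bounding box in pixel coordinates (hypothetical helper type)."""
    x1: float
    y1: float
    x2: float
    y2: float


def relative_location(subj: Box, obj: Box) -> List[float]:
    """Encode the location of `obj` relative to `subj` as a 5-d vector.

    Assumed features (not taken from the paper):
      dx, dy       : center offset, normalized by the subject's width/height
      log_w, log_h : log ratio of object size to subject size
      iou          : intersection over union of the two boxes
    """
    sw, sh = subj.x2 - subj.x1, subj.y2 - subj.y1
    ow, oh = obj.x2 - obj.x1, obj.y2 - obj.y1
    if min(sw, sh, ow, oh) <= 0:
        raise ValueError("boxes must have positive width and height")

    # Box centers
    scx, scy = subj.x1 + sw / 2, subj.y1 + sh / 2
    ocx, ocy = obj.x1 + ow / 2, obj.y1 + oh / 2

    # Scale-invariant offset and size ratio
    dx = (ocx - scx) / sw
    dy = (ocy - scy) / sh
    log_w = math.log(ow / sw)
    log_h = math.log(oh / sh)

    # Overlap between the two boxes
    iw = max(0.0, min(subj.x2, obj.x2) - max(subj.x1, obj.x1))
    ih = max(0.0, min(subj.y2, obj.y2) - max(subj.y1, obj.y1))
    inter = iw * ih
    union = sw * sh + ow * oh - inter
    iou = inter / union

    return [dx, dy, log_w, log_h, iou]


if __name__ == "__main__":
    person = Box(0, 0, 10, 10)
    bike = Box(5, 0, 15, 10)
    print(relative_location(person, bike))
```

A vector like this could be concatenated with appearance features before scoring object pairs or predicates. Normalizing by the subject's size keeps the feature invariant to image scale, which is one reason this style of encoding is widely used.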
