Verification of Image-based Neural Network Controllers Using Generative Models

Paper and Code

May 14, 2021

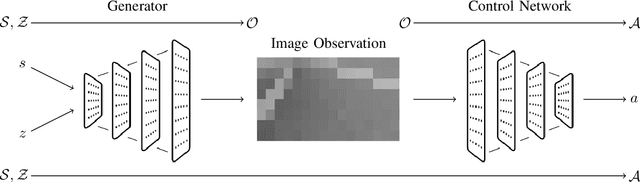

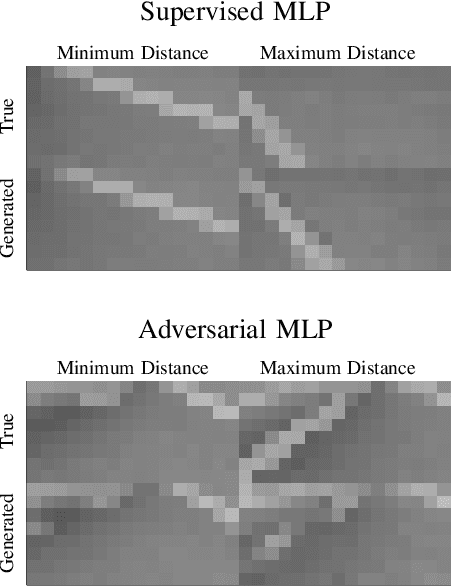

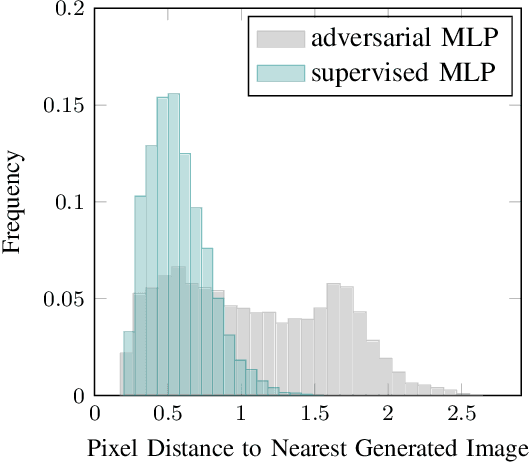

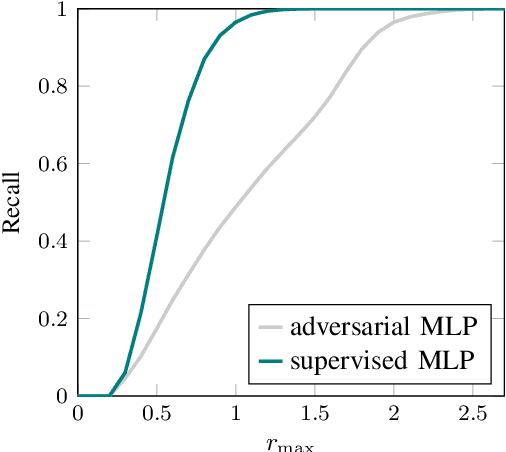

Neural networks are often used to process information from image-based sensors to produce control actions. While they are effective for this task, the complex nature of neural networks makes their output difficult to verify and predict, limiting their use in safety-critical systems. For this reason, recent work has focused on combining techniques in formal methods and reachability analysis to obtain guarantees on the closed-loop performance of neural network controllers. However, these techniques do not scale to the high-dimensional and complicated input space of image-based neural network controllers. In this work, we propose a method to address these challenges by training a generative adversarial network (GAN) to map states to plausible input images. By concatenating the generator network with the control network, we obtain a network with a low-dimensional input space. This insight allows us to use existing closed-loop verification tools to obtain formal guarantees on the performance of image-based controllers. We apply our approach to provide safety guarantees for an image-based neural network controller for an autonomous aircraft taxi problem. We guarantee that the controller will keep the aircraft on the runway and guide the aircraft towards the center of the runway. The guarantees we provide are with respect to the set of input images modeled by our generator network, so we provide a recall metric to evaluate how well the generator captures the space of plausible images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge