UNIFY: a Unified Policy Designing Framework for Solving Constrained Optimization Problems with Machine Learning


Oct 25, 2022

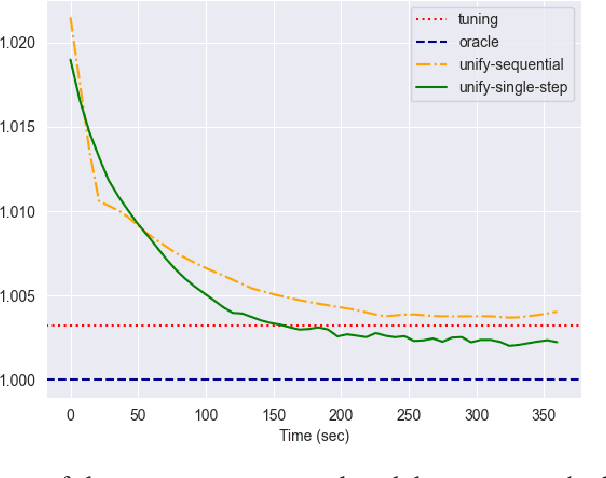

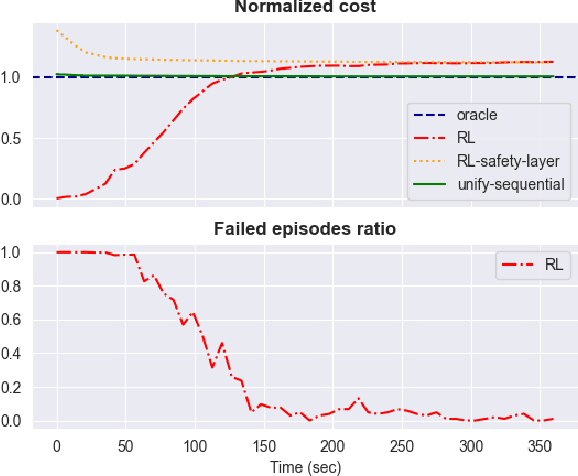

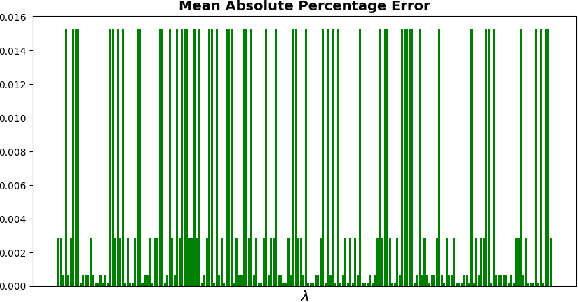

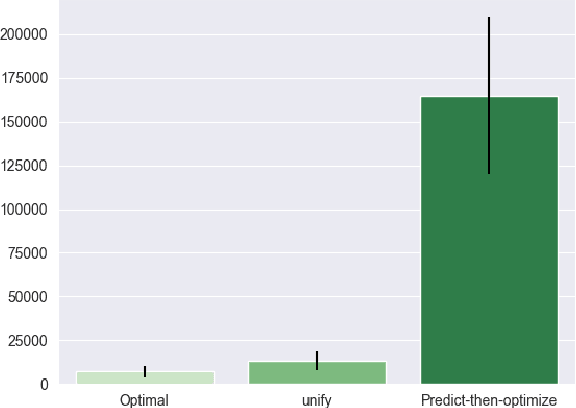

The interplay between Machine Learning (ML) and Constrained Optimization (CO) has recently attracted increasing interest, giving rise to a prolific research area that covers, for example, Decision Focused Learning and Constrained Reinforcement Learning. Such approaches aim to tackle complex decision problems under uncertainty over multiple stages, involving both explicit knowledge (cost function, constraints) and implicit knowledge (from data), possibly subject to execution time restrictions. Although a good degree of success has been achieved, existing methods still have limitations in both applicability and effectiveness. For problems in this class, we propose UNIFY, a unified framework for designing a solution policy for complex decision-making problems. Our approach decomposes the policy into two stages, namely an unconstrained ML model and a CO problem, to take advantage of the strengths of each approach while compensating for its weaknesses. With a little design effort, UNIFY can generalize several existing approaches, thus extending their applicability. We demonstrate the method's effectiveness on two practical problems, namely an Energy Management System and the Set Multi-cover with stochastic coverage requirements. Finally, we highlight some current challenges of our method and future research directions that can benefit from the cross-fertilization of the two fields.
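As a rough illustration of the two-stage decomposition described in the abstract, the sketch below chains an unconstrained model with a small constrained problem. It is a toy under stated assumptions, not the paper's implementation: the linear "model", the idea that its output acts as cost coefficients of the CO stage, the brute-force solver, and all names (`ml_model`, `co_stage`, `policy`, `weights`, `demand`, `capacities`) are hypothetical and chosen only for illustration.

```python
from itertools import product


def ml_model(observation, weights):
    """Unconstrained stage (hypothetical): map an observation to a vector
    of parameters that the CO stage will use as cost coefficients."""
    return [w * observation for w in weights]


def co_stage(costs, demand, capacities):
    """Constrained stage (toy): choose integers x_i in [0, capacity_i]
    minimizing sum(costs_i * x_i) subject to sum(x) >= demand.
    Brute-force enumeration, only suitable for tiny instances."""
    best, best_cost = None, float("inf")
    for x in product(*(range(c + 1) for c in capacities)):
        if sum(x) < demand:
            continue  # infeasible: coverage requirement not met
        cost = sum(c * xi for c, xi in zip(costs, x))
        if cost < best_cost:
            best, best_cost = x, cost
    return best


def policy(observation, weights, demand, capacities):
    """Full policy: the ML output parameterizes the CO problem, so the
    returned decision satisfies the constraints by construction."""
    return co_stage(ml_model(observation, weights), demand, capacities)


decision = policy(2.0, [1.0, 3.0], demand=3, capacities=[2, 2])
print(decision)
```

In this arrangement the model itself never has to respect the constraints; feasibility is enforced entirely by the optimization stage, which is one plausible reading of how the decomposition lets each component cover the other's weakness.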
