Uniform-in-Time Propagation of Chaos for Mean Field Langevin Dynamics

Paper and Code

Dec 06, 2022

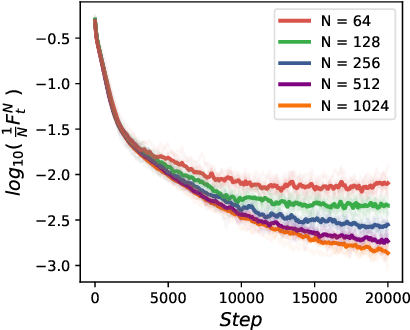

We study the uniform-in-time propagation of chaos for mean field Langevin dynamics with convex mean field potenital. Convergences in both Wasserstein-$2$ distance and relative entropy are established. We do not require the mean field potenital functional to bear either small mean field interaction or displacement convexity, which are common constraints in the literature. In particular, it allows us to study the efficiency of the noisy gradient descent algorithm for training two-layer neural networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge