Understanding Weight Similarity of Neural Networks via Chain Normalization Rule and Hypothesis-Training-Testing

Paper and Code

Aug 08, 2022

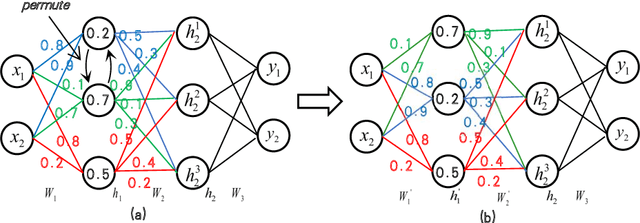

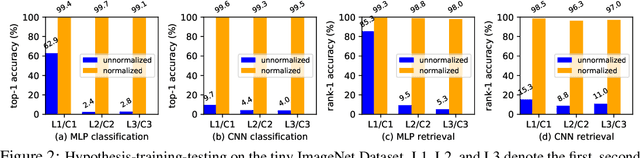

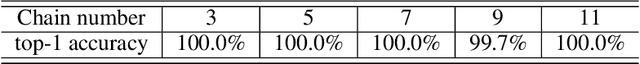

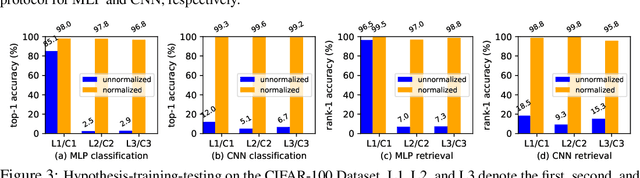

We present a weight similarity measure method that can quantify the weight similarity of non-convex neural networks. To understand the weight similarity of different trained models, we propose to extract the feature representation from the weights of neural networks. We first normalize the weights of neural networks by introducing a chain normalization rule, which is used for weight representation learning and weight similarity measure. We extend the traditional hypothesis-testing method to a hypothesis-training-testing statistical inference method to validate the hypothesis on the weight similarity of neural networks. With the chain normalization rule and the new statistical inference, we study the weight similarity measure on Multi-Layer Perceptron (MLP), Convolutional Neural Network (CNN), and Recurrent Neural Network (RNN), and find that the weights of an identical neural network optimized with the Stochastic Gradient Descent (SGD) algorithm converge to a similar local solution in a metric space. The weight similarity measure provides more insight into the local solutions of neural networks. Experiments on several datasets consistently validate the hypothesis of weight similarity measure.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge