Uncertainty Averse Pushing with Model Predictive Path Integral Control

Paper and Code

Oct 15, 2017

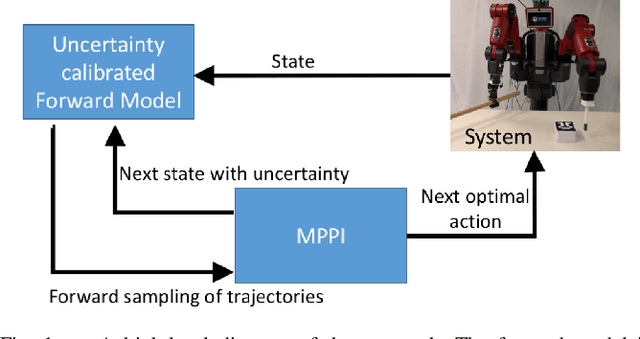

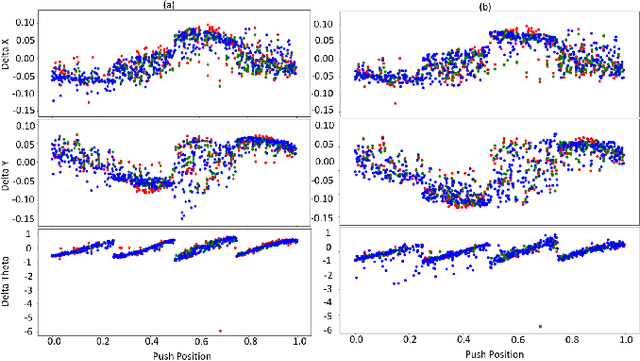

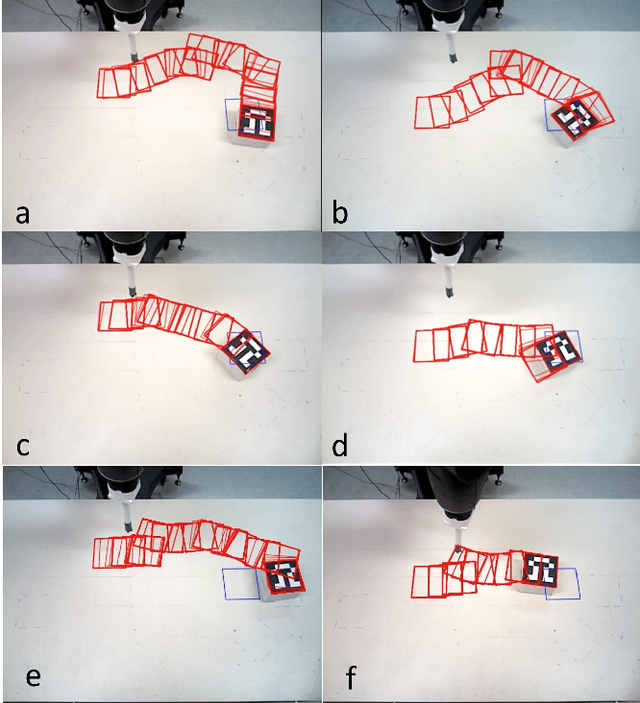

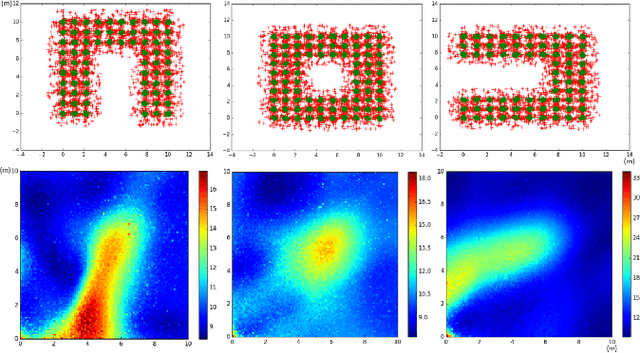

Planning robust robot manipulation requires good forward models that enable robust plans to be found. This work shows how to achieve this using a forward model learned from robot data to plan push manipulations. We explore learning methods (Gaussian Process Regression, and an Ensemble of Mixture Density Networks) that give estimates of the uncertainty in their predictions. These learned models are utilised by a model predictive path integral (MPPI) controller to plan how to push the box to a goal location. The planner avoids regions of high predictive uncertainty in the forward model. This includes both inherent uncertainty in dynamics, and meta uncertainty due to limited data. Thus, pushing tasks are completed in a robust fashion with respect to estimated uncertainty in the forward model and without the need of differentiable cost functions. We demonstrate the method on a real robot, and show that learning can outperform physics simulation. Using simulation, we also show the ability to plan uncertainty averse paths.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge