UMS-VINS: United Monocular-Stereo Features for Visual-Inertial Tightly Coupled Odometry

Paper and Code

Mar 15, 2023

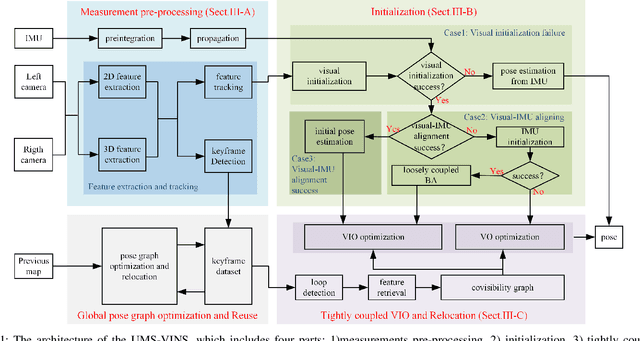

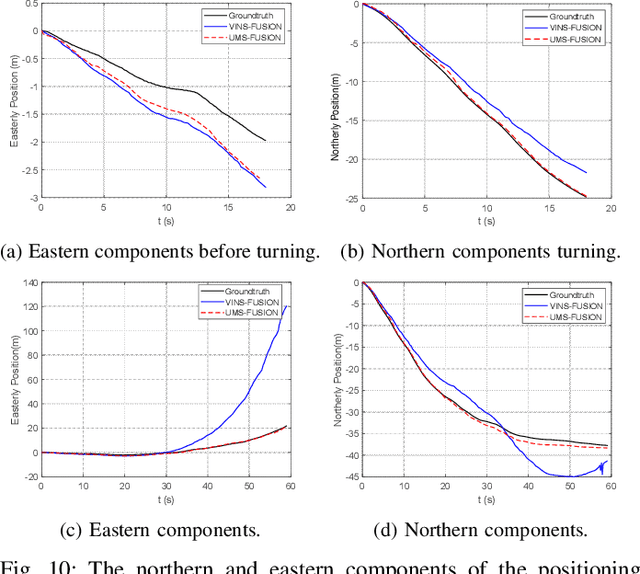

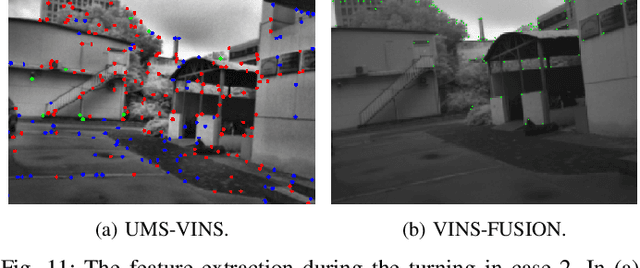

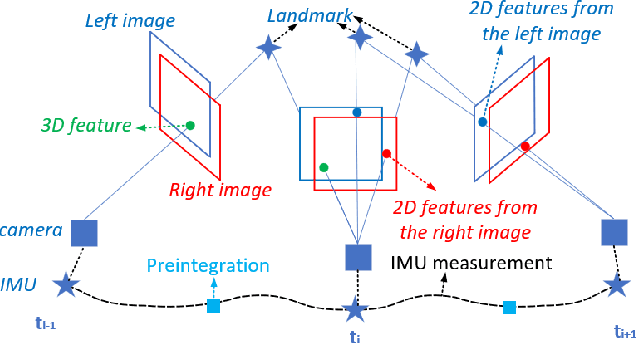

This paper introduces the united monocular-stereo features into a visual-inertial tightly coupled odometry (UMS-VINS) for robust pose estimation. UMS-VINS requires two cameras and a low-cost inertial measurement unit (IMU). The UMS-VINS is an evolution of VINS-FUSION, which modifies the VINS-FUSION from the following three perspectives. 1) UMS-VINS extracts and tracks features from the sub-pixel plane to achieve better positions of the features. 2) UMS-VINS introduces additional 2-dimensional features from the left and/or right cameras. 3) If the visual initialization fails, the IMU propagation is directly used for pose estimation, and if the visual-IMU alignment fails, UMS-VINS estimates the pose via the visual odometry. The performances on both public datasets and new real-world experiments indicate that the proposed UMS-VINS outperforms the VINS-FUSION from the perspective of localization accuracy, localization robustness, and environmental adaptability.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge