Tutoring Reinforcement Learning via Feedback Control

Paper and Code

Dec 12, 2020

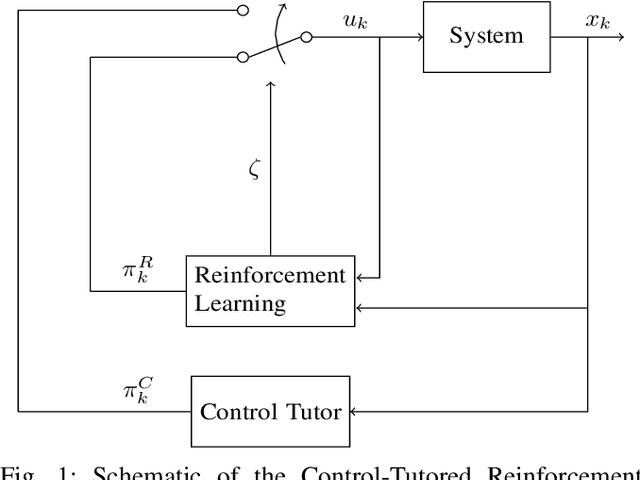

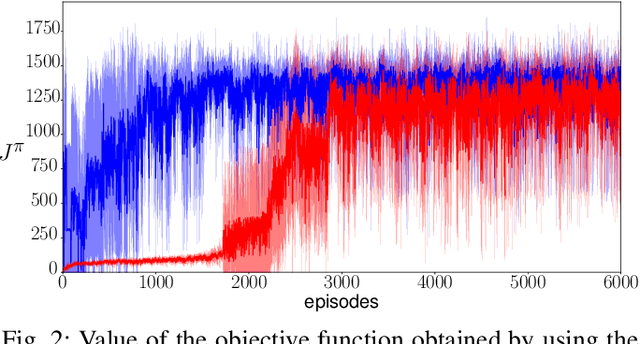

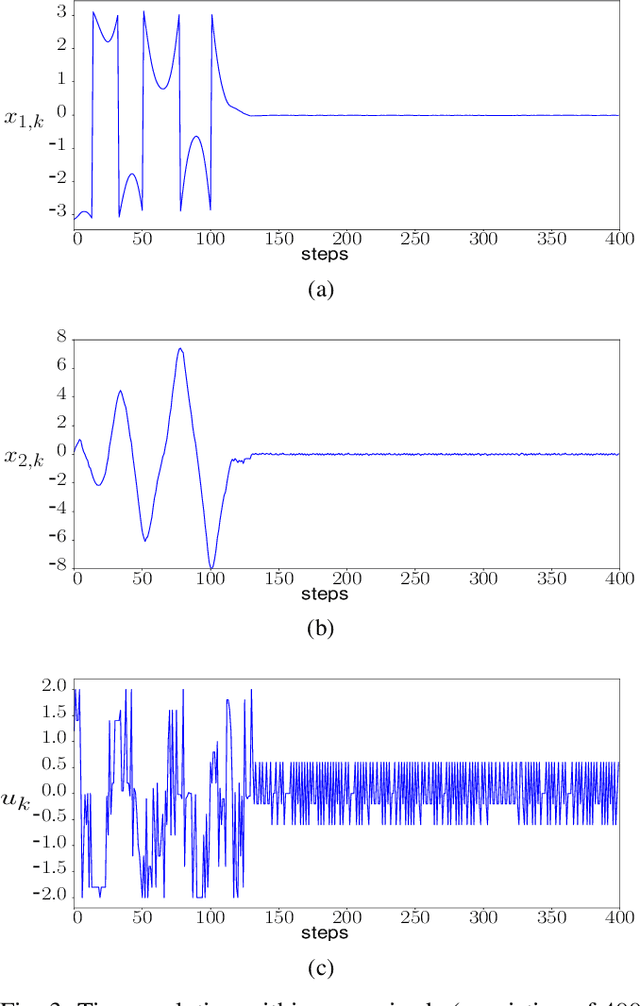

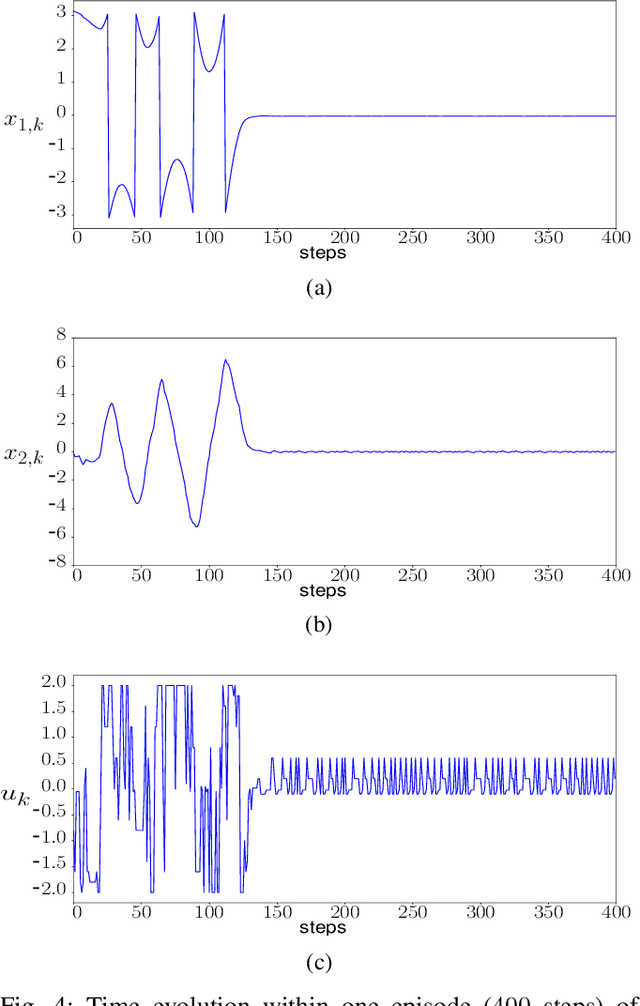

We introduce a control-tutored reinforcement learning (CTRL) algorithm. The idea is to enhance tabular learning algorithms by means of a control strategy with limited knowledge of the system model. By tutoring the learning process, the learning rate can be substantially reduced. We use the classical problem of stabilizing an inverted pendulum as a benchmark to numerically illustrate the advantages and disadvantages of the approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge