Translating multispectral imagery to nighttime imagery via conditional generative adversarial networks

Paper and Code

Dec 28, 2019

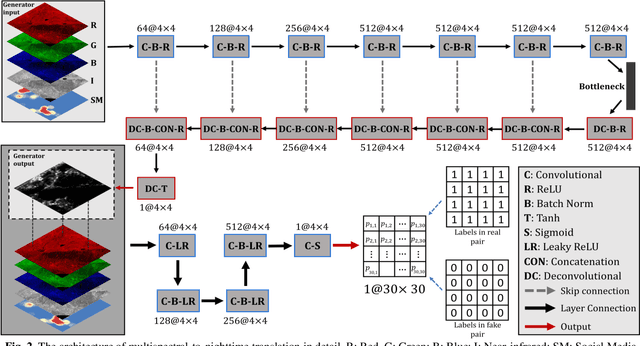

Nighttime satellite imagery has been applied in a wide range of fields. However, our limited understanding of how observed light intensity is formed and whether it can be simulated greatly hinders its further application. This study explores the potential of conditional Generative Adversarial Networks (cGAN) in translating multispectral imagery to nighttime imagery. A popular cGAN framework, pix2pix, was adopted and modified to facilitate this translation using gridded training image pairs derived from Landsat 8 and Visible Infrared Imaging Radiometer Suite (VIIRS). The results of this study prove the possibility of multispectral-to-nighttime translation and further indicate that, with the additional social media data, the generated nighttime imagery can be very similar to the ground-truth imagery. This study fills the gap in understanding the composition of satellite observed nighttime light and provides new paradigms to solve the emerging problems in nighttime remote sensing fields, including nighttime series construction, light desaturation, and multi-sensor calibration.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge