Translating automated brain tumour phenotyping to clinical neuroimaging


Jun 13, 2022

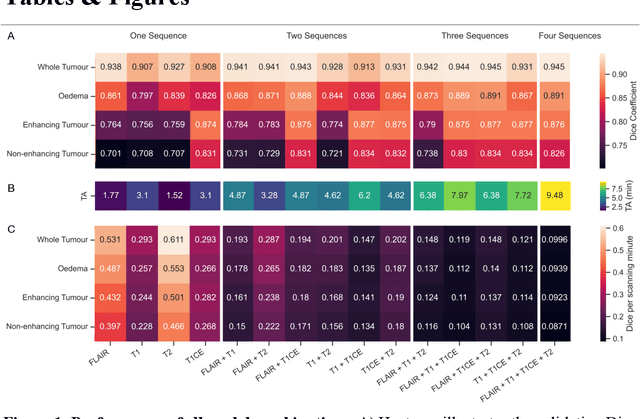

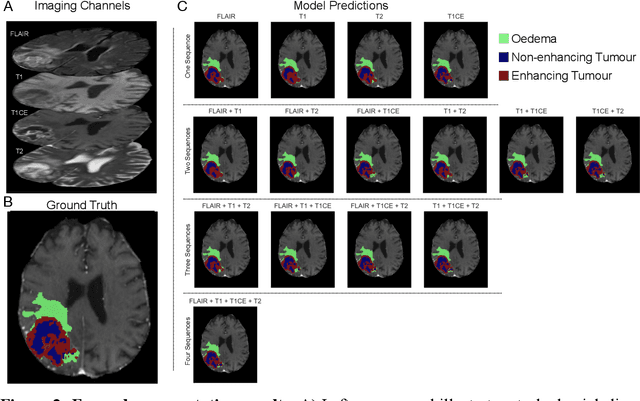

Background: The complex heterogeneity of brain tumours is increasingly recognized to demand data of a magnitude and richness that only fully inclusive, large-scale collections drawn from routine clinical care could plausibly offer. This is a task contemporary machine learning could facilitate, especially in neuroimaging, but its ability to deal with the incomplete data common in real-world clinical practice remains unknown. Here we apply state-of-the-art methods to large-scale, multi-site MRI data to quantify the comparative fidelity of automated tumour segmentation models that replicate the various levels of data completeness observed in clinical reality.

Methods: We compare deep learning (nnU-Net-derived) tumour segmentation models with all possible combinations of T1, contrast-enhanced T1, T2, and FLAIR imaging sequences, trained and validated with five-fold cross-validation on the 2021 BraTS-RSNA glioma population of 1251 patients, and tested on a diverse, real-world sample of 50 patients.

Results: Models trained on incomplete data segmented lesions well, often equivalently to those trained on complete data, exhibiting Dice coefficients of 0.907 (single sequence) to 0.945 (full datasets) for whole tumours, and 0.701 (single sequence) to 0.891 (full datasets) for component tissue types. Incomplete data segmentation models could accurately detect enhancing tumour in the absence of contrast imaging, quantifying its volume with an R² between 0.95 and 0.97.

Conclusions: Deep learning segmentation models characterize tumours well when data are missing and can even detect enhancing tissue without the use of contrast. This suggests that translation to clinical practice, where incomplete data are common, may be easier than hitherto believed, and may be of value in reducing dependence on contrast use.
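To make the experimental design concrete, the following is a minimal sketch, not the authors' code, of the two ideas the abstract relies on: enumerating every combination of the four MRI sequences, and scoring a predicted mask against a reference with the Dice coefficient. It assumes that "all possible combinations" means every non-empty subset (15 in total). The sequence labels, function names, and the convention of returning 1.0 when both masks are empty are illustrative assumptions.

```python
from itertools import combinations

# Hypothetical labels for the four sequences named in the abstract.
SEQUENCES = ("T1", "T1CE", "T2", "FLAIR")


def sequence_combinations(sequences=SEQUENCES):
    """Return every non-empty subset of the sequences, smallest first."""
    return [
        combo
        for size in range(1, len(sequences) + 1)
        for combo in combinations(sequences, size)
    ]


def dice(pred, truth):
    """Dice coefficient between two flattened binary masks of equal length.

    Dice = 2 * |pred AND truth| / (|pred| + |truth|).
    Assumption: two empty masks count as perfect agreement (1.0).
    """
    pred = [bool(v) for v in pred]
    truth = [bool(v) for v in truth]
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of voxels")
    overlap = sum(p and t for p, t in zip(pred, truth))
    total = sum(pred) + sum(truth)
    return 1.0 if total == 0 else 2.0 * overlap / total


if __name__ == "__main__":
    for combo in sequence_combinations():
        print("+".join(combo))
```

Under these assumptions, one model would be trained per listed combination, and each model's predicted masks would be compared with the reference segmentation using `dice`.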
