Transformer based Grapheme-to-Phoneme Conversion


Apr 14, 2020

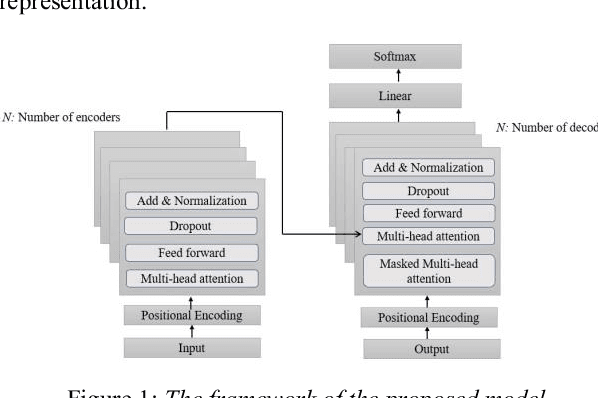

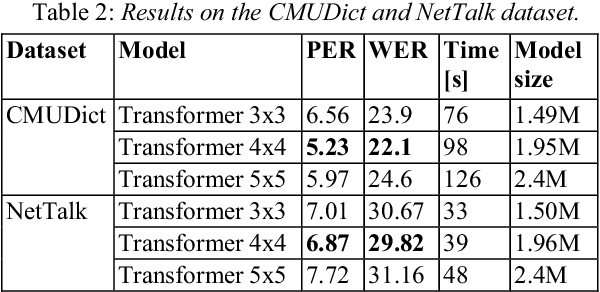

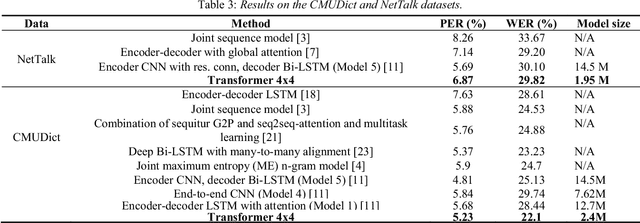

The attention mechanism is one of the most successful techniques in deep-learning-based Natural Language Processing (NLP). The transformer network architecture is based entirely on attention mechanisms, and it outperforms sequence-to-sequence models in neural machine translation without using recurrent or convolutional layers. Grapheme-to-phoneme (G2P) conversion is the task of converting letters (a grapheme sequence) to their pronunciation (a phoneme sequence). It plays a significant role in text-to-speech (TTS) and automatic speech recognition (ASR) systems. In this paper, we investigate the application of the transformer architecture to G2P conversion and compare its performance with recurrent and convolutional neural network based approaches. Phoneme and word error rates are evaluated on the CMUDict dataset for US English and on the NetTalk dataset. The results show that transformer-based G2P outperforms the convolutional approach in terms of word error rate, and that it significantly improves on previous recurrent approaches (without attention) in both word and phoneme error rates on both datasets. Furthermore, the proposed model is much smaller than the models used in previous approaches.
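To make the two evaluation metrics concrete, the following is a minimal sketch of how phoneme error rate (PER) and word error rate (WER) are commonly computed for G2P. It assumes PER is the total Levenshtein distance between predicted and reference phoneme sequences divided by the total number of reference phonemes, that WER is the fraction of words whose predicted pronunciation differs from the reference, and that each word has a single reference pronunciation. The exact definitions used in the paper may differ, and the function names and toy data here are hypothetical.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two phoneme sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def error_rates(references, hypotheses):
    """Return (PER, WER) for parallel lists of phoneme sequences."""
    pairs = list(zip(references, hypotheses))
    total_edits = sum(edit_distance(r, h) for r, h in pairs)
    total_phonemes = sum(len(r) for r in references)
    wrong_words = sum(r != h for r, h in pairs)
    return total_edits / total_phonemes, wrong_words / len(references)


# Toy data (assumed, not from the paper): "cat" is correct, "dog" is not.
refs = [["K", "AE", "T"], ["D", "AO", "G"]]
hyps = [["K", "AE", "T"], ["D", "AA", "G", "Z"]]
per, wer = error_rates(refs, hyps)
print(f"PER = {per:.3f}, WER = {wer:.3f}")
```

In this toy case the second word needs one substitution and one insertion, so two edits over six reference phonemes are counted for PER, while one of the two words is wrong for WER.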
