Training Neural Networks by Using Power Linear Units (PoLUs)

Paper and Code

Feb 01, 2018

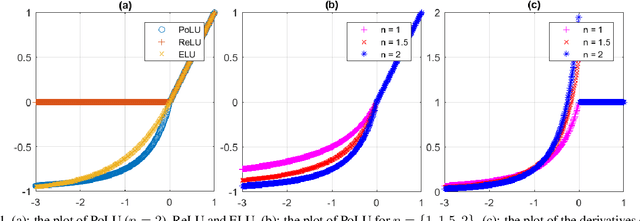

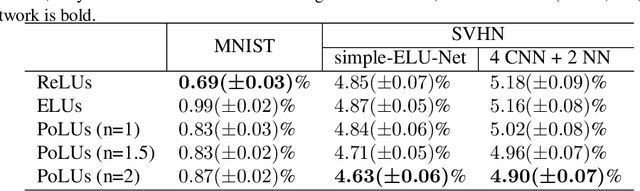

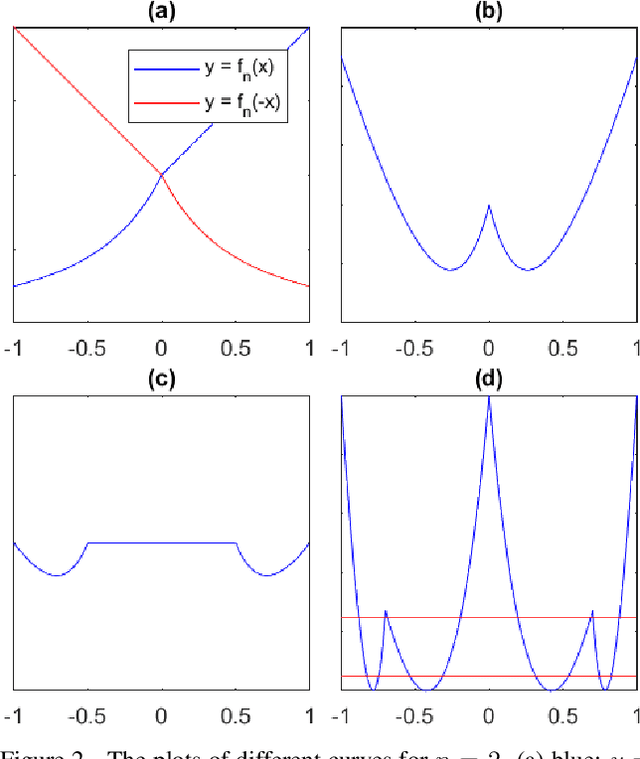

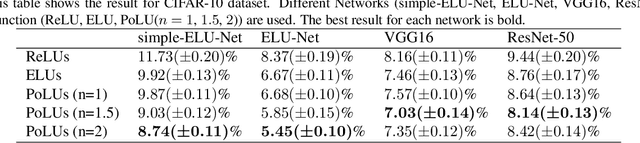

In this paper, we introduce "Power Linear Unit" (PoLU) which increases the nonlinearity capacity of a neural network and thus helps improving its performance. PoLU adopts several advantages of previously proposed activation functions. First, the output of PoLU for positive inputs is designed to be identity to avoid the gradient vanishing problem. Second, PoLU has a non-zero output for negative inputs such that the output mean of the units is close to zero, hence reducing the bias shift effect. Thirdly, there is a saturation on the negative part of PoLU, which makes it more noise-robust for negative inputs. Furthermore, we prove that PoLU is able to map more portions of every layer's input to the same space by using the power function and thus increases the number of response regions of the neural network. We use image classification for comparing our proposed activation function with others. In the experiments, MNIST, CIFAR-10, CIFAR-100, Street View House Numbers (SVHN) and ImageNet are used as benchmark datasets. The neural networks we implemented include widely-used ELU-Network, ResNet-50, and VGG16, plus a couple of shallow networks. Experimental results show that our proposed activation function outperforms other state-of-the-art models with most networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge