Towards Secure and Practical Machine Learning via Secret Sharing and Random Permutation

Aug 18, 2021

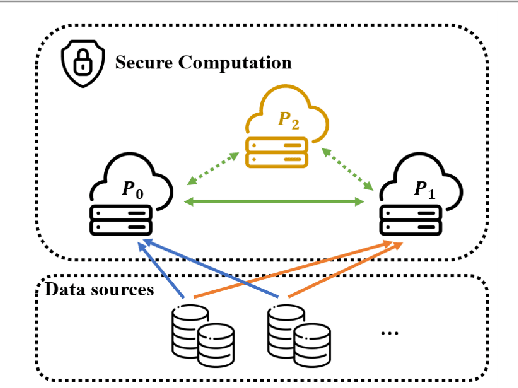

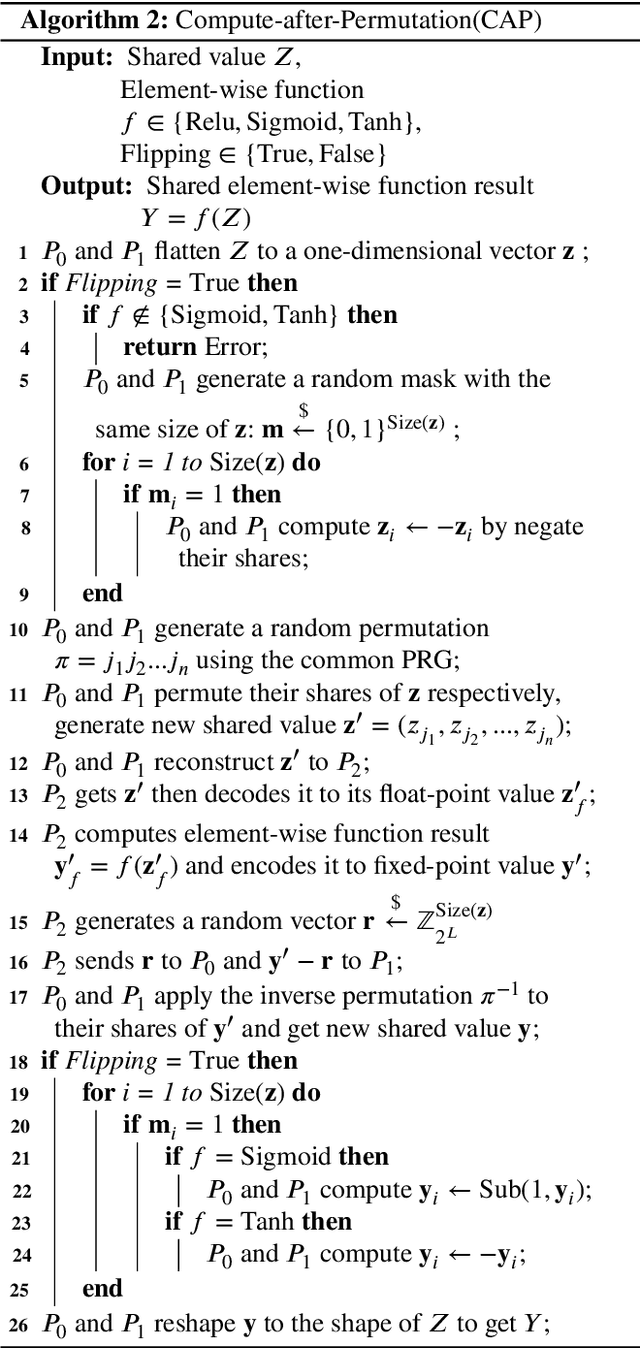

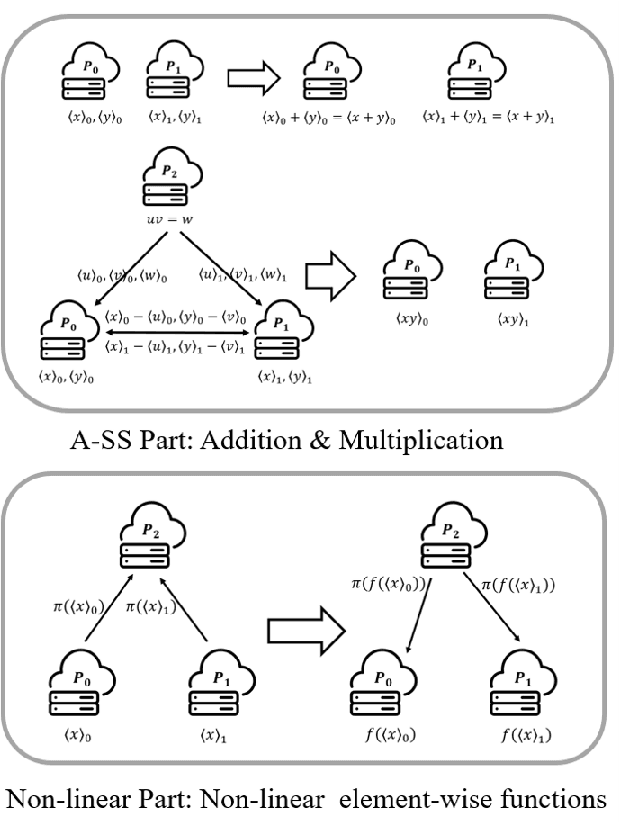

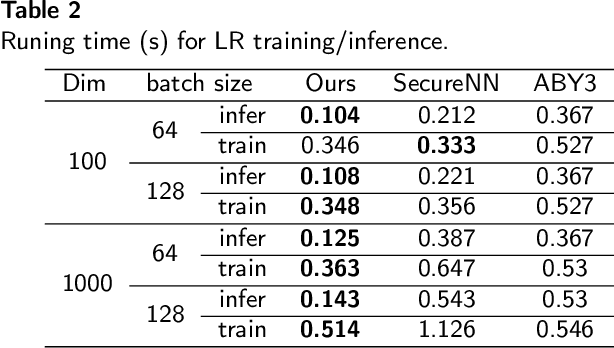

With the growing demand for privacy protection, privacy-preserving machine learning has drawn much attention in both academia and industry. However, most existing methods have limitations in practical applications. On the one hand, although most cryptographic methods are provably secure, they incur heavy computation and communication costs. On the other hand, the security of many relatively efficient private methods (e.g., federated learning and split learning) is being questioned, since they are not provably secure. Inspired by previous work on privacy-preserving machine learning, we build a privacy-preserving machine learning framework by combining random permutation and arithmetic secret sharing via our compute-after-permutation technique. Since our method reduces the cost of element-wise function computation, it is more efficient than existing cryptographic methods. Moreover, by adopting distance correlation as a metric for privacy leakage, we demonstrate that our method is more secure than previous methods that are not provably secure. Overall, our proposal achieves a good balance between security and efficiency. Experimental results show that our method not only is up to 6x faster and uses up to 85% less network traffic than state-of-the-art cryptographic methods, but also leaks less private information during training than methods that are not provably secure.
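To make the two building blocks named in the abstract concrete, the following is a minimal sketch of additive (arithmetic) secret sharing combined with a random permutation applied before an element-wise function. It is one plausible reading of "compute-after-permutation", not the paper's actual protocol: the ring size `MODULUS`, the three-role layout (two share holders plus a helper), and every function name here are assumptions made for illustration.

```python
import random

MODULUS = 2**32  # assumed ring size, chosen only for illustration


def share(values, rng):
    """Split each integer into two additive shares modulo MODULUS."""
    share0 = [rng.randrange(MODULUS) for _ in values]
    share1 = [(v - s) % MODULUS for v, s in zip(values, share0)]
    return share0, share1


def reconstruct(share0, share1):
    """Add two share vectors element-wise to recover ring elements."""
    return [(a + b) % MODULUS for a, b in zip(share0, share1)]


def to_signed(x):
    """Map a ring element back to a signed integer."""
    return x - MODULUS if x >= MODULUS // 2 else x


def relu(v):
    """Example element-wise function."""
    return max(v, 0)


def permuted_elementwise(share0, share1, func, rng):
    """Hypothetical flow: permute shares, let a helper apply func, re-share."""
    n = len(share0)
    # The share holders agree on a permutation that the helper does not know.
    perm = list(range(n))
    rng.shuffle(perm)
    permuted0 = [share0[i] for i in perm]
    permuted1 = [share1[i] for i in perm]
    # The helper sees the values only in shuffled order and applies func.
    shuffled = [to_signed(v) for v in reconstruct(permuted0, permuted1)]
    shuffled_out = [func(v) % MODULUS for v in shuffled]
    out0_perm, out1_perm = share(shuffled_out, rng)
    # The share holders undo the permutation on the fresh shares.
    out0, out1 = [0] * n, [0] * n
    for position, original_index in enumerate(perm):
        out0[original_index] = out0_perm[position]
        out1[original_index] = out1_perm[position]
    return out0, out1
```

Sharing `[-3, 0, 5, -1, 7]`, calling `permuted_elementwise` with `relu`, and reconstructing the result yields `[0, 0, 5, 0, 7]`. In this sketch the helper learns the multiset of values but not their positions, which is the kind of residual leakage the abstract proposes to quantify with distance correlation. A real deployment would draw randomness from a cryptographically secure source rather than `random.Random`.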
