Towards Practical Differential Privacy in Data Analysis: Understanding the Effect of Epsilon on Utility in Private ERM

Paper and Code

Jun 06, 2022

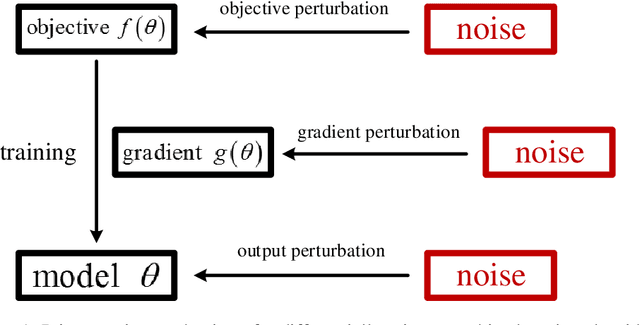

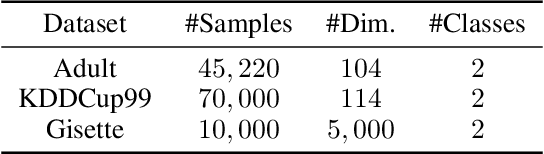

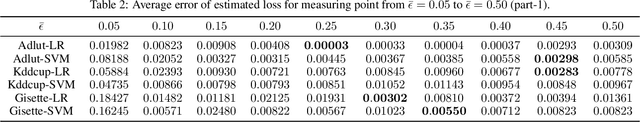

In this paper, we focus our attention on private Empirical Risk Minimization (ERM), which is one of the most commonly used data analysis method. We take the first step towards solving the above problem by theoretically exploring the effect of epsilon (the parameter of differential privacy that determines the strength of privacy guarantee) on utility of the learning model. We trace the change of utility with modification of epsilon and reveal an established relationship between epsilon and utility. We then formalize this relationship and propose a practical approach for estimating the utility under an arbitrary value of epsilon. Both theoretical analysis and experimental results demonstrate high estimation accuracy and broad applicability of our approach in practical applications. As providing algorithms with strong utility guarantees that also give privacy when possible becomes more and more accepted, our approach would have high practical value and may be likely to be adopted by companies and organizations that would like to preserve privacy but are unwilling to compromise on utility.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge