Towards Personalized Explanation of Robotic Planning via User Feedback


Nov 01, 2020

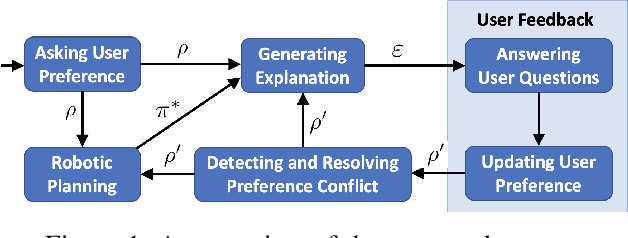

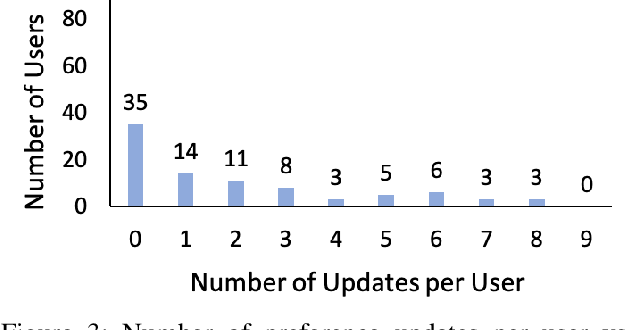

Prior studies have found that explaining robots' decisions and actions helps improve system transparency, increases human users' trust in robots, and enables effective human-robot collaboration. Users differ in what they want an explanation to include, yet little research has addressed the generation of personalized explanations. In this paper, we present a system for generating personalized explanations of robotic planning via user feedback. We consider robotic planning using Markov decision processes (MDPs) and develop an algorithm that automatically generates a personalized explanation of an optimal robotic plan (i.e., an optimal MDP policy) based on the user's preferences regarding four elements: objective, locality, specificity, and abstraction. In addition, the system interacts with users by answering their follow-up questions about the generated explanations. Users can update their preferences to view different explanations, and the system detects and resolves any preference conflicts through user interaction. Our user study results show that the generated personalized explanations improve user satisfaction and that the majority of users liked the system's question-answering and its conflict detection and resolution capabilities.
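To make the notion of "an optimal MDP policy" and a preference-dependent explanation concrete, here is a minimal Python sketch. It is not the paper's algorithm, which the abstract does not specify. It uses standard value iteration on a hypothetical three-state chain MDP, and the `explain` function, its `locality` argument, and the sentence template are illustrative assumptions covering only one of the four preference elements.

```python
from typing import Dict, List, Optional, Tuple

State = int
Action = str
# transitions[(state, action)] = list of (probability, next_state, reward)
Transitions = Dict[Tuple[State, Action], List[Tuple[float, State, float]]]


def q_value(s, a, values, transitions, gamma):
    """Expected return of taking action a in state s, then following values."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in transitions[(s, a)])


def value_iteration(states, actions, transitions: Transitions,
                    gamma: float = 0.9, tol: float = 1e-8):
    """Standard value iteration; returns (state values, greedy policy)."""
    values = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            best = max(q_value(s, a, values, transitions, gamma) for a in actions)
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break
    policy = {
        s: max(actions, key=lambda a: q_value(s, a, values, transitions, gamma))
        for s in states
    }
    return values, policy


def explain(policy, values, locality: str = "global",
            state: Optional[State] = None) -> List[str]:
    """Hypothetical explanation filter: 'local' describes one state,
    'global' describes the whole policy."""
    if locality == "local":
        if state is None:
            raise ValueError("a local explanation needs a state")
        chosen = [state]
    else:
        chosen = sorted(policy)
    return [
        f"In state {s}, take '{policy[s]}' (expected return {values[s]:.2f})."
        for s in chosen
    ]


# Hypothetical chain MDP: states 0 and 1 cost -1 per move, state 2 is an absorbing goal.
states = [0, 1, 2]
actions = ["left", "right"]
transitions: Transitions = {
    (0, "left"): [(1.0, 0, -1.0)], (0, "right"): [(1.0, 1, -1.0)],
    (1, "left"): [(1.0, 0, -1.0)], (1, "right"): [(1.0, 2, -1.0)],
    (2, "left"): [(1.0, 2, 0.0)],  (2, "right"): [(1.0, 2, 0.0)],
}

values, policy = value_iteration(states, actions, transitions)
local_explanation = explain(policy, values, locality="local", state=0)
global_explanation = explain(policy, values, locality="global")
```

In this sketch a user who prefers a local explanation sees a single sentence about the queried state, while a user who prefers a global one sees a sentence for every state. A fuller system would presumably also vary the objective, specificity, and abstraction of each sentence.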
