Towards Improving Generalization of Deep Networks via Consistent Normalization

Paper and Code

Aug 31, 2019

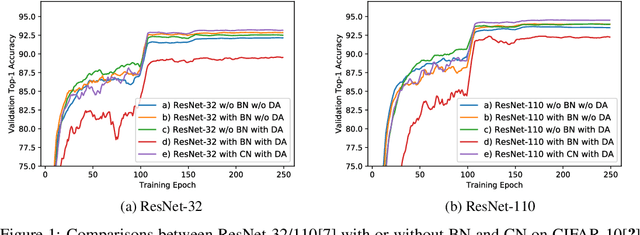

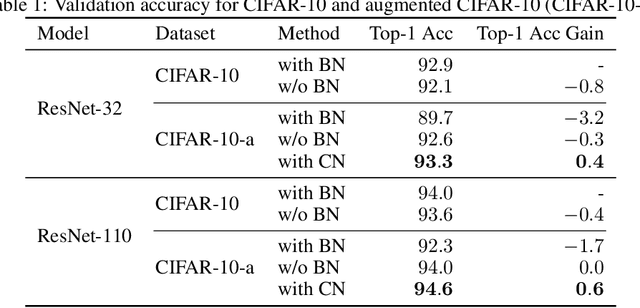

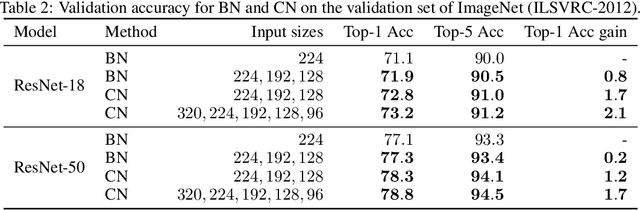

Batch Normalization (BN) was shown to accelerate training and improve generalization of Convolutional Neural Networks (ConvNets), which typically use the Conv-BN couple as building block. However, this work shows a common phenomenon that the Conv-BN module does not necessarily outperform the networks trained without using BN, especially when data augmentation is presented in training. We find that this phenomenon occurs because there is inconsistency between the distribution of the augmented data and that of the normalized representation. To address this issue, we propose Consistent Normalization (CN) that not only retains the advantages of the existing normalization methods, but also achieves state-of-the-art performance on various tasks including image classification, segmentation, and machine translation. The code will be released to facilitate reproducibility.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge