Toward Deeper Understanding of Nonconvex Stochastic Optimization with Momentum using Diffusion Approximations


Oct 01, 2018

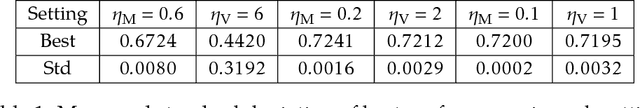

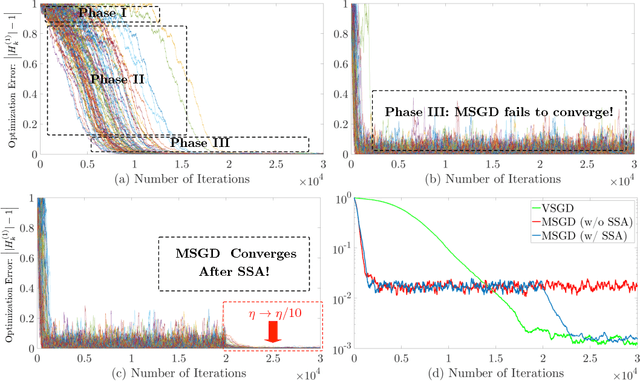

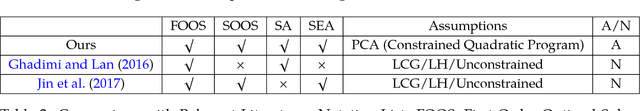

The Momentum Stochastic Gradient Descent (MSGD) algorithm has been widely applied to many nonconvex optimization problems in machine learning. Popular examples include training deep neural networks and dimensionality reduction. Because of the lack of convexity and the extra momentum term, the optimization theory of MSGD is still largely unknown. In this paper, we study this fundamental optimization algorithm based on the so-called "strict saddle problem." Using a diffusion approximation analysis, we show that momentum helps the iterates escape from saddle points but hurts convergence within the neighborhood of optima when the step size is not annealed. Our theoretical discovery partially corroborates the empirical success of MSGD in training deep neural networks. Moreover, our analysis applies the martingale method and the "Fixed-State-Chain" method from the stochastic approximation literature, which are of independent interest.
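To make the saddle-escape behavior concrete, here is a minimal sketch of momentum SGD in its common heavy-ball form. The abstract does not state the exact update rule or the problem analyzed in the paper, so everything here is an assumption chosen for illustration: the toy objective f(x1, x2) = (x1^2 - 1)^2 / 4 + x2^2 / 2 (a strict saddle at the origin, minima at (±1, 0)), the Gaussian gradient noise, and the hypothetical names `grad` and `msgd` with their parameter values.

```python
import random


def grad(x):
    # Gradient of the assumed toy objective
    # f(x1, x2) = (x1^2 - 1)^2 / 4 + x2^2 / 2.
    return [x[0] ** 3 - x[0], x[1]]


def msgd(x0, step_size=0.01, momentum=0.9, noise_std=0.1,
         n_steps=5000, seed=0):
    # Heavy-ball momentum SGD with a constant step size (no annealing).
    # Gaussian noise added to the exact gradient stands in for
    # stochastic gradients; this noise model is an assumption.
    rng = random.Random(seed)
    x = list(x0)
    v = [0.0] * len(x)
    for _ in range(n_steps):
        g = grad(x)
        for i in range(len(x)):
            noisy_g = g[i] + rng.gauss(0.0, noise_std)
            v[i] = momentum * v[i] - step_size * noisy_g
            x[i] += v[i]
    return x


# Start exactly at the saddle point; the noise pushes the iterate
# off the saddle and the momentum term amplifies the escape.
x_final = msgd([0.0, 0.0])
print(x_final)
```

With a constant step size the iterate does not settle exactly at a minimum but keeps fluctuating around it, which is the regime where the abstract says momentum hurts convergence. Rerunning with `momentum=0.0` gives a plain SGD baseline for comparison on this toy problem.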
