Touch-to-Touch Translation -- Learning the Mapping Between Heterogeneous Tactile Sensing Technologies

Paper and Code

Nov 04, 2024

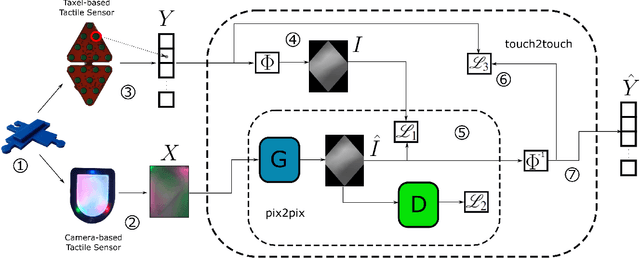

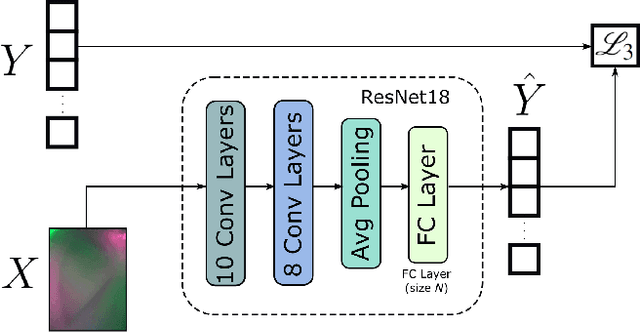

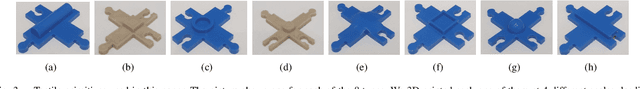

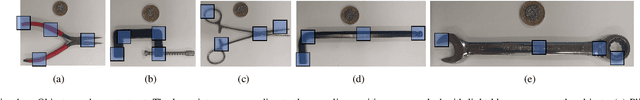

The use of data-driven techniques for tactile data processing and classification has recently increased. However, collecting tactile data is a time-expensive and sensor-specific procedure. Indeed, due to the lack of hardware standards in tactile sensing, data is required to be collected for each different sensor. This paper considers the problem of learning the mapping between two tactile sensor outputs with respect to the same physical stimulus -- we refer to this problem as touch-to-touch translation. In this respect, we proposed two data-driven approaches to address this task and we compared their performance. The first one exploits a generative model developed for image-to-image translation and adapted for this context. The second one uses a ResNet model trained to perform a regression task. We validated both methods using two completely different tactile sensors -- a camera-based, Digit and a capacitance-based, CySkin. In particular, we used Digit images to generate the corresponding CySkin data. We trained the models on a set of tactile features that can be found in common larger objects and we performed the testing on a previously unseen set of data. Experimental results show the possibility of translating Digit images into the CySkin output by preserving the contact shape and with an error of 15.18% in the magnitude of the sensor responses.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge