To Monitor Or Not: Observing Robot's Behavior based on a Game-Theoretic Model of Trust


Apr 06, 2019

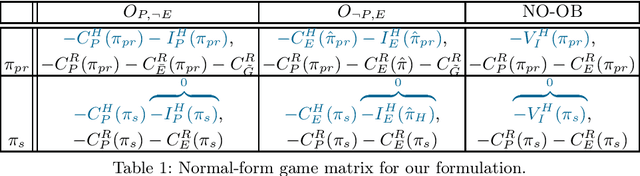

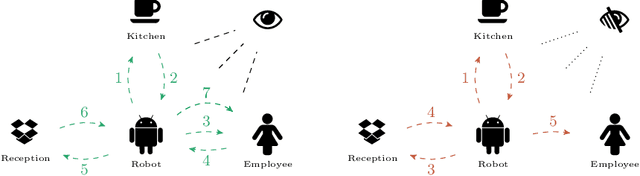

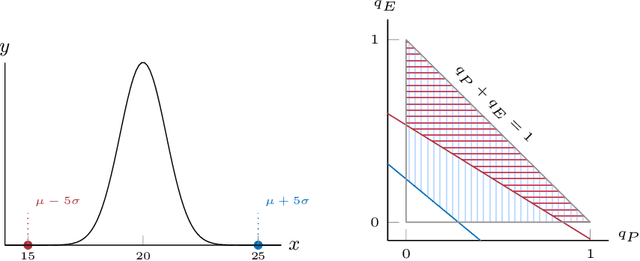

In scenarios where a robot generates and executes a plan, the generated plan may be less costly for the robot to execute but incomprehensible to the human. When the human acts as a supervisor and is held accountable for the robot's plan, the human may be at higher risk if the incomprehensible behavior is deemed unsafe. In such cases, the robot, which may be unaware of the human's exact expectations, may choose to (1) execute the most constrained plan (i.e., one preferred by all possible supervisors), incurring the added cost of highly sub-optimal behavior while the human is observing it, and (2) deviate to a more optimal plan when the human looks away. These problems are amplified when the robot has to fulfill multiple goals and cater to the needs of different human supervisors. In such settings, the robot, being a rational agent, should take any chance it gets to deviate to a lower-cost plan. On the other hand, continuously monitoring the robot's behavior is often difficult for humans because it costs them valuable resources (e.g., time, effort, and cognitive load). To minimize the cost of constant monitoring while ensuring that the robot follows the *safe* behavior, we model this problem in the game-theoretic framework of trust, where the human is the agent that trusts the robot. We show that the notion of the human's trust, which is well-defined when there is a pure strategy equilibrium, is inversely proportional to the probability the human assigns to observing the robot's behavior. We then show that, with high probability, our game lacks a pure strategy Nash equilibrium. This forces us to define a trust boundary over the human's mixed strategies in order to guarantee safe behavior by the robot.
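The interplay between monitoring and deviation described above can be illustrated with a generic 2x2 inspection game. The following is a minimal sketch, not the authors' model. All payoff parameters are hypothetical assumptions:

* `c_safe` and `c_dev` are the robot's plan costs.
* `penalty` is what the robot pays when caught deviating.
* `monitor_cost` is the human's cost of observing.
* `loss` is the human's loss from an unobserved deviation.

The sketch checks for pure strategy Nash equilibria by brute force and computes the indifference points of the mixed equilibrium. The monitoring threshold `q*` plays the role of a trust boundary: the robot weakly prefers the safe plan only when the human monitors with probability at least `q*`.

```python
from fractions import Fraction
from itertools import product

# Hypothetical 2x2 inspection game; an illustration, NOT the paper's exact model.
HUMAN_ACTIONS = ("monitor", "ignore")
ROBOT_ACTIONS = ("safe", "deviate")


def payoffs(c_safe, c_dev, penalty, monitor_cost, loss):
    """Return {(human_action, robot_action): (human_utility, robot_utility)}."""
    return {
        ("monitor", "safe"): (-monitor_cost, -c_safe),
        ("monitor", "deviate"): (-monitor_cost, -c_dev - penalty),  # deviation caught
        ("ignore", "safe"): (0, -c_safe),
        ("ignore", "deviate"): (-loss, -c_dev),  # unobserved deviation hurts the human
    }


def pure_nash(game):
    """List all (human, robot) action pairs where neither player gains by deviating alone."""
    equilibria = []
    for h, r in product(HUMAN_ACTIONS, ROBOT_ACTIONS):
        u_h, u_r = game[(h, r)]
        human_best = all(u_h >= game[(h2, r)][0] for h2 in HUMAN_ACTIONS)
        robot_best = all(u_r >= game[(h, r2)][1] for r2 in ROBOT_ACTIONS)
        if human_best and robot_best:
            equilibria.append((h, r))
    return equilibria


def monitoring_threshold(c_safe, c_dev, penalty):
    """Monitoring probability q* at which the robot is indifferent between plans.

    Solves -c_safe = q * (-c_dev - penalty) + (1 - q) * (-c_dev) for q.
    """
    return Fraction(c_safe - c_dev, penalty)


def robot_deviation_prob(monitor_cost, loss):
    """Deviation probability p* that makes the human indifferent: -monitor_cost = p * (-loss)."""
    return Fraction(monitor_cost, loss)


def robot_best_response(c_safe, c_dev, penalty, q):
    """Robot's best plan when the human monitors with probability q (ties go to 'safe')."""
    deviate_utility = -c_dev - q * penalty
    return "safe" if -c_safe >= deviate_utility else "deviate"


if __name__ == "__main__":
    game = payoffs(c_safe=10, c_dev=4, penalty=12, monitor_cost=2, loss=8)
    print(pure_nash(game))                        # no pure equilibrium for these payoffs
    print(monitoring_threshold(10, 4, 12))        # q* = (10 - 4) / 12
    print(robot_deviation_prob(2, 8))             # p* = 2 / 8
```

Consider the assumed payoffs above, where `penalty > c_safe - c_dev` and `loss > monitor_cost`. Under these values, every action pair gives one player a reason to switch, so `pure_nash` returns an empty list. The human must therefore monitor with probability at least 1/2 to keep the robot on the safe plan. If the penalty is too small (e.g., `penalty=3`), the robot always deviates and `("monitor", "deviate")` becomes a pure equilibrium.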
