Tight Regret Bounds for Infinite-armed Linear Contextual Bandits

Paper and Code

May 04, 2019

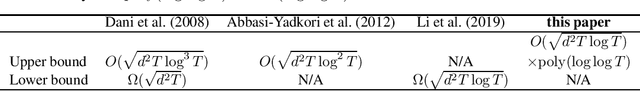

The linear contextual bandit is a class of sequential decision-making problems with important applications in recommendation systems, online advertising, healthcare, and other machine learning tasks. Despite much prior research, tight regret bounds for linear contextual bandits with infinite action sets remain open. In this paper, we prove a regret upper bound of $O(\sqrt{d^2T\log T})\times \mathrm{poly}(\log\log T)$, where $d$ is the domain dimension and $T$ is the time horizon. Our upper bound matches the previous lower bound of $\Omega(\sqrt{d^2 T\log T})$ up to iterated logarithmic terms.
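
To make the matching claim concrete, the two rates can be placed side by side. This only restates the bounds quoted above with constants suppressed. The symbol $R_T^{*}$ is notation assumed here for the minimax regret over $T$ rounds, and the degree of the $\log\log T$ polynomial is not given in the abstract. Since $d > 0$, $\sqrt{d^2 T\log T} = d\sqrt{T\log T}$, so

$$
\Omega\!\left(d\sqrt{T\log T}\right) \;\le\; R_T^{*} \;\le\; O\!\left(d\sqrt{T\log T}\right)\cdot \mathrm{poly}(\log\log T).
$$

The gap between the two sides is therefore at most a $\mathrm{poly}(\log\log T)$ factor, which is what "up to iterated logarithmic terms" refers to.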

* 9 pages
