The Role of Global Labels in Few-Shot Classification and How to Infer Them

Paper and Code

Aug 09, 2021

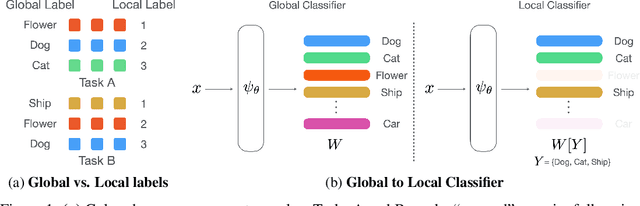

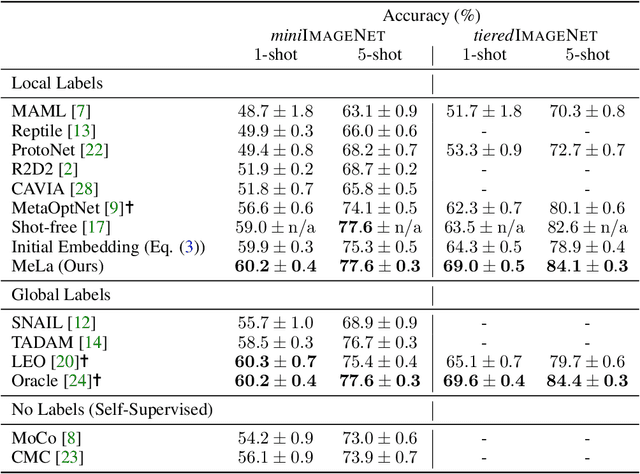

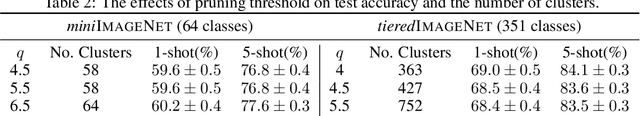

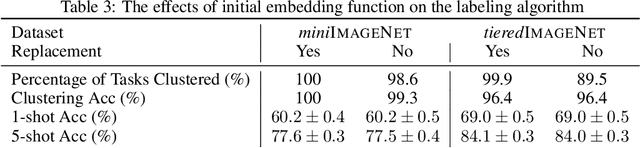

Few-shot learning (FSL) is a central problem in meta-learning, where learners must quickly adapt to new tasks given limited training data. Surprisingly, recent works have outperformed meta-learning methods tailored to FSL by casting it as standard supervised learning to jointly classify all classes shared across tasks. However, this approach violates the standard FSL setting by requiring global labels shared across tasks, which are often unavailable in practice. In this paper, we show why solving FSL via standard classification is theoretically advantageous. This motivates us to propose Meta Label Learning (MeLa), a novel algorithm that infers global labels and obtains robust few-shot models via standard classification. Empirically, we demonstrate that MeLa outperforms meta-learning competitors and is comparable to the oracle setting where ground truth labels are given. We provide extensive ablation studies to highlight the key properties of the proposed strategy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge