The Practical Challenges of Active Learning: Lessons Learned from Live Experimentation


Jun 28, 2019

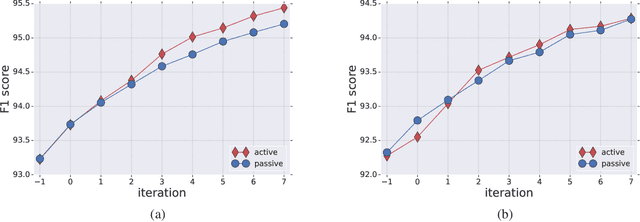

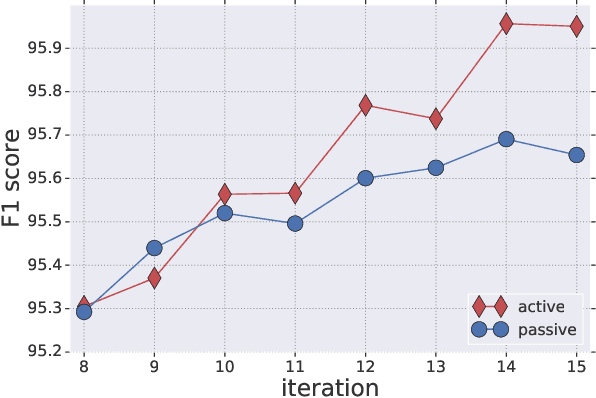

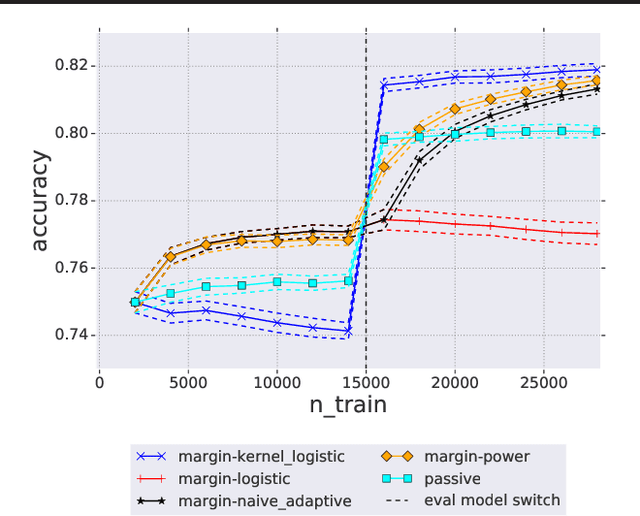

We tested, in a live setting, active learning as a way to select text sentences for human annotation; the annotations were used to train a machine learning model for Thai segmentation. We built two annotated samples concurrently: one by randomly sampling sentences from a text corpus, the other by scoring and ranking sentences from the same corpus with a model. During the experiment, we observed the effects of significant changes to the learning environment, of the kind likely to occur in real-world learning tasks. We describe how our active learning strategy interacted with these events and discuss other practical challenges of using active learning in a live setting.

* Presented at 2019 ICML Workshop on Human in the Loop Learning (HILL 2019), Long Beach, USA
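
The two sampling arms described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not say how sentences were scored, so the entropy-based score, the function names (`boundary_entropy`, `select_random`, `select_by_score`), and the `predict_probs` callback are all assumptions made for illustration. The sketch assumes a hypothetical segmentation model that returns, for each sentence, a list of word-boundary probabilities.

```python
import math
import random


def boundary_entropy(probs):
    """Mean binary entropy (in bits) of per-position boundary probabilities.

    Assumption: uncertainty is used as the ranking score; the source
    does not specify the scoring function.
    """
    if not probs:
        return 0.0
    total = 0.0
    for p in probs:
        # Probabilities of exactly 0 or 1 contribute zero entropy.
        if 0.0 < p < 1.0:
            total -= p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p)
    return total / len(probs)


def select_random(sentences, k, seed=0):
    """Random arm: draw k sentences uniformly without replacement."""
    rng = random.Random(seed)
    return rng.sample(list(sentences), k)


def select_by_score(sentences, k, predict_probs):
    """Model-based arm: rank sentences by score and keep the top k.

    predict_probs is a hypothetical callable mapping a sentence to a
    list of boundary probabilities from the current model.
    """
    ranked = sorted(
        sentences,
        key=lambda s: boundary_entropy(predict_probs(s)),
        reverse=True,
    )
    return ranked[:k]


# Toy stand-in for model output (made-up values, not experimental data).
toy_probs = {
    "a": [0.5, 0.5],    # maximally uncertain
    "b": [0.99, 0.01],  # confident
    "c": [0.7, 0.9],    # moderately uncertain
}
corpus = list(toy_probs)

random_batch = select_random(corpus, 2, seed=0)
active_batch = select_by_score(corpus, 2, toy_probs.get)
```

In a live loop, both batches would be sent to annotators, the model retrained on the new labels, and the corpus re-scored before the next round; only the model-based arm depends on the current state of the model.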
