The paradox of the compositionality of natural language: a neural machine translation case study

Paper and Code

Aug 12, 2021

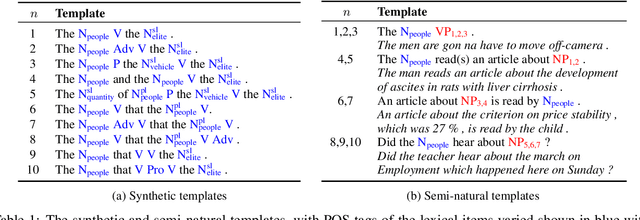

Moving towards human-like linguistic performance is often argued to require compositional generalisation. Whether neural networks exhibit this ability is typically studied using artificial languages, for which the compositionality of input fragments can be guaranteed and their meanings algebraically composed. However, compositionality in natural language is vastly more complex than this rigid, arithmetics-like version of compositionality, and as such artificial compositionality tests do not allow us to draw conclusions about how neural models deal with compositionality in more realistic scenarios. In this work, we re-instantiate three compositionality tests from the literature and reformulate them for neural machine translation (NMT). The results highlight two main issues: the inconsistent behaviour of NMT models and their inability to (correctly) modulate between local and global processing. Aside from an empirical study, our work is a call to action: we should rethink the evaluation of compositionality in neural networks of natural language, where composing meaning is not as straightforward as doing the math.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge