The Geometric Occam's Razor Implicit in Deep Learning

Dec 01, 2021

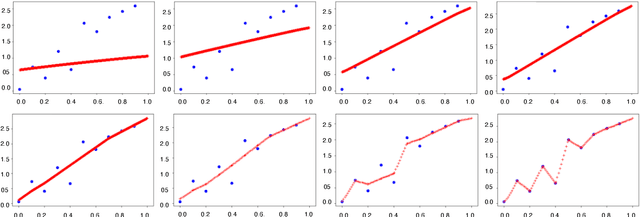

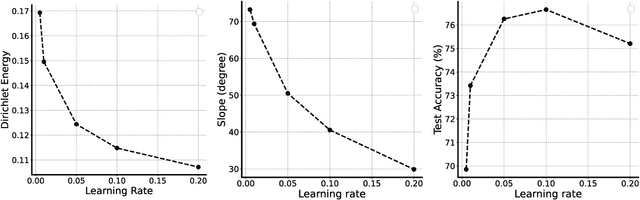

In over-parameterized deep neural networks there can be many possible parameter configurations that fit the training data exactly. However, the properties of these interpolating solutions are poorly understood. We argue that over-parameterized neural networks trained with stochastic gradient descent are subject to a Geometric Occam's Razor; that is, these networks are implicitly regularized by the geometric model complexity. For one-dimensional regression, the geometric model complexity is simply given by the arc length of the function. For higher-dimensional settings, the geometric model complexity depends on the Dirichlet energy of the function. We explore the relationship between this Geometric Occam's Razor, the Dirichlet energy and other known forms of implicit regularization. Finally, for ResNets trained on CIFAR-10, we observe that Dirichlet energy measurements are consistent with the action of this implicit Geometric Occam's Razor.
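To make the two complexity measures concrete, here is a minimal NumPy sketch. It assumes the standard arc length of the graph of f on an interval, ∫ sqrt(1 + f'(x)^2) dx, approximated by a polyline. It also assumes the Dirichlet energy is the mean squared norm of the input gradient over a set of data points; the paper's exact normalization may differ. The helper names `arc_length` and `dirichlet_energy` are hypothetical. Central finite differences stand in for the automatic differentiation you would use on a real network.

```python
import numpy as np

def arc_length(f, a, b, n=10_000):
    """Approximate the arc length of the graph of f on [a, b] by summing
    the lengths of n straight segments between sampled points."""
    x = np.linspace(a, b, n + 1)
    y = f(x)
    return float(np.sum(np.hypot(np.diff(x), np.diff(y))))

def dirichlet_energy(f, X, eps=1e-5):
    """Estimate the Dirichlet energy of a scalar function f: R^d -> R as the
    mean squared norm of its input gradient over the points X (shape (m, d)).
    f must map an (m, d) array to an (m,) array. Gradients are taken by
    central finite differences (an assumption; autodiff would be used in practice)."""
    X = np.asarray(X, dtype=float)
    m, d = X.shape
    grads = np.zeros((m, d))
    for j in range(d):
        e = np.zeros(d)
        e[j] = eps
        grads[:, j] = (f(X + e) - f(X - e)) / (2 * eps)
    return float(np.mean(np.sum(grads ** 2, axis=1)))

# Two functions that both pass through every point x = k/20 on [0, 1],
# i.e. two interpolants of the same 1-D training data.
straight = lambda x: x
wiggly = lambda x: x + 0.1 * np.sin(20 * np.pi * x)
print(arc_length(straight, 0.0, 1.0))  # ≈ 1.41421 (sqrt(2))
print(arc_length(wiggly, 0.0, 1.0))    # larger than sqrt(2)

# A linear map f(x) = w·x has constant gradient w, so its Dirichlet energy is ||w||^2.
w = np.array([1.0, 2.0])
X = np.random.default_rng(0).normal(size=(100, 2))
print(dirichlet_energy(lambda Z: Z @ w, X))  # ≈ 5.0
```

Both curves fit the same points exactly, but the straight line has the smaller arc length. That is the kind of interpolating solution a Geometric Occam's Razor would favour in the one-dimensional case.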
