The Evolutionary Dynamics of Independent Learning Agents in Population Games


Jun 29, 2020

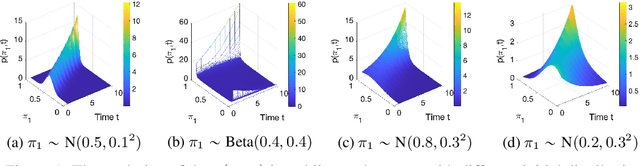

Understanding the evolutionary dynamics of reinforcement learning in multi-agent settings has long remained an open problem. Whereas previous work focuses primarily on 2-player games, we consider population games, which model the strategic interactions of a large population of small, anonymous agents. This paper presents a formal relation between stochastic processes and the dynamics of independent learning agents who reason based on reward signals. Using a master equation approach, we provide a novel unified framework for characterising population dynamics via a single partial differential equation (Theorem 1). Through a case study involving Cross learning agents, we illustrate that Theorem 1 allows us to identify qualitatively different evolutionary dynamics, to analyse steady states, and to gain insights into the expected behaviour of a population. In addition, we present extensive experimental results validating that Theorem 1 holds for a variety of learning methods and population games.
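To make the Cross learning case study concrete, the following is a minimal sketch of a population of independent Cross learners. It is not the paper's code. The update rule is the standard Cross learning rule (rewards scaled to [0, 1]); the two-action anti-coordination payoff, the function names `cross_update` and `simulate`, and all parameter values are assumptions chosen purely for illustration.

```python
import numpy as np


def cross_update(policy, action, reward):
    """One Cross learning step for a single agent.

    Standard rule, with reward in [0, 1]:
      x_a <- x_a + r * (1 - x_a)   for the chosen action a
      x_b <- x_b - r * x_b         for every other action b
    """
    new_policy = policy * (1.0 - reward)  # shrink every probability by (1 - r)
    new_policy[action] += reward          # give the freed mass to the chosen action
    return new_policy


def simulate(num_agents=100, num_steps=200, seed=0):
    """Independent Cross learners in a hypothetical two-action population game."""
    rng = np.random.default_rng(seed)
    # Each agent starts from a random mixed policy over two actions.
    policies = rng.dirichlet(np.ones(2), size=num_agents)
    for _ in range(num_steps):
        # Every agent samples an action from its own policy.
        actions = np.array([rng.choice(2, p=p) for p in policies])
        # Fraction of the population playing each action this round.
        share = np.bincount(actions, minlength=2) / num_agents
        # Assumed payoff (illustrative only): an action pays more when fewer
        # agents choose it, so rewards stay within [0, 1].
        rewards = 1.0 - share[actions]
        for i in range(num_agents):
            policies[i] = cross_update(policies[i], actions[i], rewards[i])
    return policies


if __name__ == "__main__":
    final = simulate()
    print("mean probability of action 0:", final[:, 0].mean())
```

Each agent updates only from its own action and reward, which is the sense in which the learners are independent; the population-level object of interest is the distribution of the rows of `policies` over time.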
